Pokemon Color Transfer

In this project, we focus on two primary goals:

a. Style preservation: the recolored image should adopt the color style, mood, or chromatic distribution of another image.

b. Aesthetic quality: the recolored Pokemon should look visually pleasing and harmonious.

To achieve these goals, we apply palette-based color transfer methods to generate new Pokemon styles and systematically evaluate the results. Aesthetic quality is assessed through a human-preference survey questionnaire, while similarity to the original image is measured using quantitative metrics such as FID, SSIM, and other perceptual distance measures. Together, these evaluations allow us to study both the creativity and fidelity of color-transferred Pokemon, providing a balanced assessment of stylistic expressiveness and structural preservation.

== Background ==

=== Color and Style Transfer ===

Style and color transfer [1] is a fundamental technique in computer vision and computational photography that reinterprets an image by borrowing visual characteristics from another. Early work focused on texture synthesis and color statistics matching [2], enabling simple recoloring or pattern transfer across images. With the rise of deep learning, neural style transfer demonstrated that high-level artistic patterns (such as brushstrokes, textures, and global color mood) could be extracted from one image and blended with the structural content of another. Modern approaches further incorporate perceptual features, palette manipulation, and learned representations to achieve more faithful, controllable, and efficient transformations. These methods are now widely used in artistic rendering, photo enhancement, film post-production, augmented reality, and low-power edge-device imaging pipelines [3].

In this project, color transfer aims to modify the color appearance of each image in a pair so that it adopts the color style, mood, or chromatic distribution of the other image, while still looking pleasing to human viewers.

=== HSV Color Space ===

HSV (Hue-Saturation-Value) is a cylindrical color space that separates chromatic information from intensity, making it particularly suitable for color manipulation tasks. Unlike RGB, which mixes color and brightness, HSV represents:

* '''Hue (H)''': the color's position on the color wheel (0–360°, or [0, 1) when normalized)

* '''Saturation (S)''': color purity (0–1), from grayscale to pure color

* '''Value (V)''': brightness (0–1), from black to full intensity

'''Reasons to use HSV for Color Transfer'''

We use HSV alongside LAB in our pipeline for complementary purposes:

* '''Intuitive Color Shifts''': HSV allows direct manipulation of color properties. Hue differences (ΔH) naturally represent color-to-color mappings (e.g., green → blue), while saturation and value adjustments preserve tonal relationships.

* '''Perceptual Separation''': By decoupling chromatic content (H, S) from brightness (V), we can modify colors while preserving the shading and depth cues that define a Pokémon's structure.

* '''Gradient Preservation''': When transferring between palette colors, HSV shifts maintain smooth color transitions better than direct RGB interpolation. This is critical for preserving gradients in Pokémon artwork (e.g., the shading on Squirtle's shell).

* '''Complementary to LAB''': While LAB excels at perceptual distance measurement (used in palette matching), HSV excels at color transformation. Our HSV-based methods use LAB to determine ''which'' colors should map to each other, then HSV to compute ''how'' to shift pixel colors.

'''Implementation in Our Methods''':

* '''Baseline & Clustering methods''': Compute palette correspondence in LAB space (minimizing ΔE), then apply shifts in HSV

* '''Neighbor-based methods''': Use HSV shifts with spatial smoothing to maintain local color coherence

* '''Hue wrapping''': The circular nature of hue (red sits at both 0° and 360°) is handled via modulo arithmetic: <math>H' = (H + \Delta H) \bmod 1.0</math>

This dual color space strategy (LAB for perception-based matching, HSV for intuitive transformation) enables both accurate palette alignment and smooth, natural-looking color transitions in the transferred Pokémon images.
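The hue-wrapping rule and the claim that an HSV shift leaves shading intact can be checked with Python's standard <code>colorsys</code> module. This is a minimal sketch, not the project's code: the helper name <code>shift_hue</code> is hypothetical, and RGB channels are assumed to lie in [0, 1].

```python
import colorsys

def shift_hue(rgb, delta_h):
    """Rotate the hue of one RGB color (channels in [0, 1]) by delta_h.

    Hue is normalized to [0, 1), so the rotation uses H' = (H + dH) mod 1.0.
    Saturation and value are left untouched, which keeps the shading intact.
    """
    h, s, v = colorsys.rgb_to_hsv(*rgb)
    return colorsys.hsv_to_rgb((h + delta_h) % 1.0, s, v)

# A shaded green ramp: only V changes from pixel to pixel.
ramp = [(0.0, v, 0.0) for v in (0.2, 0.5, 0.9)]
# Green (H = 1/3) to blue (H = 2/3) is a hue shift of +1/3.
recolored = [shift_hue(px, 1 / 3) for px in ramp]
for px in recolored:
    print(tuple(round(c, 3) for c in px))   # a blue ramp with the same brightness

# Wrapping: shifting pure red (H = 0) by -0.1 lands at H = 0.9 instead of -0.1.
print(shift_hue((1.0, 0.0, 0.0), -0.1))
```

Because only H changes, each recolored pixel keeps its original V, which is the shading-preservation property the bullets above rely on.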

=== Palette Extraction ===

==== K-Means ====

We use K-Means [4] to cluster pixel values and use the cluster centers as the palette. To avoid clustering millions of individual pixels, we quantize RGB space into 16 bins per channel. For each pixel with RGB value <math>(r,g,b)</math>:

<math display="block">

=== Neighbor Segments ===

The Neighbor Segments (NS) method groups pixels into local, perceptually coherent regions and uses neighborhood relationships to guide smooth and consistent recoloring. Instead of treating each pixel independently, the image is first segmented into small, color-coherent regions, where each segment is a set of spatially adjacent pixels with similar color statistics. Let the image be segmented into

<math>S = \{s_1, s_2, \ldots, s_K\}</math>,

where each segment <math>s_k</math> contains pixels with similar color features.

This neighborhood-aware propagation allows the algorithm to maintain structural consistency, preserve texture boundaries, and generate recolorings that are both stable and visually coherent. Overall, Neighbor Segments provides a lightweight way to incorporate spatial smoothness into palette-based transfer, producing natural transitions while keeping computation efficient.
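The exact propagation rule is not reproduced in this excerpt, so the following is only an illustrative sketch of one common way to exploit segment neighborhoods, not the NS method itself: build a region adjacency graph from a segment label map, then blend each segment's color shift with the mean shift of its neighbors. The names <code>segment_adjacency</code> and <code>smooth_shifts</code> and the blending weight <code>alpha</code> are hypothetical.

```python
from collections import defaultdict

def segment_adjacency(labels):
    """Region adjacency graph from a 2-D grid of segment ids (4-connectivity)."""
    adj = defaultdict(set)
    rows, cols = len(labels), len(labels[0])
    for y in range(rows):
        for x in range(cols):
            a = labels[y][x]
            # Checking only the right and lower neighbor still visits every adjacent pair.
            for ny, nx in ((y, x + 1), (y + 1, x)):
                if ny < rows and nx < cols and labels[ny][nx] != a:
                    b = labels[ny][nx]
                    adj[a].add(b)
                    adj[b].add(a)
    return adj

def smooth_shifts(shifts, adj, alpha=0.5):
    """Blend each segment's color shift with the mean shift of its neighbors."""
    smoothed = {}
    for seg, own in shifts.items():
        nbrs = [shifts[n] for n in adj.get(seg, ()) if n in shifts]
        if not nbrs:
            smoothed[seg] = own                      # isolated segment: keep its shift
            continue
        mean = [sum(vals) / len(nbrs) for vals in zip(*nbrs)]
        smoothed[seg] = tuple((1 - alpha) * o + alpha * m for o, m in zip(own, mean))
    return smoothed

labels = [[0, 0, 1],
          [0, 2, 1]]
adj = segment_adjacency(labels)
print(dict(adj))
print(smooth_shifts({0: (0.0,), 1: (1.0,), 2: (1.0,)}, adj))
```

A larger <code>alpha</code> pulls neighboring segments toward a common shift, trading palette fidelity for smoother transitions.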

=== Clustering-based palette transfer ===

This method uses the K-means palettes (clusters of representative colors), establishes an optimal correspondence between them, and then applies per-pixel weighted color shifts.
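The K-means palette extraction this method starts from (see the K-Means subsection above) can be sketched in plain Python. The exact quantization formula is not reproduced in this excerpt, so the sketch assumes 8-bit channels, maps each pixel to the center of its 16-level-wide bin, and runs Lloyd's iterations on the occupied bins weighted by their pixel counts. The names <code>quantized_histogram</code> and <code>weighted_kmeans</code> are hypothetical.

```python
import random
from collections import Counter

def quantized_histogram(pixels, bins=16):
    """Count 8-bit RGB pixels per quantization bin, keyed by the bin center."""
    width = 256 // bins                                  # 16 bins -> each bin 16 levels wide
    counts = Counter()
    for r, g, b in pixels:
        center = tuple((c // width) * width + width / 2 for c in (r, g, b))
        counts[center] += 1
    return counts

def weighted_kmeans(counts, k, iters=20, seed=0):
    """Lloyd's K-means on bin centers, each weighted by its pixel count."""
    points = list(counts)
    rng = random.Random(seed)
    centers = rng.sample(points, k)                      # needs at least k occupied bins
    for _ in range(iters):
        sums = [[0.0, 0.0, 0.0] for _ in range(k)]
        totals = [0] * k
        for p in points:
            # Assign the bin to its nearest center (squared Euclidean distance in RGB).
            j = min(range(k), key=lambda i: sum((a - c) ** 2 for a, c in zip(p, centers[i])))
            totals[j] += counts[p]
            for d in range(3):
                sums[j][d] += counts[p] * p[d]
        # Move each center to the weighted mean of its bins; keep empty clusters in place.
        centers = [tuple(s / totals[j] for s in sums[j]) if totals[j] else centers[j]
                   for j in range(k)]
    return centers

pixels = [(250, 10, 10)] * 50 + [(10, 10, 250)] * 50     # toy red/blue image
palette = weighted_kmeans(quantized_histogram(pixels), k=2)
print(sorted(palette))
```

Clustering the occupied bins instead of raw pixels keeps the cost bounded by at most 16³ points, independent of image size.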

Given extracted palettes <math>P^A = \{p^A_1, \ldots, p^A_K\}</math> and <math>P^B = \{p^B_1, \ldots, p^B_K\}</math>, we first compute a one-to-one matching via the Hungarian algorithm in CIE-Lab space:

<math display="block">
\pi^* = \arg\min_{\pi \in S_K} \sum_{i=1}^{K} \|p_i^A - p_{\pi(i)}^B\|_2,
</math>

where <math>S_K</math> denotes the set of all permutations of <math>\{1,\ldots,K\}</math>. This yields a permutation <math>\pi^*</math> that minimizes the total perceptual distance between matched palette colors.
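For the small palettes used here, the minimization over all permutations can be checked by brute force, which makes the objective explicit. This sketch enumerates all K! permutations with <code>itertools.permutations</code>; an assignment solver such as <code>scipy.optimize.linear_sum_assignment</code> returns the same optimum in polynomial time, which matters as K grows. The function names and the example Lab colors are made up for illustration.

```python
import itertools
import math

def match_palettes(pal_a, pal_b):
    """Brute-force pi* = argmin_pi sum_i ||pal_a[i] - pal_b[pi(i)]||_2 over Lab tuples."""
    k = len(pal_a)
    best_perm, best_cost = None, math.inf
    for perm in itertools.permutations(range(k)):        # every element of S_K
        cost = sum(math.dist(pal_a[i], pal_b[perm[i]]) for i in range(k))
        if cost < best_cost:
            best_perm, best_cost = perm, cost
    return best_perm, best_cost

def inverse_permutation(perm):
    """pi^{-1}, used when recoloring image B toward A's palette."""
    inv = [0] * len(perm)
    for i, j in enumerate(perm):
        inv[j] = i
    return tuple(inv)

# Hypothetical Lab palettes (L*, a*, b*).
pal_a = [(50, 0, 0), (50, 60, 0), (50, 0, -60)]
pal_b = [(50, 0, -55), (52, 2, 0), (48, 58, 3)]
perm, cost = match_palettes(pal_a, pal_b)
print(perm, inverse_permutation(perm))                   # (1, 2, 0) (2, 0, 1)
```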

For each pixel <math>x</math> in image <math>A</math>, we compute soft membership weights to <math>A</math>'s palette in Lab space:

<math display="block">
w_i(x) = \frac{\exp(-\|\text{Lab}(x) - p_i^A\|_2^2 / \sigma^2)}{\sum_{j=1}^K \exp(-\|\text{Lab}(x) - p_j^A\|_2^2 / \sigma^2)},
</math>

where <math>\sigma = 10</math> controls the softness of palette assignment. Near-black and near-white pixels (<math>L^* > 90</math> or <math>L^* < 30</math>, with <math>|a^*| < 10</math> and <math>|b^*| < 10</math>) are excluded from recoloring.
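A minimal sketch of the soft membership weights and the black/white exclusion test for a single pixel given as an (L*, a*, b*) tuple, with σ = 10 as in the text. Subtracting the largest exponent before calling <code>math.exp</code> is a standard numerical-stability step that leaves the softmax unchanged. Reading the exclusion rule as requiring small |a*| and |b*| in both the bright and the dark case is our interpretation; <code>soft_weights</code> and <code>is_neutral</code> are hypothetical names.

```python
import math

def soft_weights(lab, palette_lab, sigma=10.0):
    """w_i(x): softmax over palette colors of -||Lab(x) - p_i||^2 / sigma^2."""
    logits = [-sum((x - p) ** 2 for x, p in zip(lab, color)) / sigma ** 2
              for color in palette_lab]
    top = max(logits)                         # shift for numerical stability
    exps = [math.exp(z - top) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]

def is_neutral(lab):
    """Near-white or near-black with low chroma: excluded from recoloring."""
    L, a, b = lab
    return (L > 90 or L < 30) and abs(a) < 10 and abs(b) < 10

palette = [(50, 10, 0), (50, -10, 0), (80, 0, 40)]      # hypothetical Lab palette
print(soft_weights((50, 2, 0), palette))
print(is_neutral((95, 2, -3)), is_neutral((95, 20, 0)))  # True False
```

A smaller σ makes the assignment closer to a hard nearest-color choice; a larger σ spreads each pixel's shift across more palette entries.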

For each matched palette pair <math>(p^A_i, p^B_{\pi^*(i)})</math>, we compute the HSV shift:

<math display="block">
\Delta_i = \text{HSV}(p^B_{\pi^*(i)}) - \text{HSV}(p^A_i),
</math>

with hue differences wrapped to <math>(-0.5, 0.5]</math> to ensure minimal angular distance. Each pixel's color is updated by a weighted blend of these shifts:

<math display="block">
\text{HSV}'(x) = \text{HSV}(x) + \sum_{i=1}^{K} w_i(x) \, \Delta_i,
</math>

followed by hue wrapping (<math>H' \bmod 1</math>), saturation and value clipping to <math>[0,1]</math>, and conversion back to RGB. Image <math>B</math> is recolored symmetrically using the inverse permutation <math>\pi^{*-1}</math>.
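The shift computation and the per-pixel update can be combined into a short sketch using Python's <code>colorsys</code>. It assumes palette colors are already available in HSV with every channel in [0, 1] and that the weights come from the soft-membership formula above; <code>palette_shift</code> and <code>recolor_pixel</code> are hypothetical names, not the project's functions.

```python
import colorsys

def palette_shift(hsv_a, hsv_b):
    """Delta_i = HSV(p_B) - HSV(p_A), with the hue part wrapped to (-0.5, 0.5]."""
    dh = (hsv_b[0] - hsv_a[0]) % 1.0        # now in [0, 1)
    if dh > 0.5:
        dh -= 1.0                           # go the short way around the hue circle
    return (dh, hsv_b[1] - hsv_a[1], hsv_b[2] - hsv_a[2])

def recolor_pixel(rgb, weights, deltas):
    """HSV'(x) = HSV(x) + sum_i w_i(x) Delta_i; wrap H, clip S and V, return RGB."""
    h, s, v = colorsys.rgb_to_hsv(*rgb)
    dh, ds, dv = (sum(w * d[c] for w, d in zip(weights, deltas)) for c in range(3))
    h = (h + dh) % 1.0
    s = min(max(s + ds, 0.0), 1.0)
    v = min(max(v + dv, 0.0), 1.0)
    return colorsys.hsv_to_rgb(h, s, v)

# One matched pair: green palette color -> blue palette color.
delta = palette_shift((1 / 3, 1.0, 0.8), (2 / 3, 1.0, 0.8))
print(recolor_pixel((0.0, 0.5, 0.0), [1.0], [delta]))    # a blue pixel, same brightness
```

Wrapping the hue difference before blending matters: a red-to-red shift from H = 0.95 to H = 0.05 becomes +0.1 rather than -0.9, so intermediate weights do not sweep through the whole color wheel.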

=== Direct Spatial Clustering Transfer ===

This method automatically discovers spatially coherent color regions by clustering each image in joint Lab+XY space, then transfers colors by optimally matching these implicit palette clusters across the two images.

'''Cluster discovery:'''

For each image, we build Lab+XY features for every pixel,

spatial centroid <math>\boldsymbol{m}^{xy}_k</math>, and area <math>A_k = |C_k|</math>.
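The per-cluster quantities used later (chroma mean <math>\boldsymbol{\mu}^{ab}</math>, chroma covariance <math>\boldsymbol{\Sigma}^{ab}</math>, spatial centroid, and area) can be gathered as below once every pixel has a cluster label. The clustering step itself is not reproduced in this excerpt, so the sketch takes the labels as given, and it uses the population covariance (dividing by the cluster size), which is an assumption. <code>cluster_stats</code> is a hypothetical name.

```python
def cluster_stats(ab, xy, labels):
    """Chroma mean/covariance, spatial centroid, and area for each cluster label.

    ab: list of (a*, b*) per pixel; xy: list of (x, y) per pixel;
    labels: cluster id per pixel.
    """
    groups = {}
    for z, p, k in zip(ab, xy, labels):
        groups.setdefault(k, []).append((z, p))
    stats = {}
    for k, members in groups.items():
        n = len(members)                                          # area A_k = |C_k|
        mu = [sum(z[d] for z, _ in members) / n for d in range(2)]
        cov = [[sum((z[i] - mu[i]) * (z[j] - mu[j]) for z, _ in members) / n
                for j in range(2)] for i in range(2)]             # population covariance
        centroid = [sum(p[d] for _, p in members) / n for d in range(2)]
        stats[k] = {"mu_ab": mu, "cov_ab": cov, "centroid_xy": centroid, "area": n}
    return stats

stats = cluster_stats([(0, 0), (2, 0), (10, 10)], [(0, 0), (2, 2), (5, 5)], [0, 0, 1])
print(stats[0])
```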

'''Cluster matching:'''

or an optional affine mapping in chroma (when use_affine = True):

<math display="block">
\mathbf{A}_i = \left(\boldsymbol{\Sigma}^{ab,B}_{\pi^*(i)}\right)^{\tfrac12} \left(\boldsymbol{\Sigma}^{ab,A}_i\right)^{-\tfrac12}.
</math>

affine mode:

<math display="block">
\mathbf{z}'(x) = \sum_{i=1}^{K} w_i(x) \left[\mathbf{A}_i\left(\mathbf{z}(x) - \boldsymbol{\mu}^{ab,A}_i\right) + \boldsymbol{\mu}^{ab,B}_{\pi^*(i)}\right],
</math>

We preserve lightness (<math>L</math>) to keep shading/speculars intact and form <math>\mathrm{Lab}'(x)=[L(x),\mathbf{z}'(x)]</math>, then convert to RGB with clipping. The same symmetric procedure recolors image B using clusters discovered in B and matched to A.
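The affine chroma mode reduces to 2×2 matrix algebra. For a symmetric positive-definite 2×2 matrix M, the principal square root has the closed form M^(1/2) = (M + sI)/t with s = sqrt(det M) and t = sqrt(tr M + 2s); the sketch below uses it to form <math>\mathbf{A}_i</math>, then blends the per-cluster mappings while keeping L unchanged. All names are hypothetical, and both covariances are assumed to be positive definite (no regularization is added here).

```python
import math

def sqrtm_2x2(m):
    """Principal square root of a 2x2 symmetric positive-definite matrix."""
    (a, b), (c, d) = m
    s = math.sqrt(a * d - b * c)          # sqrt(det M)
    t = math.sqrt(a + d + 2 * s)          # sqrt(trace M + 2 sqrt(det M))
    return [[(a + s) / t, b / t], [c / t, (d + s) / t]]

def inv_2x2(m):
    (a, b), (c, d) = m
    det = a * d - b * c
    return [[d / det, -b / det], [-c / det, a / det]]

def matmul_2x2(x, y):
    return [[sum(x[i][k] * y[k][j] for k in range(2)) for j in range(2)] for i in range(2)]

def affine_matrix(cov_a, cov_b):
    """A_i = Sigma_B^{1/2} Sigma_A^{-1/2} for one matched cluster pair."""
    return matmul_2x2(sqrtm_2x2(cov_b), inv_2x2(sqrtm_2x2(cov_a)))

def recolor_lab(lab, weights, mats, mu_a, mu_b):
    """Lab'(x) = [L(x), z'(x)], z'(x) = sum_i w_i [A_i (z(x) - mu_A,i) + mu_B,pi(i)].

    mu_b must already be reordered by the matching, i.e. mu_b[i] is B's mean for pi*(i).
    """
    L, a, b = lab
    z_new = [0.0, 0.0]
    for w, A, ma, mb in zip(weights, mats, mu_a, mu_b):
        dz = (a - ma[0], b - ma[1])
        for r in range(2):
            z_new[r] += w * (A[r][0] * dz[0] + A[r][1] * dz[1] + mb[r])
    return (L, z_new[0], z_new[1])          # lightness is preserved

A = affine_matrix([[4.0, 0.0], [0.0, 1.0]], [[16.0, 0.0], [0.0, 9.0]])
print(A)                                    # approximately [[2, 0], [0, 3]]
print(recolor_lab((60.0, 3.0, 4.0), [1.0], [A], [(1.0, 2.0)], [(10.0, -5.0)]))
```

With identical covariances, <code>affine_matrix</code> returns the identity and the affine mode collapses to a pure mean shift in chroma.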

'''Background preservation:'''

By default (preserve_bg=True), near-white pixels (RGB > 0.92 in all channels) are masked and restored after recoloring to preserve clean backgrounds and line art.
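The background rule amounts to a per-pixel mask. This sketch follows the description literally (recolor everything, then restore pixels whose three channels all exceed 0.92); the function name and the stand-in <code>to_black</code> recoloring are hypothetical.

```python
def recolor_preserving_background(pixels, recolor, thresh=0.92):
    """Recolor every pixel, then restore near-white ones (all RGB channels > thresh).

    pixels: list of (r, g, b) tuples in [0, 1]; recolor: any per-pixel function.
    """
    recolored = [recolor(px) for px in pixels]
    return [px if all(c > thresh for c in px) else new
            for px, new in zip(pixels, recolored)]

def to_black(px):
    """Stand-in recoloring used only for this example."""
    return (0.0, 0.0, 0.0)

print(recolor_preserving_background([(1.0, 1.0, 1.0), (0.95, 0.5, 0.95)], to_black))
# [(1.0, 1.0, 1.0), (0.0, 0.0, 0.0)]
```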

=== Convex-hull Transfer ===

*Gentle blend: mix the recolored result with the original (e.g., weighting the recolored result at 80–90%) to suppress artifacts while retaining the target palette’s look.

We apply the same procedure in the opposite direction to recolor image B.
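The gentle blend is a per-channel linear mix. This sketch assumes the 80–90% figure refers to the share of the recolored result and uses 0.85 as the default; <code>gentle_blend</code> and <code>alpha</code> are hypothetical names.

```python
def gentle_blend(original, recolored, alpha=0.85):
    """Per-channel mix alpha * recolored + (1 - alpha) * original.

    original, recolored: equally long lists of (r, g, b) tuples in [0, 1].
    alpha is the share of the recolored result (0.85 lies in the 80-90% range).
    """
    return [tuple(alpha * r + (1.0 - alpha) * o for o, r in zip(px_o, px_r))
            for px_o, px_r in zip(original, recolored)]

print(gentle_blend([(0.0, 0.2, 1.0)], [(1.0, 0.2, 0.0)], alpha=0.8))
```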

=== Neighbor Segments with Superpixel (NS-S) ===

=== Quantitative and Qualitative Results ===
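Several of the table metrics have simple closed forms. The sketch below shows the CIE76 color difference (assuming the "CIELAB" row reports Euclidean ΔE in CIELAB) and two histogram comparisons written in the same form as OpenCV's <code>HISTCMP_CORREL</code> and <code>HISTCMP_CHISQR</code>. Whether the project computed them exactly this way (bin layout, normalization, averaging over pixels) is an assumption; FID, SSIM, CIE94, CIEDE2000, and the VGG distance need more machinery and are not reproduced.

```python
import math

def delta_e76(lab1, lab2):
    """CIE76 color difference: Euclidean distance between two CIELAB colors."""
    return math.dist(lab1, lab2)

def hist_correlation(h1, h2):
    """Pearson correlation of two histograms (the form used by HISTCMP_CORREL)."""
    n = len(h1)
    m1, m2 = sum(h1) / n, sum(h2) / n
    num = sum((a - m1) * (b - m2) for a, b in zip(h1, h2))
    den = math.sqrt(sum((a - m1) ** 2 for a in h1) * sum((b - m2) ** 2 for b in h2))
    return num / den if den else 0.0      # undefined for a flat histogram; 0.0 is our choice

def hist_chi_square(h1, h2):
    """Chi-square distance sum((h1 - h2)^2 / h1) (the form used by HISTCMP_CHISQR).

    Bins where h1 is zero are skipped. The measure is not symmetric in h1 and h2.
    """
    return sum((a - b) ** 2 / a for a, b in zip(h1, h2) if a != 0)

print(delta_e76((50, 0, 0), (53, 4, 0)))                                 # 5.0
print(hist_correlation([1, 2, 3], [2, 4, 6]))                            # 1.0
print(hist_chi_square([2, 0], [1, 1]), hist_chi_square([1, 1], [2, 0]))  # 0.5 2.0
```

The asymmetry of this chi-square form is one reason the two values reported for an image pair can differ by orders of magnitude.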

==== Experiment 1 ==== | |||

{| class="wikitable" | {| class="wikitable" | ||

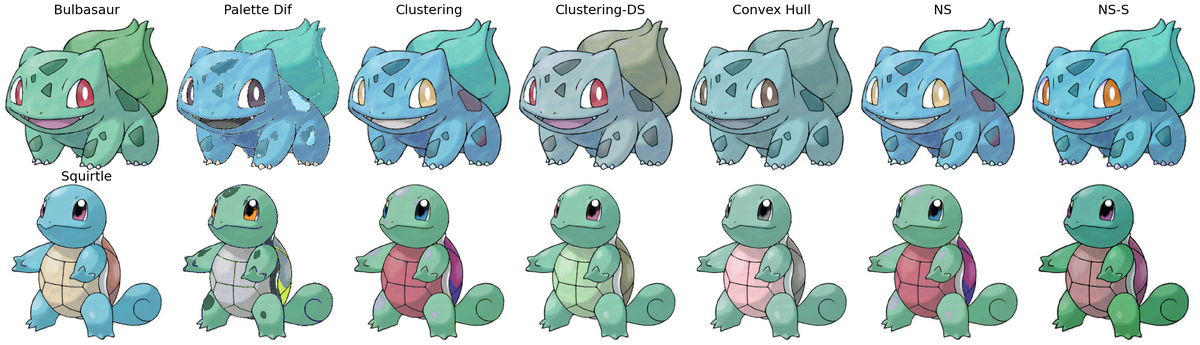

|+ Table 1: Quantitative Evaluation Results on Bulbasaur and Squirtle | |+ Table 1: Quantitative Evaluation Results on Bulbasaur and Squirtle | ||

! Metric !! Palette Dif !! Clustering !! Clustering- | ! Metric !! Palette Dif !! Clustering !! Clustering-DS !! Convex Hull !! NS !! NS-S | ||

|- | |- | ||

| FID || | | FID || 795.74/229.05 || 106.22/110.64 || '''46.78'''/61.91 || 96.12/66.19 || 107.60/108.85 || 75.15/'''42.77''' | ||

|- | |- | ||

| Histogram-Corr || 0.91/0.96 || 0.91/0.96 || 0. | | Histogram-Corr || '''0.91/0.96''' || '''0.91/0.96''' || '''0.90/0.96''' || '''0.87/0.96''' || '''0.91/0.96''' || '''0.90/0.96''' | ||

|- | |- | ||

| Histogram-Chi2 || 2. | | Histogram-Chi2 || '''2.79'''/36.67 || 8.97/'''2.35''' || 535.42/'''2.33''' || 23.28/'''2.02''' || 8.35/'''2.39''' || 6.38/9.97 | ||

|- | |- | ||

| CIELAB || 19. | | CIELAB || 19.54/14.45 || 18.32/14.88 || 13.34/10.08 || 13.03/'''8.76''' || 18.33/14.63 || 16.88/15.51 | ||

|- | |- | ||

| CIE94 || | | CIE94 || 14.82/10.72 || 13.44/11.13 || '''8.84/7.41''' || 8.78/'''6.71''' || 13.45/10.98 || 12.20/11.76 | ||

|- | |- | ||

| CIEDE2000 || | | CIEDE2000 || 13.97/10.06 || 13.10/10.97 || '''9.20/7.83''' || '''8.77/7.14''' || 13.11/10.81 || 11.82/11.20 | ||

|- | |- | ||

| SSIM || 0. | | SSIM || 0.82/0.90 || '''0.96/0.95''' || '''0.99/0.99''' || '''0.98/0.99''' || '''0.96/0.96''' || '''0.97/0.95''' | ||

|- | |- | ||

| VGG Latent Space Distance || | | VGG Latent Space Distance || 35.29/32.63 || '''15.46'''/21.98 || '''14.13'''/21.83 || '''13.73'''/'''18.18''' || '''15.76'''/22.05 || '''17.29'''/'''18.31''' | ||

|} | |} | ||

[[File:Bulbasaur_Squirtle_all_methods.png|none|thumb|1200px|Figure 1: Results of Bulbasaur and Squirtle]] | [[File:Bulbasaur_Squirtle_all_methods.png|none|thumb|1200px|Figure 1: Results of Bulbasaur and Squirtle]] | ||

==== Experiment 2 ====

{| class="wikitable"
|+ Table 2: Quantitative Evaluation Results on Bunnelby and Charmeleon
! Metric !! Palette Dif !! Clustering !! Clustering_DS !! Convex Hull !! NS !! NS-S
|-
| FID || 604.47/428.24 || 506.28/846.57 || 191.41/652.94 || '''22.94'''/'''305.28''' || 476.33/421.44 || 198.56/327.57
|-
| Histogram-Corr || 0.98/0.98 || 0.98/0.98 || -0.00/0.98 || '''0.99'''/0.98 || 0.98/0.98 || 0.98/'''0.99'''
|-
| Histogram-Chi2 || '''0.72'''/53.33 || 0.73/52.58 || 4.69/108.98 || 18.49/40.69 || 0.74/51.02 || 1.00/'''38.11'''
|-
| CIELAB || 23.98/17.02 || 16.46/16.59 || 13.57/18.01 || '''1.73'''/'''13.16''' || 18.37/16.04 || 7.03/21.23
|-
| CIE94 || 17.48/11.64 || 11.77/10.97 || 11.77/10.98 || '''1.13'''/'''5.69''' || 13.10/10.52 || 5.21/14.10
|-
| CIEDE2000 || 12.09/10.85 || 10.30/9.41 || 10.59/9.37 || '''1.23'''/'''5.44''' || 11.25/10.18 || 4.85/12.43
|-
| SSIM || 0.73/0.85 || 0.86/0.93 || 0.98/0.94 || '''0.99'''/'''0.96''' || 0.80/0.87 || 0.95/0.88
|-
| VGG Latent Space Distance || 38.70/42.80 || 34.23/37.29 || 23.12/29.51 || '''12.53'''/29.97 || 37.86/37.41 || 25.25/'''25.67'''
|}

[[File:Bunnelby_Charmeleon_all_methods.png|none|thumb|1200px|Figure 2: Results of Bunnelby and Charmeleon]]

==== Experiment 3 ==== | |||

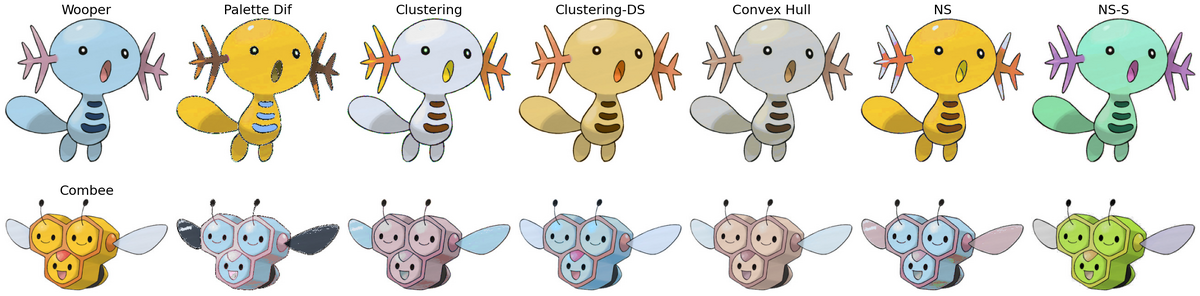

{| class="wikitable"
|+ Table 3: Quantitative Evaluation Results on Wooper and Combee
! Metric !! Palette Dif !! Clustering !! Clustering_DS !! Convex Hull !! NS !! NS-S
|-
| FID || 766.17/717.07 || 205.08/215.13 || 217.28/236.68 || 445.31/146.82 || 150.13/147.53 || '''69.81'''/'''52.21'''
|-
| Histogram-Corr || 0.95/0.98 || 0.95/0.98 || -0.00/0.00 || 0.95/0.98 || 0.95/0.98 || '''0.96'''/'''0.99'''
|-
| Histogram-Chi2 || '''0.82'''/1.20 || 1160.72/2.03 || 1.85/1118.66 || 143.71/3.60 || 1.13/'''1.10''' || 1.02/18.90
|-
| CIELAB || 32.61/27.79 || 9.69/20.75 || 39.02/23.37 || '''7.42'''/16.33 || 31.56/23.39 || 13.82/'''12.89'''
|-
| CIE94 || 19.73/18.92 || 7.00/12.61 || 31.80/14.86 || '''6.14'''/7.95 || 18.42/14.38 || 9.70/'''7.60'''
|-
| CIEDE2000 || 16.95/16.65 || 6.43/11.03 || 24.08/13.29 || '''5.95'''/'''7.06''' || 15.87/12.65 || 9.47/8.14
|-
| SSIM || 0.79/0.71 || 0.95/0.91 || 0.95/0.92 || '''0.98'''/0.93 || 0.87/0.88 || '''0.98'''/'''0.96'''
|-
| VGG Latent Space Distance || 50.05/43.90 || 23.22/27.97 || '''12.17'''/25.04 || 15.94/23.81 || 30.56/30.23 || 16.39/'''21.61'''
|}

[[File:Wooper_Combee_all_methods.png|none|thumb|1200px|Figure 3: Results of Wooper and Combee]]

==== Experiment 4 ==== | |||

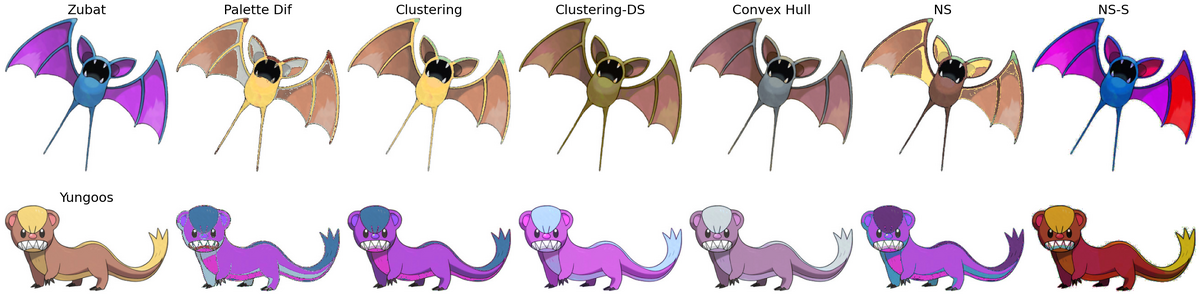

{| class="wikitable"
|+ Table 4: Quantitative Evaluation Results on Zubat and Yungoos
! Metric !! Palette Dif !! Clustering !! Clustering_DS !! Convex Hull !! NS !! NS-S
|-
| FID || 303.70/474.41 || 98.14/85.93 || 91.85/'''53.84''' || '''76.67'''/119.88 || 178.35/175.20 || 91.03/150.78
|-
| Histogram-Corr || '''1.00'''/0.96 || '''1.00'''/0.96 || '''1.00'''/0.97 || '''1.00'''/0.97 || '''1.00'''/0.97 || '''1.00'''/'''0.98'''
|-
| Histogram-Chi2 || 6.48/'''0.73''' || '''0.65'''/'''0.73''' || 1.47/0.80 || 3.81/2.61 || 1.02/'''0.73''' || 0.88/2.90
|-
| CIELAB || 23.33/30.84 || 21.80/30.68 || 20.28/27.59 || 15.11/'''13.90''' || 21.09/31.86 || '''12.43'''/15.90
|-
| CIE94 || 14.77/18.98 || 12.81/17.68 || 11.36/15.53 || 7.77/'''9.86''' || 12.72/19.12 || '''6.28'''/12.42
|-
| CIEDE2000 || 13.03/17.22 || 11.45/15.81 || 10.31/14.03 || 6.57/'''8.89''' || 11.54/17.66 || '''5.19'''/11.14
|-
| SSIM || 0.81/0.76 || 0.89/0.88 || 0.93/0.94 || '''0.96'''/'''0.97''' || 0.85/0.82 || 0.90/0.83
|-
| VGG Latent Space Distance || 40.42/42.78 || 37.38/30.40 || 24.55/27.87 || 32.95/'''23.34''' || 35.27/34.75 || '''17.47'''/38.24
|}

[[File:Zubat_Yungoos_all_methods.png|none|thumb|1200px|Figure 4: Results of Zubat and Yungoos]]

==== Experiment 5 ==== | |||

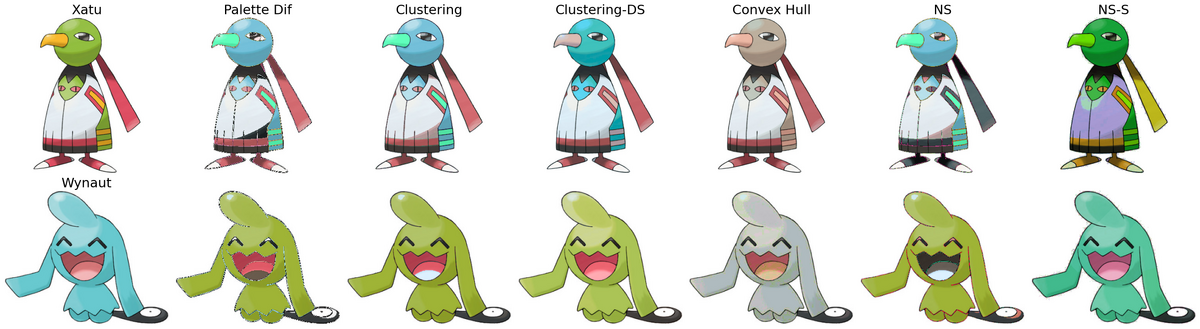

{| class="wikitable"
|+ Table 5: Quantitative Evaluation Results on Xatu and Wynaut
! Metric !! Palette Dif !! Clustering !! Clustering_DS !! Convex Hull !! NS !! NS-S
|-
| FID || 635.17/1461.28 || 141.47/259.03 || 140.36/193.12 || '''136.43'''/414.58 || 616.74/942.14 || 152.16/'''89.86'''
|-
| Histogram-Corr || '''0.99'''/0.96 || '''0.99'''/0.96 || 0.98/0.96 || '''0.99'''/0.97 || '''0.99'''/0.96 || 0.98/'''0.98'''
|-
| Histogram-Chi2 || 52.29/'''0.70''' || 49.28/0.82 || 112.63/4.12 || 73.87/17.01 || '''11.84'''/9.26 || 30.80/1.10
|-
| CIELAB || 16.27/23.28 || 13.94/22.01 || 12.41/19.99 || '''9.81'''/'''8.95''' || 19.24/24.00 || 19.51/9.59
|-
| CIE94 || 10.59/14.25 || 8.17/12.88 || 6.85/11.81 || '''4.76'''/6.34 || 12.37/14.36 || 13.41/'''6.29'''
|-
| CIEDE2000 || 9.78/12.49 || 7.83/11.43 || 6.39/10.59 || '''4.44'''/6.96 || 10.79/12.64 || 12.37/'''6.06'''
|-
| SSIM || 0.76/0.79 || 0.93/0.90 || 0.94/0.94 || '''0.97'''/0.94 || 0.83/0.86 || 0.85/'''0.97'''
|-
| VGG Latent Space Distance || 41.13/40.54 || 27.05/19.41 || 28.61/16.23 || 35.77/'''15.34''' || 32.55/24.16 || '''23.39'''/17.14
|}

[[File:Xatu_Wynaut_all_methods.png|none|thumb|1200px|Figure 5: Results of Xatu and Wynaut]]

==== Experiment 6 ====

{| class="wikitable"
|+ Table 6: Quantitative Evaluation Results on Whismur and Vulpix
! Metric !! Palette Dif !! Clustering !! Clustering_DS !! Convex Hull !! NS !! NS-S
|-
| FID || 670.79/943.51 || 287.37/460.26 || 235.06/271.43 || '''161.65'''/198.80 || 280.37/459.79 || 280.11/'''127.84'''
|-
| Histogram-Corr || 0.80/0.92 || 0.80/0.92 || 0.78/0.91 || 0.79/0.92 || '''0.82'''/0.92 || 0.81/'''0.94'''
|-
| Histogram-Chi2 || 4.78/114.91 || '''1.34'''/6.70 || 130.44/2658.28 || 112.91/449.76 || 1.47/6.69 || 56.17/'''3.07'''
|-
| CIELAB || 25.36/31.34 || 26.38/23.69 || 15.39/19.88 || '''11.04'''/'''16.62''' || 25.92/23.68 || 20.30/38.21
|-
| CIE94 || 17.65/22.71 || 18.53/16.90 || 10.85/11.73 || '''8.48'''/'''8.01''' || 18.34/16.87 || 13.58/21.73
|-
| CIEDE2000 || 15.35/19.42 || 16.42/15.10 || 10.06/11.58 || '''8.09'''/'''7.85''' || 16.30/15.08 || 12.20/20.39
|-
| SSIM || 0.85/0.63 || 0.88/0.88 || '''0.98'''/'''0.95''' || '''0.98'''/'''0.95''' || 0.88/0.88 || 0.93/0.89
|-
| VGG Latent Space Distance || 30.93/41.00 || 23.97/24.99 || '''15.14'''/22.16 || 21.43/'''17.08''' || 23.90/25.02 || 29.67/22.50
|}

[[File:Whismur_Vulpix_all_methods.png|none|thumb|1200px|Figure 6: Results of Whismur and Vulpix]]

=== Human Preference ===

We asked human volunteers to select their favorite color transfer result. The test images were generated by randomly picking a pair of Pokémon and running each algorithm on that pair.

{| class="wikitable"
|+ Table 7: Human Preference in %
! Metric !! Palette Dif !! Clustering !! Clustering_DS !! Convex Hull !! NS !! NS-S
|-
| Preference Ratio || 3.5 || 12.4 || '''30.6''' || 11.7 || 13.2 || 28.6
|}

== Conclusions ==

In this project, we explored a family of palette- and cluster-based color transfer methods for Pokémon artwork, aiming to achieve visually appealing style changes while preserving content and structure. Across six Pokémon pairs and multiple quantitative metrics, our experiments show that simple palette differencing provides a useful creative baseline. Still, more structured methods that incorporate palette geometry, spatial grouping, or cluster correspondences produce recolorings that are both more stable and more faithful to the originals.

From the quantitative results, several consistent trends emerge. The naive Palette Dif baseline typically yields the largest FID, ΔE, and VGG feature-space distances, indicating substantial shifts in both color appearance and high-level structure. In contrast, methods that explicitly align color distributions, such as Convex-hull transfer and palette-aware clustering, generally achieve much lower ΔE (CIELAB, CIE94, CIEDE2000) and higher SSIM, reflecting better preservation of Pokémon shapes, shading, and semantic details. The Convex-hull method, in particular, often attains some of the lowest perceptual color differences while still producing convincing stylizations, suggesting that preserving barycentric relationships in palette space is an effective way to constrain recoloring.

Spatially aware methods further improve robustness and visual coherence. Both Neighbor Segments (NS) and Neighbor Segments with Superpixels (NS-S) explicitly regularize neighboring regions, reducing local artifacts and preserving edges; for NS-S this shows up as SSIM scores between 0.83 and 0.98 across the six pairs, together with competitive VGG distances. NS-S often strikes a favorable balance between global palette adaptation and local smoothness, particularly on more complex or textured Pokémon, where superpixel grouping helps prevent noisy or flickering color assignments. Direct Spatial Clustering Transfer (Clustering_DS), which works without an explicit palette, demonstrates that palette-like structure can also be discovered directly in Lab+XY space; when cluster matching is stable, this method can achieve strong numerical performance, though its sensitivity to clustering hyperparameters sometimes leads to larger histogram divergences or perceptual shifts (e.g., histogram correlations of -0.00 in Experiments 2 and 3).

Taken together, our results highlight that no single metric fully captures “good” color transfer. Methods that aggressively change color (e.g., Palette Dif) can look creative but rank poorly on perceptual distance measures, whereas very conservative methods score well numerically but may feel less stylistically interesting. Evaluating with a diverse set of metrics (FID, histogram similarity, multiple ΔE formulas, SSIM, and VGG feature distances) reveals complementary aspects of quality and emphasizes the importance of balancing stylistic expressiveness with structural fidelity. The human preference study adds another view: Clustering_DS (30.6%) and NS-S (28.6%) were chosen most often, while Palette Dif was rarely preferred (3.5%). In practice, we find that geometry-aware (Convex-hull) and spatially regularized (NS-S) approaches offer the most reliable trade-off across different Pokémon pairs.

There are several promising directions for future work. First, integrating learned deep features directly into the transfer objective could better align the recolorings with human perceptual judgments than hand-designed metrics alone. Second, extending our framework to handle backgrounds, multi-Pokémon scenes, or temporal consistency in animations would make the methods more broadly applicable to fan art and game assets. Finally, adding interactive controls (e.g., user-pinned colors, region-specific constraints, or color-vision–aware sliders) could turn these algorithms into practical tools for artists and designers. Overall, our study suggests that combining compact palettes, simple geometric constraints, and lightweight spatial regularization is a powerful recipe for controllable, Pokémon-style color transfer.

== References ==

[1] Lei, Mingkun, et al. "StyleStudio: Text-Driven Style Transfer with Selective Control of Style Elements." Proceedings of the Computer Vision and Pattern Recognition Conference. 2025.

[2] Reinhard, Erik, et al. "Color transfer between images." IEEE Computer Graphics and Applications 21.5 (2001): 34-41.

[3] Lv, Chenlei, et al. "Color transfer for images: A survey." ACM Transactions on Multimedia Computing, Communications and Applications 20.8 (2024): 1-29.

[4] Ahmed, Mohiuddin, Raihan Seraj, and Syed Mohammed Shamsul Islam. "The k-means algorithm: A comprehensive survey and performance evaluation." Electronics 9.8 (2020): 1295.

[5] Wang, Y., Y. Liu, and K. Xu. "An Improved Geometric Approach for Palette-based Image Decomposition and Recoloring." Computer Graphics Forum 38 (2019): 11-22. https://doi.org/10.1111/cgf.13812

[6] Du, Zheng-Jun, Kai-Xiang Lei, Kun Xu, Jianchao Tan, and Yotam Gingold. "Video recoloring via spatial-temporal geometric palettes." ACM Transactions on Graphics 40.4 (2021): Article 150, 16 pages. https://doi.org/10.1145/3450626.3459675

== Appendix I ==

Our code is available at: https://drive.google.com/drive/folders/1aCk4Cb7wqQxMhNLclY0jbShn3LOqAL0n?usp=sharing

== Appendix II ==

Wenxiao Cai: proposal, investigation, code and experiments, report writing and presentation

Yifei Deng: proposal, investigation, code and experiments, report writing and presentation

Latest revision as of 18:06, 12 December 2025

Introduction

Color transfer is a technique that modifies the color style of an image by borrowing or reassociating colors from another source while preserving the original spatial structure and content. By separating “what the image is” from “how the image is colored,” color transfer enables creative style manipulation without altering shapes, outlines, or semantic meaning. It has been widely used in artistic stylization, recoloring, and visual design tasks due to its ability to generate visually coherent outputs from simple palette or distribution transformations.

In the context of Pokemon artwork, color transfer offers a powerful and intuitive way to design new visual styles. Pokemon are typically drawn using a small number of distinctive colors that define their character identity (e.g., Charmander’s warm oranges or Squirtle’s blues). By transferring color styles between Pokemon, we can create novel, aesthetically pleasing variations that feel natural and consistent with the Pokemon universe, while still preserving the original line art and recognizable features. In this project, we focus on two primary goals:

a. Style preservation: the recolored image should adopt the color style, mood, or chromatic distribution of another image.

b. Aesthetic quality: the recolored Pokemon should look visually pleasing and harmonious.

To achieve these goals, we apply palette-based color transfer methods to generate new Pokemon styles and systematically evaluate the results. Aesthetic quality is assessed through a human-preference survey questionnaire, while similarity to the original image is measured using quantitative metrics such as FID, SSIM, and other perceptual distance measures. Together, these evaluations allow us to study both the creativity and fidelity of color-transferred Pokemon, providing a balanced assessment of stylistic expressiveness and structural preservation.

Background

Color and Style Transfer

Style and color transfer [1] is a fundamental technique in computer vision and computational photography that aims to reinterpret an image by borrowing visual characteristics from another. Early work focused on texture synthesis and color statistics matching [2], enabling simple recoloring or pattern transfer across images. With the rise of deep learning, neural style transfer demonstrated that high-level artistic patterns, such as brushstrokes, textures, and global color mood, could be extracted from one image and blended with the structural content of another. Modern approaches further incorporate perceptual features, palette manipulation, and learned representations to achieve more faithful, controllable, and efficient transformations. These methods are now widely used in artistic rendering, photo enhancement, film post-production, augmented reality, and low-power edge-device imaging pipelines [3]. In this project, color transfer is applied to a pair of images: each image is recolored so that it adopts the color style, mood, or chromatic distribution of the other, while remaining visually pleasing to human viewers.

HSV Color Space

HSV (Hue-Saturation-Value) is a cylindrical color space that separates chromatic information from intensity, making it particularly suitable for color manipulation tasks. Unlike RGB, which mixes color and brightness, HSV represents:

- Hue (H): The color type (0-360° or 0-1 normalized), representing position on the color wheel

- Saturation (S): Color purity or intensity (0-1), from grayscale to pure color

- Value (V): Brightness (0-1), from black to full intensity

Reasons to use HSV for Color Transfer

We employ HSV alongside LAB in our pipeline for complementary purposes:

- Intuitive Color Shifts: HSV allows direct manipulation of color properties. Hue differences (ΔH) naturally represent color-to-color mappings (e.g., green → blue), while saturation and value adjustments preserve tonal relationships.

- Perceptual Separation: By decoupling chromatic content (H, S) from brightness (V), we can modify colors while preserving the shading and depth cues that define Pokémon structure.

- Gradient Preservation: When transferring between palette colors, HSV shifts maintain smooth color transitions better than direct RGB interpolation. This is critical for preserving gradients in Pokémon artwork (e.g., shading on Squirtle's shell).

- Complementary to LAB: While LAB excels at perceptual distance measurement (used in palette matching), HSV excels at color transformation. Our methods use LAB to determine which colors should map to each other, then HSV to compute how to shift pixel colors.

Implementation in Our Methods:

- Baseline & Clustering methods: Compute palette correspondence in LAB space (minimizing ΔE), then apply shifts in HSV

- Neighbor-based methods: Use HSV shifts with spatial smoothing to maintain local color coherence

- Hue wrapping: The circular nature of hue (red sits at both 0° and 360°) is handled with modulo arithmetic, $H' = (H + \Delta H) \bmod 1$ for hue normalized to $[0, 1)$.

This dual color space strategy—LAB for perception-based matching and HSV for intuitive transformation—enables both accurate palette alignment and smooth, natural-looking color transitions in the transferred Pokémon images.
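The following minimal Python sketch illustrates the HSV side of this strategy using the standard-library `colorsys` module. It assumes RGB components in [0, 1] and hue normalized to [0, 1). `shift_hue` is a hypothetical helper, not the project's code. It rotates hue with modulo arithmetic and leaves saturation and value untouched, so a light and a dark shade of the same color keep their brightness difference after recoloring.

```python
import colorsys


def shift_hue(rgb, dh):
    """Rotate the hue of an RGB color (components in [0, 1]) by dh (hue normalized to [0, 1)).

    Saturation and value are kept, so shading carried by V survives the recoloring.
    """
    h, s, v = colorsys.rgb_to_hsv(*rgb)
    h = (h + dh) % 1.0  # hue wrapping: e.g. 350 deg + 20 deg -> 10 deg
    return colorsys.hsv_to_rgb(h, s, v)


# A light and a dark green (same hue, different value), rotated toward blue by 120 degrees.
light_green = (0.4, 0.9, 0.4)
dark_green = (0.1, 0.4, 0.1)
for color in (light_green, dark_green):
    out = shift_hue(color, 120 / 360)
    print([round(c, 3) for c in out], round(colorsys.rgb_to_hsv(*out)[2], 3))
```

Both greens come out blue, and each keeps its original value (0.9 and 0.4). The shading relationship between them is therefore unchanged.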

Palette Extraction

Palette extraction is the process of analyzing an image and summarizing its millions of pixel colors into a small, representative set of key colors — known as a color palette. Instead of working directly with every pixel’s RGB value, palette extraction identifies the dominant or most perceptually important colors that define the visual appearance of the image. A palette typically contains only 4–8 colors, yet these colors capture the essential chromatic structure of the image. This compact representation removes noise, eliminates redundant colors, and preserves the underlying style of the original artwork. We implement two palette extraction methods.

K-Means

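We use K-Means [4] to cluster pixel colors and take the cluster centers as the palette. To avoid clustering millions of pixels, we quantize RGB space into 16 bins per channel. A pixel with 8-bit RGB value $(R, G, B)$ falls into the bin
$$\mathbf{q} = \left(\lfloor R/16 \rfloor,\ \lfloor G/16 \rfloor,\ \lfloor B/16 \rfloor\right).$$

For each non-empty bin $j$, we count its pixels to obtain a weight $w_j$ and compute the average Lab color $\mathbf{x}_j$ of those pixels. To avoid the randomness of standard K-Means seeding, the method uses a weighted farthest-point initialization. The bin with the largest weight becomes the first center. Each time a new center is added, every bin's weight is attenuated according to its squared Lab distance from the existing centers, so bins close to an already chosen center are unlikely to be picked again.

We then pick the bin with the largest attenuated weight as the next center. After initialization, each histogram bin $j$, with mean Lab color $\mathbf{x}_j$ and weight $w_j$, is assigned to its nearest center. Each center is then updated to the weighted mean of its assigned bins:
$$\mathbf{c}_k = \frac{\sum_{j \in S_k} w_j\, \mathbf{x}_j}{\sum_{j \in S_k} w_j},$$
where $S_k$ is the set of bins assigned to center $k$.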

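The black and white anchors remain fixed throughout. Writing $\mathbf{c}_k^{(t)}$ for center $k$ at iteration $t$, iteration stops once the centers no longer move appreciably:
$$\max_k \left\| \mathbf{c}_k^{(t+1)} - \mathbf{c}_k^{(t)} \right\|_2 < \varepsilon$$
for a small tolerance $\varepsilon$.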
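The palette-extraction steps above can be sketched in plain Python as follows. This is a minimal sketch, not the project's implementation. The attenuation factor $1 - \exp(-d^2/\sigma_a^2)$, the default $\sigma_a = 80$, the tolerance, and all function names are assumptions. Bin colors are assumed to be precomputed mean Lab values. Anchors (for example Lab black and white) take part in the assignment but are never moved or returned.

```python
import math


def bin_index(r, g, b, bins=16):
    """Map an 8-bit RGB pixel to its histogram bin (16 bins per channel -> 16 values per bin)."""
    step = 256 // bins
    return (r // step, g // step, b // step)


def dist2(p, q):
    return sum((a - b) ** 2 for a, b in zip(p, q))


def init_centers(points, weights, k, sigma_a):
    """Weighted farthest-point initialization (deterministic, no random seeding)."""
    w = list(weights)
    centers = []
    for _ in range(k):
        j = max(range(len(points)), key=lambda i: w[i])  # heaviest remaining bin
        centers.append(tuple(points[j]))
        # ASSUMED attenuation: w <- w * (1 - exp(-d^2 / sigma_a^2)); bins near a center fade out
        for i, p in enumerate(points):
            w[i] *= 1.0 - math.exp(-dist2(p, points[j]) / sigma_a ** 2)
    return centers


def weighted_kmeans(points, weights, k, anchors=(), sigma_a=80.0, tol=1e-3, max_iter=100):
    """Weighted K-means over bin colors; `anchors` stay fixed and only the k free centers are returned."""
    free = init_centers(points, weights, k, sigma_a)
    fixed = [tuple(a) for a in anchors]
    for _ in range(max_iter):
        centers = free + fixed
        sums = [[0.0] * 3 for _ in centers]
        mass = [0.0] * len(centers)
        for p, w in zip(points, weights):
            c = min(range(len(centers)), key=lambda i: dist2(p, centers[i]))  # nearest center
            mass[c] += w
            for d in range(3):
                sums[c][d] += w * p[d]
        # weighted-mean update for the free centers only; anchors are never moved
        new_free = [tuple(s / mass[i] for s in sums[i]) if mass[i] > 0 else free[i]
                    for i in range(len(free))]
        shift = max(math.sqrt(dist2(a, b)) for a, b in zip(free, new_free))
        free = new_free
        if shift < tol:  # convergence: largest center movement below the tolerance
            break
    return free
```

Working on at most $16^3$ weighted bins instead of raw pixels keeps every iteration cheap, whatever the image resolution.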

Blind Separation Palette Extraction (BSS-LLE Method)

This second method treats palette extraction as a blind unmixing problem with spatial smoothness constraints. It is computationally more expensive but yields globally coherent palettes.

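Each pixel $i$ forms a 5-D feature vector
$$\mathbf{f}_i = \left(r_i,\ g_i,\ b_i,\ \lambda x_i,\ \lambda y_i\right),$$
where $(r_i, g_i, b_i) \in [0, 1]^3$ is the pixel color, $(x_i, y_i)$ are normalized pixel coordinates, and $\lambda$ controls spatial smoothness. For each pixel, we find its $K$ nearest neighbors $\mathcal{N}(i)$ in feature space and compute LLE weights by solving
$$\min_{\{a_{ij}\}} \Big\| \mathbf{f}_i - \sum_{j \in \mathcal{N}(i)} a_{ij}\, \mathbf{f}_j \Big\|^2 \quad \text{s.t.} \quad \sum_{j \in \mathcal{N}(i)} a_{ij} = 1.$$

Collecting these weights into a sparse matrix $A$ (with $a_{ij} = 0$ for $j \notin \mathcal{N}(i)$), we construct the Laplacian
$$L = (I - A)^\top (I - A).$$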
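Below is a minimal NumPy sketch of the LLE step. It assumes the 5-D features are already stacked as rows of an array. The weights solve the local sum-to-one least-squares problem through the usual regularized Gram-matrix system. The Laplacian is assembled as $L = (I - A)^\top (I - A)$ from the weight matrix $A$. Function names, the regularization constant, and the use of dense matrices are illustrative choices; a real image would need sparse matrices and a faster neighbor search.

```python
import numpy as np


def lle_weights(f, neighbors, reg=1e-3):
    """Sum-to-one weights that best reconstruct feature f from its K neighbors (rows)."""
    z = neighbors - f                        # center the neighborhood on f
    gram = z @ z.T                           # local K x K Gram matrix
    k = len(neighbors)
    gram = gram + reg * max(np.trace(gram), 1e-12) * np.eye(k)  # regularize: singular when K > dim
    w = np.linalg.solve(gram, np.ones(k))
    return w / w.sum()                       # enforce sum_j a_ij = 1


def lle_laplacian(features, k):
    """Dense L = (I - A)^T (I - A); fine for a toy example, use sparse matrices for real images."""
    n = len(features)
    A = np.zeros((n, n))
    for i in range(n):
        d = np.sum((features - features[i]) ** 2, axis=1)
        d[i] = np.inf                        # a pixel is not its own neighbor
        nbrs = np.argsort(d)[:k]
        A[i, nbrs] = lle_weights(features[i], features[nbrs])
    I = np.eye(n)
    return (I - A).T @ (I - A)
```

Every row of $A$ sums to one, so $L\mathbf{1} = 0$. Constant weight fields therefore incur no smoothness penalty; only variation between neighboring pixels does.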

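We assume each pixel's color can be expressed as a mixture of palette colors:
$$\mathbf{x}_i \approx \sum_{k=1}^{K} w_{ik}\, \mathbf{c}_k, \qquad \text{i.e.,} \quad X \approx WC,$$
where $X$ stacks the pixel colors $\mathbf{x}_i$ as rows, $w_{ik}$ are the mixture weights (the entries of $W$), and $\mathbf{c}_k$ are the palette colors (the rows of $C$). We minimize the reconstruction error together with a Laplacian smoothness term on the weights,
$$E(W, C) = \left\| X - WC \right\|_F^2 + \gamma\, \operatorname{tr}\!\left(W^\top L W\right),$$
where $\gamma$ balances the two terms. Sparsity of $W$ is imposed separately through the thresholding schedule below.

The optimization uses alternating minimization:

1. Update $W$ (closed-form linear system).

2. Hard-threshold $W$ to enforce sparsity.

3. Update $C$ by solving the least-squares problem $C = \arg\min_{C} \|X - WC\|_F^2 = (W^\top W)^{-1} W^\top X$.

4. Increase $\beta$ to gradually enforce sparsity (continuation method).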

The learned palette colors may lie off the manifold of colors that actually occur in the image. Each palette color is therefore replaced by the mean of its nearest real RGB pixels, which keeps the palette interpretable and ensures consistent color reproduction.
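Two of the steps above are easy to isolate. With the mixture weights $W$ fixed, the palette update is an ordinary least-squares solve. The final snapping step replaces each palette color by the mean of its nearest image colors. The NumPy sketch below shows both, plus a hard-threshold helper. The threshold value, the neighbor count `m`, and the function names are assumptions, and the closed-form $W$ update is not shown.

```python
import numpy as np


def update_palette(X, W):
    """C-step: least-squares palette C minimizing ||X - W C||_F^2 (X: N x 3, W: N x K)."""
    C, *_ = np.linalg.lstsq(W, X, rcond=None)
    return C


def hard_threshold(W, thresh):
    """Sparsity step: zero out small mixture weights (threshold value is an assumption)."""
    return np.where(np.abs(W) < thresh, 0.0, W)


def snap_palette(C, pixels, m=20):
    """Replace each learned palette color by the mean of its m nearest real pixel colors."""
    snapped = []
    for c in C:
        d = np.sum((pixels - c) ** 2, axis=1)
        nearest = np.argsort(d)[:m]
        snapped.append(pixels[nearest].mean(axis=0))
    return np.array(snapped)
```

The snapping step trades a little reconstruction accuracy for palette colors that are guaranteed to come from the image itself.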

Final Output

Both methods return a palette $P = \{\mathbf{c}_1, \dots, \mathbf{c}_K\}$ of $K$ representative colors.

Methods

In this section, we describe the methods we use to transfer colors between Pokémon.

Baseline: Palette Based Random Transfer

Palette-based random transfer works by first extracting a compact color palette from each image, then randomly matching colors between the two palettes to generate a playful and diverse recoloring. Instead of enforcing a strict one-to-one correspondence or optimizing for perceptual similarity, the method randomly permutes or samples palette colors and maps all pixels associated with a source palette color to a randomly chosen target palette color. This allows the transferred image to preserve structural details while producing vivid, surprising, and stylistically varied recolorings. Because it operates only on palette colors rather than individual pixels, palette-based random transfer is fast, interpretable, and ideal for generating creative variations in tasks like Pokemon color stylization. We use Palette-based random transfer as the baseline.
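Here is a minimal plain-Python sketch of the random baseline. It assumes RGB tuples in [0, 1] and palettes that are already extracted. The text above does not specify exactly how pixels associated with a palette color are recolored. This sketch assumes each pixel is shifted by the difference between its randomly paired target color and its nearest source color, which keeps shading within each palette region, and then clipped. All names are hypothetical.

```python
import random


def nearest_index(color, palette):
    """Index of the palette color closest to `color` (squared Euclidean distance)."""
    return min(range(len(palette)),
               key=lambda k: sum((a - b) ** 2 for a, b in zip(color, palette[k])))


def random_palette_transfer(pixels, src_pal, tgt_pal, seed=0):
    """Randomly pair source and target palette colors, then shift each pixel by its pair's difference.

    ASSUMPTION: a pixel p near source color s becomes p + (t - s), clipped to [0, 1].
    """
    rng = random.Random(seed)          # seeded so a given "random" recoloring is reproducible
    perm = list(range(len(tgt_pal)))
    rng.shuffle(perm)                  # random source -> target palette correspondence
    out = []
    for p in pixels:
        k = nearest_index(p, src_pal)
        s, t = src_pal[k], tgt_pal[perm[k % len(perm)]]
        out.append(tuple(min(1.0, max(0.0, pc + tc - sc)) for pc, tc, sc in zip(p, t, s)))
    return out, perm
```

Changing `seed` produces a different permutation and hence a different recoloring. This is the source of the baseline's variety.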

Neighbor Segments

The Neighbor Segments (NS) method groups pixels into local, perceptually coherent regions and uses neighborhood relationships to guide smooth and consistent recoloring. Instead of treating each pixel independently, the image is first segmented into small color-coherent regions. Each segment is a set of spatially adjacent pixels with similar color statistics. Let the image be segmented into $\{S_1, \dots, S_N\}$. These segments form a neighborhood graph $G = (\mathcal{V}, \mathcal{E})$, where an undirected edge $(i, j) \in \mathcal{E}$ indicates that segments $S_i$ and $S_j$ touch in the spatial domain. The adjacency matrix of the graph satisfies
$$A_{ij} = \begin{cases} 1, & (i, j) \in \mathcal{E}, \\ 0, & \text{otherwise.} \end{cases}$$

During color transfer, each segment is assigned one palette color. Let $P = \{\mathbf{p}_1, \dots, \mathbf{p}_K\}$ be the target palette, and let $\mathbf{c}_i$ denote the transferred color assigned to segment $S_i$. The NS method encourages smoothness by minimizing a neighborhood-consistency energy
$$E_{\text{smooth}} = \sum_{(i,j) \in \mathcal{E}} w_{ij} \left\| \mathbf{c}_i - \mathbf{c}_j \right\|^2,$$

where $w_{ij}$ is a weight encoding the similarity of the two segments (e.g., based on Lab difference or boundary strength). This term penalizes large color differences between adjacent segments, preventing abrupt color transitions and blocky artifacts. At the same time, the transferred color of each segment should remain close to the palette color $\hat{\mathbf{c}}_i \in P$ that the palette mapping rule assigns to it.

The final transferred colors are obtained by minimizing the combined objective
$$E = \sum_{i=1}^{N} \left\| \mathbf{c}_i - \hat{\mathbf{c}}_i \right\|^2 + \lambda \sum_{(i,j) \in \mathcal{E}} w_{ij} \left\| \mathbf{c}_i - \mathbf{c}_j \right\|^2,$$

where $\lambda$ controls the strength of neighborhood smoothing. This neighborhood-aware propagation allows the algorithm to maintain structural consistency, preserve texture boundaries, and generate recolorings that are both stable and visually coherent. Overall, Neighbor Segments provides a lightweight way to incorporate spatial smoothness into palette-based transfer, producing natural transitions while keeping computation efficient.
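The objective can be written in self-contained notation. Let $\mathbf{t}_i$ be a segment's palette target, $\mathbf{c}_i$ its transferred color, $w_{ij}$ the edge weights, and $\lambda$ the smoothing strength. The objective $\sum_i \|\mathbf{c}_i - \mathbf{t}_i\|^2 + \lambda \sum_{(i,j)} w_{ij}\|\mathbf{c}_i - \mathbf{c}_j\|^2$ is quadratic. Setting its gradient to zero gives $\mathbf{c}_i (1 + \lambda \sum_j w_{ij}) = \mathbf{t}_i + \lambda \sum_j w_{ij}\, \mathbf{c}_j$ for every segment. The plain-Python sketch below solves this system by Gauss-Seidel sweeps. It assumes segments, targets, and edge weights are given, and it is one possible solver, not necessarily the one used in the project.

```python
def smooth_segment_colors(targets, edges, lam=1.0, iters=200):
    """Minimize sum_i |c_i - t_i|^2 + lam * sum_(i,j) w_ij |c_i - c_j|^2 by Gauss-Seidel sweeps.

    targets: per-segment palette colors (tuples); edges: (i, j, w_ij) for touching segments.
    """
    n = len(targets)
    nbrs = [[] for _ in range(n)]
    for i, j, w in edges:              # undirected graph: store each edge on both endpoints
        nbrs[i].append((j, w))
        nbrs[j].append((i, w))
    colors = [list(t) for t in targets]
    for _ in range(iters):
        for i in range(n):
            wsum = sum(w for _, w in nbrs[i])
            for d in range(len(colors[i])):
                # zero-gradient condition: c_i (1 + lam * sum_j w_ij) = t_i + lam * sum_j w_ij c_j
                pull = sum(w * colors[j][d] for j, w in nbrs[i])
                colors[i][d] = (targets[i][d] + lam * pull) / (1.0 + lam * wsum)
    return [tuple(c) for c in colors]
```

Consider two touching segments with targets 0 and 3 in the first channel, $\lambda = 1$ and $w = 1$. The sweeps converge to 1 and 2: each color moves a third of the way toward its neighbor's target.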

Clustering-based palette transfer

This method leverages the K-means-extracted palettes (clusters of representative colors) and establishes an optimal correspondence between them before applying spatially-weighted color shifts.

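Given extracted palettes $P^A = \{\mathbf{p}^A_1, \dots, \mathbf{p}^A_K\}$ and $P^B = \{\mathbf{p}^B_1, \dots, \mathbf{p}^B_K\}$, we first compute a one-to-one matching via the Hungarian algorithm in CIE-Lab space:
$$\pi^* = \arg\min_{\pi \in \mathfrak{S}_K} \sum_{k=1}^{K} \left\| \mathbf{p}^A_k - \mathbf{p}^B_{\pi(k)} \right\|_2,$$
where $\mathfrak{S}_K$ denotes the set of all permutations of $\{1, \dots, K\}$. This yields the permutation $\pi^*$ that minimizes the total perceptual distance between matched palette colors.

For each pixel $\mathbf{x}$ in image $A$, we compute soft membership weights to $A$'s palette in Lab space:
$$\alpha_k(\mathbf{x}) = \frac{\exp\!\left(-\|\mathbf{x} - \mathbf{p}^A_k\|^2 / \sigma^2\right)}{\sum_{j=1}^{K} \exp\!\left(-\|\mathbf{x} - \mathbf{p}^A_j\|^2 / \sigma^2\right)},$$
where $\sigma$ controls the softness of the palette assignment. Near-black and near-white pixels, identified by lightness thresholds, are excluded from recoloring.

For each matched palette pair $(\mathbf{p}^A_k, \mathbf{p}^B_{\pi^*(k)})$, we compute the HSV shift
$$\Delta_k = (\Delta H_k, \Delta S_k, \Delta V_k) = \operatorname{HSV}(\mathbf{p}^B_{\pi^*(k)}) - \operatorname{HSV}(\mathbf{p}^A_k),$$
with hue differences wrapped to $[-0.5, 0.5)$ (hue normalized to $[0, 1)$) to ensure minimal angular distance. Each pixel's color is updated by a weighted blend of these shifts,
$$\operatorname{HSV}'(\mathbf{x}) = \operatorname{HSV}(\mathbf{x}) + \sum_{k=1}^{K} \alpha_k(\mathbf{x})\, \Delta_k,$$
followed by hue wrapping ($H' \bmod 1$), clipping of saturation and value to $[0, 1]$, and conversion back to RGB. Image $B$ is recolored symmetrically using the inverse permutation $(\pi^*)^{-1}$.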
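The matching and blending steps can be sketched in plain Python as below. The sketch assumes palettes are given both in Lab (for matching and soft weights) and in RGB in [0, 1] (for HSV shifts). It makes two deliberate substitutions, and it omits the black/white exclusion.

- Exhaustive search over permutations stands in for the Hungarian algorithm. It returns the same optimum and is cheap for 4 to 8 colors; `scipy.optimize.linear_sum_assignment` is the usual library routine for larger problems.
- The Gaussian form of the soft weights, with `sigma=20`, is an assumption.

Function names are hypothetical.

```python
import colorsys
import itertools
import math


def match_palettes(pal_a_lab, pal_b_lab):
    """Exhaustive search for the permutation minimizing total Lab distance (same optimum as Hungarian)."""
    k = len(pal_a_lab)
    cost = [[math.dist(a, b) for b in pal_b_lab] for a in pal_a_lab]
    best = min(itertools.permutations(range(len(pal_b_lab)), k),
               key=lambda p: sum(cost[i][p[i]] for i in range(k)))
    return list(best)


def wrap_hue(dh):
    """Wrap a normalized hue difference into [-0.5, 0.5) (shortest way around the wheel)."""
    return (dh + 0.5) % 1.0 - 0.5


def soft_weights(lab, pal_lab, sigma=20.0):
    """ASSUMED Gaussian softmax over Lab distances; sigma sets the softness."""
    logits = [-math.dist(lab, p) ** 2 / sigma ** 2 for p in pal_lab]
    top = max(logits)
    e = [math.exp(x - top) for x in logits]   # subtract the max for numerical stability
    total = sum(e)
    return [x / total for x in e]


def recolor_pixel(rgb, lab, pal_a_rgb, pal_a_lab, pal_b_rgb, perm, sigma=20.0):
    """Blend the per-pair HSV shifts (palette A -> matched palette B) for one pixel."""
    alpha = soft_weights(lab, pal_a_lab, sigma)
    h, s, v = colorsys.rgb_to_hsv(*rgb)
    for a_k, (k, j) in zip(alpha, enumerate(perm)):
        ha, sa, va = colorsys.rgb_to_hsv(*pal_a_rgb[k])
        hb, sb, vb = colorsys.rgb_to_hsv(*pal_b_rgb[j])
        h += a_k * wrap_hue(hb - ha)
        s += a_k * (sb - sa)
        v += a_k * (vb - va)
    h %= 1.0                                               # hue wrapping
    s, v = min(max(s, 0.0), 1.0), min(max(v, 0.0), 1.0)    # clip S and V to [0, 1]
    return colorsys.hsv_to_rgb(h, s, v)
```

Recoloring image $B$ uses the same functions with the roles of the palettes swapped and the inverse permutation.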

Direct Spatial Clustering Transfer

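This method automatically discovers spatially coherent color regions by clustering each image in joint Lab+XY space. It then transfers colors by optimally matching these implicit palette clusters across the two images.

Cluster discovery: For each image, we build a Lab+XY feature for every pixel,
$$\mathbf{f} = \left(L^*,\ a^*,\ b^*,\ s\,\tfrac{x}{W},\ s\,\tfrac{y}{H}\right),$$
where $(x, y)$ is the pixel coordinate, $W \times H$ are the image dimensions, and $s$ (the xy_scale) balances spatial locality against color. K-means on these features yields clusters $\{\mathcal{C}_1, \dots, \mathcal{C}_K\}$. For each cluster $\mathcal{C}_k$, we store:

- mean chroma $\boldsymbol{\mu}_k \in \mathbb{R}^2$ (the mean $(a^*, b^*)$ of its pixels),

- chroma covariance $\Sigma_k \in \mathbb{R}^{2 \times 2}$,

- spatial centroid $\mathbf{s}_k$, and area $n_k = |\mathcal{C}_k|$.

Cluster matching:

Let superscripts $A$ and $B$ denote the two images. We define a matching cost between cluster $k$ in $A$ and cluster $l$ in $B$:
$$D_{kl} = w_c \left\| \boldsymbol{\mu}^A_k - \boldsymbol{\mu}^B_l \right\|_2 + w_s \left\| \mathbf{s}^A_k - \mathbf{s}^B_l \right\|_2 + w_a \left| \frac{n^A_k}{N^A} - \frac{n^B_l}{N^B} \right|,$$

where $w_c$, $w_s$, and $w_a$ weight color, spatial proximity, and relative area, and $N^A$, $N^B$ are the pixel counts of the two images. A one-to-one correspondence $\pi$ is obtained by minimizing the total cost $\sum_k D_{k\pi(k)}$ with the Hungarian algorithm.

Per-pixel soft assignment: Each pixel in image $A$ with chroma $\mathbf{z} = (a^*, b^*)$ gets soft memberships to $A$'s clusters through a temperatured softmax in chroma space,
$$r_k(\mathbf{z}) = \frac{\exp\!\left(-\tau \|\mathbf{z} - \boldsymbol{\mu}^A_k\|^2\right)}{\sum_{j=1}^{K} \exp\!\left(-\tau \|\mathbf{z} - \boldsymbol{\mu}^A_j\|^2\right)},$$
where $\tau$ (soft_tau) controls how sharply pixels commit to a single cluster. Larger $\tau$ gives crisper assignments; smaller $\tau$ gives smoother blends.

Cluster-pair color mapping: For each matched pair $(k, \pi(k))$ we define either a mean shift in chroma,
$$\mathbf{t}_k(\mathbf{z}) = \mathbf{z} + \left(\boldsymbol{\mu}^B_{\pi(k)} - \boldsymbol{\mu}^A_k\right),$$

or, when use_affine = True, an affine mapping in chroma,
$$\mathbf{t}_k(\mathbf{z}) = \boldsymbol{\mu}^B_{\pi(k)} + M_k \left(\mathbf{z} - \boldsymbol{\mu}^A_k\right),$$
where $M_k$ is a $2 \times 2$ matrix derived from the cluster covariances $\Sigma^A_k$ and $\Sigma^B_{\pi(k)}$.

Pixel update and reconstruction: For a pixel in $A$ with chroma vector $\mathbf{z}$, we blend the cluster-pair mappings:

- mean-shift mode: $\mathbf{z}' = \mathbf{z} + \sum_k r_k(\mathbf{z}) \left(\boldsymbol{\mu}^B_{\pi(k)} - \boldsymbol{\mu}^A_k\right)$,

- affine mode: $\mathbf{z}' = \sum_k r_k(\mathbf{z})\, \mathbf{t}_k(\mathbf{z})$.

We preserve lightness ($L^*$) to keep shading and specular highlights intact, form $(L^*, \mathbf{z}')$, and convert back to RGB with clipping. The same procedure, applied symmetrically, recolors image $B$ using clusters discovered in $B$ and matched to $A$.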

Background preservation: By default (preserve_bg=True), near-white pixels (RGB > 0.92 in all channels) are masked and restored after recoloring to preserve clean backgrounds and line art.
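The NumPy sketch below covers three pieces of this method: the Lab+XY features, a matching-cost matrix, and the mean-shift recoloring with a temperatured softmax. It makes several assumptions:

- The image is already converted to Lab.
- K-means clustering and the Hungarian matching are done elsewhere, so `mu_b_matched` holds B's cluster mean chromas reordered to match A's clusters.
- The equal cost weights are placeholders.

Only the parameter names `xy_scale` and `soft_tau` come from the description above. The background mask is not shown.

```python
import numpy as np


def lab_xy_features(lab, xy_scale=1.0):
    """Per-pixel (L*, a*, b*, s*x/W, s*y/H) features for an H x W x 3 Lab image."""
    h, w, _ = lab.shape
    ys, xs = np.mgrid[0:h, 0:w]
    xy = np.stack([xs / w, ys / h], axis=-1) * xy_scale
    return np.concatenate([lab, xy], axis=-1).reshape(-1, 5)


def cluster_cost(mu_a, s_a, area_a, mu_b, s_b, area_b, w_c=1.0, w_s=1.0, w_a=1.0):
    """K x K matching cost from chroma means, spatial centroids, and relative areas."""
    d_color = np.linalg.norm(mu_a[:, None] - mu_b[None], axis=-1)
    d_space = np.linalg.norm(s_a[:, None] - s_b[None], axis=-1)
    d_area = np.abs(area_a[:, None] / area_a.sum() - area_b[None] / area_b.sum())
    return w_c * d_color + w_s * d_space + w_a * d_area


def mean_shift_chroma(lab, mu_a, mu_b_matched, soft_tau=0.05):
    """Mean-shift mode: z' = z + sum_k r_k(z) (mu^B_pi(k) - mu^A_k); L* is left untouched."""
    ab = lab[..., 1:].reshape(-1, 2)
    d2 = ((ab[:, None, :] - mu_a[None, :, :]) ** 2).sum(-1)   # N x K squared chroma distances
    logits = -soft_tau * d2
    logits -= logits.max(axis=1, keepdims=True)              # stabilize the softmax
    r = np.exp(logits)
    r /= r.sum(axis=1, keepdims=True)                         # soft memberships r_k(z)
    out = lab.astype(float)
    out[..., 1:] = (ab + r @ (mu_b_matched - mu_a)).reshape(lab.shape[:-1] + (2,))
    return out
```

Because the memberships sum to one, the blended shift is a convex combination of the per-cluster shifts. A pixel halfway between two clusters therefore receives a halfway shift instead of a hard jump.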

Convex-hull Transfer

Convex-hull transfer treats each image’s palette as a convex set in color space. It transfers colors by preserving a pixel’s barycentric coordinates with respect to its source palette while mapping those coordinates onto the target palette.

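Palette alignment: Given palettes $P^A$ and $P^B$ (in RGB or Lab), we first compute a one-to-one correspondence with a Hungarian assignment that minimizes the total Lab distance:
$$\pi^* = \arg\min_{\pi} \sum_{k} \left\| \mathbf{p}^A_k - \mathbf{p}^B_{\pi(k)} \right\|_2.$$
This permutes $P^B$ to best match $P^A$ perceptually.

Inside-hull mapping (barycentric coordinates): Let $\mathbf{x}$ be a pixel color (as a 3-vector) in image A. If $\mathbf{x}$ lies in the convex hull $\operatorname{conv}(P^A)$, we find a simplex containing it (e.g., a Delaunay triangle or tetrahedron) with vertices $\mathbf{p}^A_{i_1}, \dots, \mathbf{p}^A_{i_m}$ ($m = 3$ in 2D projections, $m = 4$ in RGB). We compute barycentric weights $\lambda_j$ such that
$$\mathbf{x} = \sum_{j=1}^{m} \lambda_j\, \mathbf{p}^A_{i_j}, \qquad \lambda_j \ge 0, \quad \sum_{j=1}^{m} \lambda_j = 1.$$
We then map $\mathbf{x}$ to the target palette by reusing the same weights on the matched vertices:
$$\mathbf{x}' = \sum_{j=1}^{m} \lambda_j\, \mathbf{p}^B_{\pi^*(i_j)}.$$

Outside-hull fallback (local simplex projection): If $\mathbf{x}$ lies outside $\operatorname{conv}(P^A)$, we select its $m$ nearest palette colors (e.g., 3 to 5 neighbors). We then solve a constrained least-squares problem to obtain the convex combination that best approximates $\mathbf{x}$:
$$\boldsymbol{\lambda}^* = \arg\min_{\boldsymbol{\lambda}} \Big\| \mathbf{x} - \sum_{j=1}^{m} \lambda_j\, \mathbf{p}^A_{i_j} \Big\|^2 \quad \text{s.t.} \quad \lambda_j \ge 0, \ \sum_{j} \lambda_j = 1.$$
The solution (via simplex projection) yields weights that we again transfer to the matched targets, $\mathbf{x}' = \sum_j \lambda^*_j\, \mathbf{p}^B_{\pi^*(i_j)}$.

Reconstruction and safeguards: To preserve shading and highlights, we keep the lightness channel of $\mathbf{x}$ and replace only its chroma:
$$\mathbf{x}_{\text{out}} = \left(L^*(\mathbf{x}),\ a^*(\mathbf{x}'),\ b^*(\mathbf{x}')\right).$$
We apply practical guards for stability: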

- Background/line protection: skip updates for near-white/near-black or very low-chroma pixels.

- Chroma clamp: cap the chroma $C^* = \sqrt{a^{*2} + b^{*2}}$ of the result to avoid oversaturation.

- Gentle blend: mix the recolored result with the original (e.g., 80–90%) to suppress artifacts while retaining the target palette’s look.

We apply the same procedure in the opposite direction to recolor image B.
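The inside-hull case reduces to barycentric interpolation. Below is a plain-Python sketch in a 2D chroma plane, one of the projections mentioned above. Function names are hypothetical. For points outside the source triangle the sketch raises an error instead of implementing the constrained least-squares fallback.

```python
def barycentric(p, a, b, c):
    """Barycentric coordinates of 2D point p in triangle (a, b, c), e.g. in the (a*, b*) plane."""
    (x, y), (x1, y1), (x2, y2), (x3, y3) = p, a, b, c
    det = (y2 - y3) * (x1 - x3) + (x3 - x2) * (y1 - y3)
    l1 = ((y2 - y3) * (x - x3) + (x3 - x2) * (y - y3)) / det
    l2 = ((y3 - y1) * (x - x3) + (x1 - x3) * (y - y3)) / det
    return l1, l2, 1.0 - l1 - l2


def transfer_in_triangle(p, src_tri, tgt_tri):
    """Reuse p's weights w.r.t. the source triangle on the matched target triangle."""
    lams = barycentric(p, *src_tri)
    if min(lams) < -1e-9:
        raise ValueError("point lies outside the source simplex; use the outside-hull fallback")
    return tuple(sum(l * v[d] for l, v in zip(lams, tgt_tri)) for d in range(2))
```

Reusing the weights means a pixel at a palette vertex maps exactly to the matched target color. A pixel between palette colors keeps its relative position between the matched targets.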

Neighbor Segments with Superpixel (NS-S)

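The Neighbor Segments with Superpixels (NS-S) method extends palette-based color transfer by incorporating spatially coherent superpixel regions. Instead of operating on individual pixels, the image is first partitioned into superpixels. Each superpixel is a compact region of adjacent pixels with similar color and texture characteristics. Let the superpixel segmentation produce $\{R_1, \dots, R_N\}$, where each superpixel $R_i$ is treated as a unit for color assignment. A neighborhood graph $G = (\mathcal{V}, \mathcal{E})$ is constructed, where an edge $(i, j) \in \mathcal{E}$ indicates that superpixels $R_i$ and $R_j$ touch or share a boundary in the image plane. The adjacency matrix is defined as
$$A_{ij} = \begin{cases} 1, & (i, j) \in \mathcal{E}, \\ 0, & \text{otherwise.} \end{cases}$$

Given a target palette $P = \{\mathbf{p}_1, \dots, \mathbf{p}_K\}$, each superpixel $R_i$ receives a transferred color $\mathbf{c}_i$. This color is guided by the intended color $\hat{\mathbf{c}}_i$ produced by a palette-matching function (e.g., hard assignment, soft matching, or nearest palette color).

To ensure smooth and visually coherent recoloring across the image, the NS-S method minimizes the following energy:
$$E = \sum_{i=1}^{N} \left\| \mathbf{c}_i - \hat{\mathbf{c}}_i \right\|^2 + \lambda \sum_{(i,j) \in \mathcal{E}} w_{ij} \left\| \mathbf{c}_i - \mathbf{c}_j \right\|^2,$$

where:

- $\sum_i \|\mathbf{c}_i - \hat{\mathbf{c}}_i\|^2$ encourages each superpixel to adopt its intended palette color,

- $\sum_{(i,j) \in \mathcal{E}} w_{ij} \|\mathbf{c}_i - \mathbf{c}_j\|^2$ enforces spatial smoothness between adjacent superpixels,

- $w_{ij}$ weights the similarity between neighboring superpixels (e.g., based on color difference, gradient magnitude, or Lab distance),

- $\lambda$ controls the strength of neighborhood smoothing.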

By working at the superpixel level, NS-S effectively reduces noise, prevents pixel-level flicker, and maintains clean boundaries between meaningful regions. The neighborhood smoothing further prevents abrupt color jumps, producing recolored images that maintain Pokémon shapes, shading, and visual consistency while still allowing strong palette-driven stylistic changes.

Overall, the NS-S formulation provides a computationally efficient and perceptually stable approach for palette-based color transfer, balancing stylization with structural fidelity.
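Building the superpixel graph is mostly bookkeeping. The plain-Python sketch below derives the undirected edges from a superpixel label map using 4-connectivity. It then assigns each edge a similarity weight from the superpixels' mean Lab colors. The Gaussian weighting and `sigma` are assumptions; the text only says $w_{ij}$ may depend on color difference, gradient magnitude, or Lab distance. The label map would come from a superpixel algorithm such as SLIC, which is not shown.

```python
import math


def superpixel_adjacency(labels):
    """Undirected edges (i, j) with i < j between superpixels that touch (4-connectivity)."""
    edges = set()
    h, w = len(labels), len(labels[0])
    for y in range(h):
        for x in range(w):
            for ny, nx in ((y, x + 1), (y + 1, x)):      # right and down neighbors cover every pair once
                if ny < h and nx < w and labels[ny][nx] != labels[y][x]:
                    a, b = labels[y][x], labels[ny][nx]
                    edges.add((min(a, b), max(a, b)))
    return edges


def edge_weights(edges, mean_lab, sigma=10.0):
    """ASSUMED weighting: w_ij = exp(-|m_i - m_j|^2 / (2 sigma^2)) on mean Lab colors."""
    return {(i, j): math.exp(-math.dist(mean_lab[i], mean_lab[j]) ** 2 / (2 * sigma ** 2))
            for i, j in edges}
```

With this choice, similar-looking neighbors get weights near 1 and are smoothed strongly. Neighbors across a strong color edge get small weights, which keeps the boundary crisp.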

Results

Evaluation Metrics

We quantitatively evaluate our color-transfer methods by comparing the original Pokémon image and the recolored result using several complementary metrics.

Fréchet Inception Distance (FID)

Fréchet Inception Distance (FID) measures the perceptual similarity between two images by comparing their deep feature distributions. Instead of relying on pixel-level differences, FID uses the Inception-V3 network to extract high-level feature embeddings that represent shapes, textures, and semantic content. For both the original and recolored images, we compute the mean (μ) and covariance (Σ) of these feature activations, and then measure the Fréchet distance between the two resulting Gaussian distributions.

A lower FID value indicates that the recolored image is closer to the original in perceptual and semantic structure. A value of 0 signifies perfectly matched feature distributions. FID is widely used in image generation and style-transfer research to assess the realism and fidelity of transformed images.
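The Fréchet distance between two Gaussians with means $\mu_1, \mu_2$ and covariances $\Sigma_1, \Sigma_2$ is $\lVert \mu_1 - \mu_2 \rVert_2^2 + \operatorname{Tr}\big(\Sigma_1 + \Sigma_2 - 2(\Sigma_1 \Sigma_2)^{1/2}\big)$. A NumPy sketch of this final step follows. It assumes the Inception-V3 activations have already been extracted into two arrays with one feature vector per row; how the project obtains several vectors per image is not stated here. The sketch evaluates the trace of the matrix square root from the eigenvalues of $\Sigma_1 \Sigma_2$ instead of calling a general matrix square root.

```python
import numpy as np


def frechet_distance(feats1, feats2):
    """Frechet distance between Gaussians fitted to two feature sets (rows = feature vectors)."""
    mu1, mu2 = feats1.mean(axis=0), feats2.mean(axis=0)
    s1 = np.cov(feats1, rowvar=False)
    s2 = np.cov(feats2, rowvar=False)
    # Tr((S1 S2)^{1/2}) = sum of square roots of the eigenvalues of S1 S2 (real and >= 0 for PSD inputs)
    eig = np.linalg.eigvals(s1 @ s2)
    tr_sqrt = np.sqrt(np.clip(eig.real, 0.0, None)).sum()
    return float(((mu1 - mu2) ** 2).sum() + np.trace(s1) + np.trace(s2) - 2.0 * tr_sqrt)
```

Shifting every feature vector by a constant leaves both covariances unchanged. In that case FID reduces to the squared distance between the means.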

Color Histogram Similarity (Correlation / Chi-Square)

Color Histogram Similarity evaluates how two images distribute colors across the RGB or HSV color space. This metric focuses purely on global color composition, ignoring spatial structure. For each image, we compute color histograms and compare them using two complementary measures:

Correlation – Measures how closely the two histograms co-vary, with values ranging from –1 to 1. A value of 1 indicates perfectly matched color distributions, while higher values generally correspond to greater color consistency.

Chi-Square Distance – Measures divergence between two histograms by summing the normalized squared differences across bins. A value of 0 indicates identical histograms, and lower values reflect stronger color similarity.

These metrics quantify how well the recolored image preserves the overall palette of the original, making them useful for evaluating global color fidelity in color-transfer tasks.
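Below is a plain-Python sketch of both comparisons on joint RGB histograms. The chi-square variant divides by the first histogram, which matches OpenCV's `HISTCMP_CHISQR` definition used by `cv2.compareHist`. Whether the project used this convention, the 8-bin quantization, or the handling of constant histograms are all assumptions.

```python
import math


def rgb_histogram(pixels, bins=8):
    """Joint RGB histogram (bins^3 cells) of pixels given as 8-bit (r, g, b) tuples."""
    hist = [0] * bins ** 3
    step = 256 // bins
    for r, g, b in pixels:
        hist[(r // step) * bins * bins + (g // step) * bins + (b // step)] += 1
    return hist


def hist_correlation(h1, h2):
    """Pearson correlation between two histograms, in [-1, 1]."""
    n = len(h1)
    m1, m2 = sum(h1) / n, sum(h2) / n
    cov = sum((a - m1) * (b - m2) for a, b in zip(h1, h2))
    den = math.sqrt(sum((a - m1) ** 2 for a in h1) * sum((b - m2) ** 2 for b in h2))
    return cov / den if den > 0 else 0.0  # constant histogram: correlation undefined, report 0 here


def hist_chi_square(h1, h2):
    """Chi-square distance normalized by the first histogram (bins where h1 is zero are skipped)."""
    return sum((a - b) ** 2 / a for a, b in zip(h1, h2) if a > 0)
```

Because this chi-square divides by the first histogram only, it is asymmetric. Swapping the original and the recolored image can change the value substantially.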

D-CIELAB (ΔEab for Normal and Color-Deficient Observers)

D-CIELAB measures pixel-wise color differences using CIELAB (Lab) color space and extends them to different types of observers, including those with simulated color-vision deficiencies. For each pair of corresponding pixels in the original and recolored images, we compute the Euclidean distance ΔEab in Lab space, then average over all pixels.

We report four average ΔE values:

- ΔEab (trichromat) – Mean per-pixel color difference for a normal color-vision observer.

- ΔEab (protan) – Mean difference as seen by a protan (L-cone-deficient) observer.

- ΔEab (deutan) – Mean difference as seen by a deutan (M-cone-deficient) observer.

- ΔEab (tritan) – Mean difference as seen by a tritan (S-cone-deficient) observer.

In all cases, lower values indicate more similar colors. Differences around 1 in ΔEab are often near the threshold of human just-noticeable color differences, while larger numbers indicate more visible shifts. This metric allows us to assess how well the color transfer preserves appearance for both normal and color-blind viewers.
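For the trichromat case, the computation is an sRGB-to-CIELAB conversion followed by a per-pixel Euclidean distance. The plain-Python sketch below assumes sRGB input in [0, 1] and the D65 white point. The protan, deutan, and tritan variants additionally require simulating each deficiency (typically a linear transform in linear RGB) before the conversion, which is not shown.

```python
import math


def srgb_to_lab(rgb):
    """sRGB in [0, 1] -> CIELAB (D65 white), via linear RGB and XYZ."""
    def lin(c):  # undo the sRGB transfer curve
        return c / 12.92 if c <= 0.04045 else ((c + 0.055) / 1.055) ** 2.4

    r, g, b = (lin(c) for c in rgb)
    x = 0.4124564 * r + 0.3575761 * g + 0.1804375 * b
    y = 0.2126729 * r + 0.7151522 * g + 0.0721750 * b
    z = 0.0193339 * r + 0.1191920 * g + 0.9503041 * b

    def f(t):  # CIELAB companding with the linear segment near black
        d = 6 / 29
        return t ** (1 / 3) if t > d ** 3 else t / (3 * d * d) + 4 / 29

    fx, fy, fz = f(x / 0.95047), f(y / 1.0), f(z / 1.08883)
    return (116 * fy - 16, 500 * (fx - fy), 200 * (fy - fz))


def mean_delta_e_ab(img1, img2):
    """Mean per-pixel CIE76 difference between two equally sized lists of sRGB pixels."""
    diffs = [math.dist(srgb_to_lab(p), srgb_to_lab(q)) for p, q in zip(img1, img2)]
    return sum(diffs) / len(diffs)
```

White versus black gives a ΔEab of 100, the full lightness range. Typical recoloring differences in the result tables are therefore a small fraction of that span.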

CIE94 Color Difference

CIE94 is a perceptual color-difference formula that improves on raw ΔEab by weighting lightness, chroma, and hue differences according to human sensitivity. We convert both images to Lab space and, for each pixel, decompose the color difference into:

- ΔL – difference in lightness,

- ΔC – difference in chroma (saturation),

- ΔH – difference in hue,

and then combine these components with empirically derived weights to obtain a single ΔE94 value per pixel. We average ΔE94 across all pixels to get the final score.

- 0 indicates identical colors.

- Lower mean ΔE94 values correspond to more perceptually similar recolorings.

Compared to plain ΔEab, CIE94 is more aligned with human judgments in many regions of color space.
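The CIE94 formula fits in a few lines. The sketch makes three assumptions:

- Inputs are Lab colors.
- The graphic-arts constants apply: $k_L = 1$, $K_1 = 0.045$, $K_2 = 0.015$. Textile applications use $k_L = 2$, $K_1 = 0.048$, $K_2 = 0.014$ instead.
- The original pixel is the reference color, since CIE94 is not symmetric in its two arguments.

```python
import math


def delta_e_94(lab_ref, lab, k_l=1.0, k1=0.045, k2=0.015):
    """CIE94 difference (graphic-arts constants); lab_ref, the original pixel, sets S_C and S_H."""
    L1, a1, b1 = lab_ref
    L2, a2, b2 = lab
    c1, c2 = math.hypot(a1, b1), math.hypot(a2, b2)
    dL, dC = L1 - L2, c1 - c2
    dH2 = max((a1 - a2) ** 2 + (b1 - b2) ** 2 - dC ** 2, 0.0)  # squared hue difference; clamp rounding
    s_l, s_c, s_h = 1.0, 1.0 + k1 * c1, 1.0 + k2 * c1
    return math.sqrt((dL / (k_l * s_l)) ** 2 + (dC / s_c) ** 2 + dH2 / s_h ** 2)
```

The chroma-dependent factors $S_C$ and $S_H$ shrink differences among saturated colors. A 10-unit chroma change at reference chroma 10 counts as about 6.9 instead of 10.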

CIEDE2000 Color Difference

CIEDE2000 (ΔE00) is a more recent and widely adopted color-difference formula that further refines perceptual uniformity compared to CIE94. It introduces additional corrections for:

- Nonlinearities in chroma and hue perception,

- Interactions between chroma and hue differences,

- Local variations in perceptual sensitivity across Lab space.

As with CIE94, we compute ΔE00 for each pixel between the original and recolored images and average over all pixels.

- 0 indicates identical colors.

- Lower mean ΔE00 values indicate recolorings that are closer to the original in terms of human-perceived color.

CIEDE2000 is calibrated against large psychophysical datasets and corrects known non-uniformities in CIELAB and CIE94.
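CIEDE2000 has more moving parts, so a reference sketch is useful for checking numbers. The plain-Python function below follows the widely used formulation by Sharma, Wu, and Dalal, with all parametric factors set to 1. The check value is the first pair of their published test data, whose expected ΔE00 is about 2.0425. For array inputs, scikit-image provides `skimage.color.deltaE_ciede2000`.

```python
import math


def ciede2000(lab1, lab2, k_l=1.0, k_c=1.0, k_h=1.0):
    """CIEDE2000 color difference between two CIELAB colors."""
    L1, a1, b1 = lab1
    L2, a2, b2 = lab2
    c_bar = (math.hypot(a1, b1) + math.hypot(a2, b2)) / 2
    g = 0.5 * (1 - math.sqrt(c_bar ** 7 / (c_bar ** 7 + 25 ** 7)))
    a1p, a2p = (1 + g) * a1, (1 + g) * a2          # rescale a* (correction for near-neutral colors)
    c1p, c2p = math.hypot(a1p, b1), math.hypot(a2p, b2)
    h1p = math.degrees(math.atan2(b1, a1p)) % 360
    h2p = math.degrees(math.atan2(b2, a2p)) % 360

    d_lp, d_cp = L2 - L1, c2p - c1p
    if c1p * c2p == 0:
        d_hp = 0.0
    else:
        d_hp = h2p - h1p
        if d_hp > 180:
            d_hp -= 360
        elif d_hp < -180:
            d_hp += 360
    d_big_hp = 2 * math.sqrt(c1p * c2p) * math.sin(math.radians(d_hp / 2))

    l_bar, c_barp = (L1 + L2) / 2, (c1p + c2p) / 2
    if c1p * c2p == 0:
        h_barp = h1p + h2p
    elif abs(h1p - h2p) <= 180:
        h_barp = (h1p + h2p) / 2
    elif h1p + h2p < 360:
        h_barp = (h1p + h2p + 360) / 2
    else:
        h_barp = (h1p + h2p - 360) / 2

    t = (1 - 0.17 * math.cos(math.radians(h_barp - 30)) + 0.24 * math.cos(math.radians(2 * h_barp))
         + 0.32 * math.cos(math.radians(3 * h_barp + 6)) - 0.20 * math.cos(math.radians(4 * h_barp - 63)))
    d_theta = 30 * math.exp(-(((h_barp - 275) / 25) ** 2))
    r_c = 2 * math.sqrt(c_barp ** 7 / (c_barp ** 7 + 25 ** 7))
    s_l = 1 + 0.015 * (l_bar - 50) ** 2 / math.sqrt(20 + (l_bar - 50) ** 2)
    s_c = 1 + 0.045 * c_barp
    s_h = 1 + 0.015 * c_barp * t
    r_t = -math.sin(math.radians(2 * d_theta)) * r_c   # chroma-hue interaction term (blue region)

    tl, tc, th = d_lp / (k_l * s_l), d_cp / (k_c * s_c), d_big_hp / (k_h * s_h)
    return math.sqrt(tl ** 2 + tc ** 2 + th ** 2 + r_t * tc * th)
```

Unlike CIE94, this formula is symmetric in its two arguments. It therefore does not matter which image is treated as the reference.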

Structural Similarity Index (SSIM)

Structural Similarity Index (SSIM) measures perceptual image quality by comparing local patterns of luminance, contrast, and structure rather than raw pixel differences. For each color channel, we compute SSIM over local windows using Gaussian-weighted means and variances, then average across channels and spatial locations.

- The SSIM score typically ranges from 0 to 1 for natural images.

- A value of 1 means the images are structurally identical.

- Higher values indicate better preservation of local structure, contrast, and luminance.

In our context, SSIM tells us whether the color transfer preserves the underlying Pokémon shapes, edges, and textures, even when colors change.
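Below is a NumPy sketch of this computation. It uses an 11×11 Gaussian window (σ = 1.5) and the standard constants $C_1 = (0.01R)^2$ and $C_2 = (0.03R)^2$ for data range $R$. It zero-pads at the borders, which slightly lowers scores near the image edge. Library implementations such as scikit-image's `structural_similarity` handle borders and covariance normalization differently, so numbers will not match exactly. Function names are hypothetical.

```python
import numpy as np


def _gaussian_blur(img, sigma=1.5, radius=5):
    """Separable Gaussian filter with zero padding at the borders (2D input)."""
    if min(img.shape) < 2 * radius + 1:
        raise ValueError("image is smaller than the Gaussian window")
    t = np.arange(-radius, radius + 1)
    k = np.exp(-t ** 2 / (2 * sigma ** 2))
    k /= k.sum()
    rows = np.apply_along_axis(lambda r: np.convolve(r, k, mode="same"), 1, img)
    return np.apply_along_axis(lambda c: np.convolve(c, k, mode="same"), 0, rows)


def ssim_channel(x, y, data_range=1.0):
    """Mean of the local SSIM map for one channel."""
    c1, c2 = (0.01 * data_range) ** 2, (0.03 * data_range) ** 2  # stabilizing constants
    mx, my = _gaussian_blur(x), _gaussian_blur(y)
    sxx = _gaussian_blur(x * x) - mx * mx      # local variances and covariance
    syy = _gaussian_blur(y * y) - my * my
    sxy = _gaussian_blur(x * y) - mx * my
    ssim_map = ((2 * mx * my + c1) * (2 * sxy + c2)) / ((mx * mx + my * my + c1) * (sxx + syy + c2))
    return float(ssim_map.mean())


def ssim_rgb(a, b, data_range=1.0):
    """Mean SSIM over the color channels of two H x W x 3 images."""
    return float(np.mean([ssim_channel(a[..., c], b[..., c], data_range) for c in range(a.shape[-1])]))
```

Because the score is computed per channel, a pure hue rotation that moves intensity between channels can still lower SSIM even when edges are perfectly preserved.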

VGG Feature-Space Distance

The VGG feature-space distance measures how different two images are in the high-level feature space of a pretrained VGG16 network. We feed both the original and recolored images into VGG16 and extract intermediate convolutional feature maps. Each spatial location in a feature map is treated as a point in a high-dimensional feature space.

We then compute the symmetric Hausdorff distance between the two sets of feature vectors:

- For each feature point in the original image, find the closest feature point in the recolored image, and record the worst (largest) such distance.

- Repeat in the opposite direction (recolored to original).

- Take the maximum of these two worst-case distances as the final metric.

Lower VGG distances mean that, at every location, the network can find a similar feature in the other image, indicating similar high-level structure and texture. Higher values indicate more substantial semantic or structural changes beyond simple color shifts.
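Below is a NumPy sketch of the symmetric Hausdorff distance on two point sets. It also includes a helper that turns a C × H × W feature map into H·W points of dimension C. Running VGG16 is not shown. The brute-force pairwise distance matrix is only practical for small feature maps; `scipy.spatial.distance.directed_hausdorff` computes each directed term more economically.

```python
import numpy as np


def symmetric_hausdorff(feats_a, feats_b):
    """Symmetric Hausdorff distance between two point sets (rows are feature vectors)."""
    d = np.sqrt(((feats_a[:, None, :] - feats_b[None, :, :]) ** 2).sum(-1))  # pairwise distances
    a_to_b = d.min(axis=1).max()   # worst best-match from A into B
    b_to_a = d.min(axis=0).max()   # worst best-match from B into A
    return float(max(a_to_b, b_to_a))


def feature_map_to_points(fmap):
    """Flatten a C x H x W feature map into H*W points of dimension C."""
    c = fmap.shape[0]
    return fmap.reshape(c, -1).T
```

Since the metric takes a maximum, a single badly matched location can dominate the score. It is therefore more sensitive to localized artifacts than averaged distances.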

Quantitative and Qualitative Results

Experiment 1

| Metric | Palette Dif | Clustering | Clustering_DS | Convex Hull | NS | NS-S |
|---|---|---|---|---|---|---|
| FID | 795.74/229.05 | 106.22/110.64 | 46.78/61.91 | 96.12/66.19 | 107.60/108.85 | 75.15/42.77 |
| Histogram-Corr | 0.91/0.96 | 0.91/0.96 | 0.90/0.96 | 0.87/0.96 | 0.91/0.96 | 0.90/0.96 |
| Histogram-Chi2 | 2.79/36.67 | 8.97/2.35 | 535.42/2.33 | 23.28/2.02 | 8.35/2.39 | 6.38/9.97 |
| CIELAB | 19.54/14.45 | 18.32/14.88 | 13.34/10.08 | 13.03/8.76 | 18.33/14.63 | 16.88/15.51 |
| CIE94 | 14.82/10.72 | 13.44/11.13 | 8.84/7.41 | 8.78/6.71 | 13.45/10.98 | 12.20/11.76 |
| CIEDE2000 | 13.97/10.06 | 13.10/10.97 | 9.20/7.83 | 8.77/7.14 | 13.11/10.81 | 11.82/11.20 |
| SSIM | 0.82/0.90 | 0.96/0.95 | 0.99/0.99 | 0.98/0.99 | 0.96/0.96 | 0.97/0.95 |
| VGG Latent Space Distance | 35.29/32.63 | 15.46/21.98 | 14.13/21.83 | 13.73/18.18 | 15.76/22.05 | 17.29/18.31 |

Experiment 2

| Metric | Palette Dif | Clustering | Clustering_DS | Convex Hull | NS | NS-S |
|---|---|---|---|---|---|---|
| FID | 604.47/428.24 | 506.28/846.57 | 191.41/652.94 | 22.94/305.28 | 476.33/421.44 | 198.56/327.57 |
| Histogram-Corr | 0.98/0.98 | 0.98/0.98 | -0.00/0.98 | 0.99/0.98 | 0.98/0.98 | 0.98/0.99 |
| Histogram-Chi2 | 0.72/53.33 | 0.73/52.58 | 4.69/108.98 | 18.49/40.69 | 0.74/51.02 | 1.00/38.11 |
| CIELAB | 23.98/17.02 | 16.46/16.59 | 13.57/18.01 | 1.73/13.16 | 18.37/16.04 | 7.03/21.23 |
| CIE94 | 17.48/11.64 | 11.77/10.97 | 11.77/10.98 | 1.13/5.69 | 13.10/10.52 | 5.21/14.10 |
| CIEDE2000 | 12.09/10.85 | 10.30/9.41 | 10.59/9.37 | 1.23/5.44 | 11.25/10.18 | 4.85/12.43 |
| SSIM | 0.73/0.85 | 0.86/0.93 | 0.98/0.94 | 0.99/0.96 | 0.80/0.87 | 0.95/0.88 |
| VGG Latent Space Distance | 38.70/42.80 | 34.23/37.29 | 23.12/29.51 | 12.53/29.97 | 37.86/37.41 | 25.25/25.67 |

Experiment 3

| Metric | Palette Dif | Clustering | Clustering_DS | Convex Hull | NS | NS-S |
|---|---|---|---|---|---|---|
| FID | 766.17/717.07 | 205.08/215.13 | 217.28/236.68 | 445.31/146.82 | 150.13/147.53 | 69.81/52.21 |
| Histogram-Corr | 0.95/0.98 | 0.95/0.98 | -0.00/0.00 | 0.95/0.98 | 0.95/0.98 | 0.96/0.99 |
| Histogram-Chi2 | 0.82/1.20 | 1160.72/2.03 | 1.85/1118.66 | 143.71/3.60 | 1.13/1.10 | 1.02/18.90 |
| CIELAB | 32.61/27.79 | 9.69/20.75 | 39.02/23.37 | 7.42/16.33 | 31.56/23.39 | 13.82/12.89 |
| CIE94 | 19.73/18.92 | 7.00/12.61 | 31.80/14.86 | 6.14/7.95 | 18.42/14.38 | 9.70/7.60 |
| CIEDE2000 | 16.95/16.65 | 6.43/11.03 | 24.08/13.29 | 5.95/7.06 | 15.87/12.65 | 9.47/8.14 |
| SSIM | 0.79/0.71 | 0.95/0.91 | 0.95/0.92 | 0.98/0.93 | 0.87/0.88 | 0.98/0.96 |
| VGG Latent Space Distance | 50.05/43.90 | 23.22/27.97 | 12.17/25.04 | 15.94/23.81 | 30.56/30.23 | 16.39/21.61 |

Experiment 4

| Metric | Palette Dif | Clustering | Clustering_DS | Convex Hull | NS | NS-S |
|---|---|---|---|---|---|---|
| FID | 303.70/474.41 | 98.14/85.93 | 91.85/53.84 | 76.67/119.88 | 178.35/175.20 | 91.03/150.78 |
| Histogram-Corr | 1.00/0.96 | 1.00/0.96 | 1.00/0.97 | 1.00/0.97 | 1.00/0.97 | 1.00/0.98 |
| Histogram-Chi2 | 6.48/0.73 | 0.65/0.73 | 1.47/0.80 | 3.81/2.61 | 1.02/0.73 | 0.88/2.90 |
| CIELAB | 23.33/30.84 | 21.80/30.68 | 20.28/27.59 | 15.11/13.90 | 21.09/31.86 | 12.43/15.90 |
| CIE94 | 14.77/18.98 | 12.81/17.68 | 11.36/15.53 | 7.77/9.86 | 12.72/19.12 | 6.28/12.42 |
| CIEDE2000 | 13.03/17.22 | 11.45/15.81 | 10.31/14.03 | 6.57/8.89 | 11.54/17.66 | 5.19/11.14 |
| SSIM | 0.81/0.76 | 0.89/0.88 | 0.93/0.94 | 0.96/0.97 | 0.85/0.82 | 0.90/0.83 |
| VGG Latent Space Distance | 40.42/42.78 | 37.38/30.40 | 24.55/27.87 | 32.95/23.34 | 35.27/34.75 | 17.47/38.24 |

Experiment 5

| Metric | Palette Dif | Clustering | Clustering_DS | Convex Hull | NS | NS-S |
|---|---|---|---|---|---|---|
| FID | 635.17/1461.28 | 141.47/259.03 | 140.36/193.12 | 136.43/414.58 | 616.74/942.14 | 152.16/89.86 |
| Histogram-Corr | 0.99/0.96 | 0.99/0.96 | 0.98/0.96 | 0.99/0.97 | 0.99/0.96 | 0.98/0.98 |
| Histogram-Chi2 | 52.29/0.70 | 49.28/0.82 | 112.63/4.12 | 73.87/17.01 | 11.84/9.26 | 30.80/1.10 |
| CIELAB | 16.27/23.28 | 13.94/22.01 | 12.41/19.99 | 9.81/8.95 | 19.24/24.00 | 19.51/9.59 |
| CIE94 | 10.59/14.25 | 8.17/12.88 | 6.85/11.81 | 4.76/6.34 | 12.37/14.36 | 13.41/6.29 |
| CIEDE2000 | 9.78/12.49 | 7.83/11.43 | 6.39/10.59 | 4.44/6.96 | 10.79/12.64 | 12.37/6.06 |
| SSIM | 0.76/0.79 | 0.93/0.90 | 0.94/0.94 | 0.97/0.94 | 0.83/0.86 | 0.85/0.97 |
| VGG Latent Space Distance | 41.13/40.54 | 27.05/19.41 | 28.61/16.23 | 35.77/15.34 | 32.55/24.16 | 23.39/17.14 |

Experiment 6

| Metric | Palette Dif | Clustering | Clustering_DS | Convex Hull | NS | NS-S |
|---|---|---|---|---|---|---|
| FID | 670.79/943.51 | 287.37/460.26 | 235.06/271.43 | 161.65/198.80 | 280.37/459.79 | 280.11/127.84 |
| Histogram-Corr | 0.80/0.92 | 0.80/0.92 | 0.78/0.91 | 0.79/0.92 | 0.82/0.92 | 0.81/0.94 |
| Histogram-Chi2 | 4.78/114.91 | 1.34/6.70 | 130.44/2658.28 | 112.91/449.76 | 1.47/6.69 | 56.17/3.07 |
| CIELAB | 25.36/31.34 | 26.38/23.69 | 15.39/19.88 | 11.04/16.62 | 25.92/23.68 | 20.30/38.21 |
| CIE94 | 17.65/22.71 | 18.53/16.90 | 10.85/11.73 | 8.48/8.01 | 18.34/16.87 | 13.58/21.73 |
| CIEDE2000 | 15.35/19.42 | 16.42/15.10 | 10.06/11.58 | 8.09/7.85 | 16.30/15.08 | 12.20/20.39 |
| SSIM | 0.85/0.63 | 0.88/0.88 | 0.98/0.95 | 0.98/0.95 | 0.88/0.88 | 0.93/0.89 |
| VGG Latent Space Distance | 30.93/41.00 | 23.97/24.99 | 15.14/22.16 | 21.43/17.08 | 23.90/25.02 | 29.67/22.50 |

Human Preference

We ask human volunteers to select their favorite color-transfer result. The test images are generated by randomly picking a pair of Pokémon and running each algorithm on it.

| Metric | Palette Dif | Clustering | Clustering_DS | Convex Hull | NS | NS-S |
|---|---|---|---|---|---|---|
| Preference ratio (%) | 3.5 | 12.4 | 30.6 | 11.7 | 13.2 | 28.6 |

Conclusions

In this project, we explored a family of palette- and cluster-based color transfer methods for Pokémon artwork, aiming to achieve visually appealing style changes while preserving content and structure. Across six Pokémon pairs and multiple quantitative metrics, our experiments show that simple palette differencing provides a useful creative baseline. Still, more structured methods that incorporate palette geometry, spatial grouping, or cluster correspondences produce recolorings that are both more stable and more faithful to the originals.

From the quantitative results, several consistent trends emerge. The naive Palette Dif baseline typically yields the largest FID, ΔE, and VGG feature-space distances, indicating substantial shifts in both color appearance and high-level structure. In contrast, methods that explicitly align color distributions—such as Convex-hull transfer and Palette-aware clustering—generally achieve much lower ΔE (CIELAB, CIE94, CIEDE2000) and higher SSIM, reflecting better preservation of Pokémon shapes, shading, and semantic details. The Convex-hull method, in particular, often attains some of the lowest perceptual color differences while still producing convincing stylizations, suggesting that preserving barycentric relationships in palette space is an effective way to constrain recoloring.

Spatially aware methods further improve robustness and visual coherence. Both Neighbor Segments (NS) and Neighbor Segments with Superpixels (NS-S) explicitly regularize neighboring regions, reducing local artifacts and preserving edges. This is reflected in SSIM scores that are consistently higher than the baseline's and in competitive VGG distances. NS-S often strikes a favorable balance between global palette adaptation and local smoothness, particularly on more complex or textured Pokémon, where superpixel grouping helps prevent noisy or flickering color assignments. Direct Spatial Clustering Transfer (Clustering_DS) demonstrates that palette-like structure can also be discovered directly in Lab+XY space. When cluster matching is stable, this method can achieve strong numerical performance. However, its sensitivity to clustering hyperparameters sometimes leads to larger histogram divergences or perceptual shifts.

Taken together, our results highlight that no single metric fully captures “good” color transfer. Methods that aggressively change color (e.g., Palette Dif) can look creative but rank poorly on perceptual distance measures. In contrast, very conservative methods score well numerically but may feel less stylistically interesting. Evaluating with a diverse set of metrics—FID, histogram similarity, multiple ΔE formulas, SSIM, and VGG feature distances—reveals complementary aspects of quality and emphasizes the importance of balancing stylistic expressiveness with structural fidelity. In practice, we find that geometry-aware (Convex-hull) and spatially regularized (NS-S) approaches offer the most reliable trade-off across different Pokémon pairs.

There are several promising directions for future work. First, integrating learned deep features directly into the transfer objective could better align the recolorings with human perceptual judgments than hand-designed metrics alone. Second, extending our framework to handle backgrounds, multi-Pokémon scenes, or temporal consistency in animations would make the methods more broadly applicable to fan art and game assets. Finally, adding interactive controls (e.g., user-pinned colors, region-specific constraints, or color-vision–aware sliders) could turn these algorithms into practical tools for artists and designers. Overall, our study suggests that combining compact palettes, simple geometric constraints, and lightweight spatial regularization is a powerful recipe for controllable, Pokémon-style color transfer.

References

[1] Lei, Mingkun, et al. "StyleStudio: Text-Driven Style Transfer with Selective Control of Style Elements." Proceedings of the Computer Vision and Pattern Recognition Conference. 2025.

[2] Reinhard, Erik, et al. "Color transfer between images." IEEE Computer Graphics and Applications 21.5 (2001): 34-41.

[3] Lv, Chenlei, et al. "Color transfer for images: A survey." ACM Transactions on Multimedia Computing, Communications and Applications 20.8 (2024): 1-29.

[4] Ahmed, Mohiuddin, Raihan Seraj, and Syed Mohammed Shamsul Islam. "The k-means algorithm: A comprehensive survey and performance evaluation." Electronics 9.8 (2020): 1295.

[5] Wang, Y., Y. Liu, and K. Xu. "An Improved Geometric Approach for Palette-based Image Decomposition and Recoloring." Computer Graphics Forum 38 (2019): 11-22. https://doi.org/10.1111/cgf.13812

[6] Du, Zheng-Jun, Kai-Xiang Lei, Kun Xu, Jianchao Tan, and Yotam Gingold. "Video Recoloring via Spatial-Temporal Geometric Palettes." ACM Transactions on Graphics 40.4 (2021): Article 150, 16 pages. https://doi.org/10.1145/3450626.3459675

Appendix I

Our code is available at: https://drive.google.com/drive/folders/1aCk4Cb7wqQxMhNLclY0jbShn3LOqAL0n?usp=sharing

Appendix II

Wenxiao Cai: proposal, investigation, codes and experiments, report writing and presentation

Yifei Deng: proposal, investigation, codes and experiments, report writing and presentation