ISETHDR CV Experiment

== Introduction ==

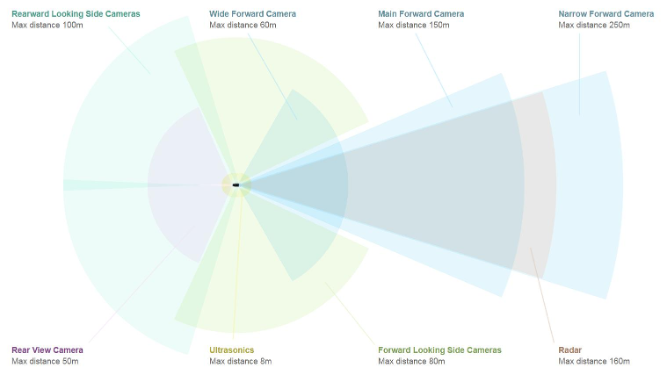

Autonomous vehicles rely heavily on robust perception systems to interpret complex driving environments. Although many sensor modalities exist, such as LiDAR, radar, and ultrasonic sensors, the automotive industry increasingly prioritizes camera-based vision systems because of their lower cost and rich spatial detail. Typical AV systems include 6–10 cameras compared with 7 LiDAR units, resulting in substantial cost savings for large-scale deployment.

In this project, we examine how HDR (high dynamic range) and exposure settings in the camera image signal processing (ISP) pipeline affect the performance of a modern computer vision model, YOLO-E, when identifying objects in low-light or highly variable lighting conditions.

Our central research question is:

''How do different HDR and exposure settings influence YOLO’s confidence and reliability in low-light driving scenes?''

== Background == | == Background == | ||

=== Autonomous Vehicle Imaging Systems === | |||

* Automatic emergency braking | |||

* Speed assist | |||

* Autopark / Autosteer | |||

* Traffic sign awareness | |||

* Navigation under Autopilot | |||

A typical AV uses 8–9 cameras to provide a complete 360° view, making reliable image processing essential. | |||

[[File:CameraSelfDrive.png]] | |||

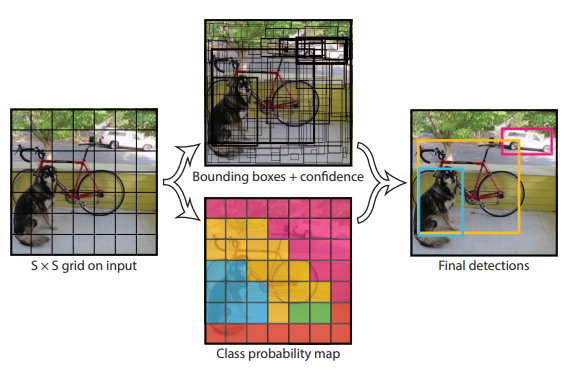

=== YOLO-E - You Only Look Once computer vision model === | |||

* Single-shot detector: Divides the image into grid cells and predicts bounding boxes + class probabilities. | |||

* Confidence estimation: Uses a sigmoid activation function, outputting scores from 0 → 1. | |||

* Bounding boxes: Predicted relative to grid cell → refined using anchor boxes. | |||

* HDR sensitivity: YOLO relies on local contrast; loss of detail from over or under exposure reduces object confidence. | |||

[[File:Yolo.PNG]] | |||

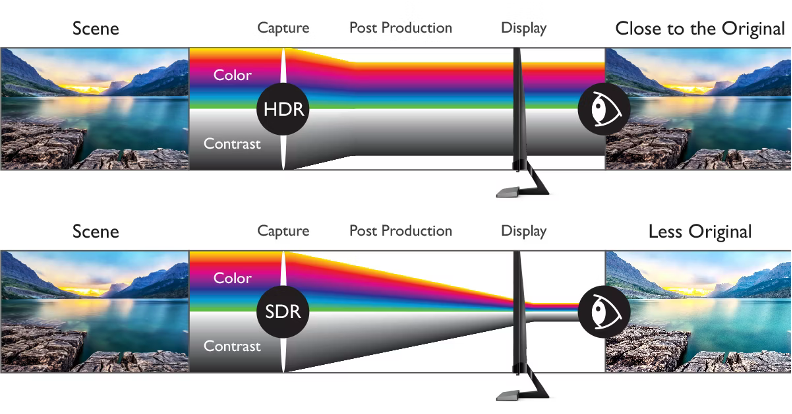
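The confidence estimation described in this section can be illustrated with a minimal sketch of the sigmoid mapping. The raw scores below are made-up values for illustration and are not taken from YOLO-E.

```python
import math


def sigmoid(logit: float) -> float:
    """Map an unbounded raw score (logit) to a confidence in (0, 1)."""
    return 1.0 / (1.0 + math.exp(-logit))


# Hypothetical raw scores, for illustration only.
example_logits = [-4.0, 0.0, 4.0]
confidences = [sigmoid(z) for z in example_logits]
```

A strongly negative score maps close to 0, a score of zero maps to exactly 0.5, and a strongly positive score maps close to 1.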

=== HDR - High Dynamic Range ===

* Dynamic range is influenced by exposure time, ambient illumination, and sensor design.
* HDR scenes preserve detail in both dark and bright regions.
* SDR (standard dynamic range) collapses detail near shadows and highlights → degrading YOLO detection.

Our challenge: Represent and manipulate HDR conditions using ISETCam tools.

[[File:HDR.png]]
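Sensor dynamic range is usually quoted in decibels. As a minimal sketch, assuming the common image-sensor convention of 20·log10 of the ratio between the largest and smallest usable signal, the conversion looks like this. The function names are hypothetical helpers, not ISETCam calls.

```python
import math


def dynamic_range_db(max_signal: float, min_signal: float) -> float:
    """Dynamic range in dB for the ratio of largest to smallest usable signal."""
    if max_signal <= 0 or min_signal <= 0:
        raise ValueError("signals must be positive")
    return 20.0 * math.log10(max_signal / min_signal)


def db_to_ratio(db: float) -> float:
    """Inverse conversion: dB back to a linear signal ratio."""
    return 10.0 ** (db / 20.0)


# A 1000:1 signal ratio corresponds to 60 dB under this convention.
example_db = dynamic_range_db(1000.0, 1.0)
```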

== Methods ==

=== Image generation ===

First, we need to assemble a dataset of driving images to run the YOLO algorithm on. We acquired four driving scenes from the ISR HDR Sensor Repository. Each scene includes .exr files that contain radiance data for the sky, street lights, headlights, and other lights.

::{| class="wikitable" style="border:none; text-align:center;"
|-
| [[File:id1112201236_ar0132at_day_05_4.0ms.png|200px]]
| [[File:id1112184733_ar0132at_day_05_4.0ms.png|200px]]
| [[File:id1113094429_ar0132at_day_05_4.0ms.png|200px]]
| [[File:id1114031438_ar0132at_day_05_4.0ms.png|200px]]
|-
| colspan="4" style="text-align:center" | ISET HDR Scenes 1112201236, 1112184733, 1113094429, and 1114031438
|}

To set up the scenes, we consider four lighting scenarios that commonly occur during driving. Each scenario is defined by a vector of weights for the headlights, streetlights, other lights, and sky map, in that order. The daytime scenario has strong illumination from the sky only. The nighttime scenario is illuminated almost entirely by headlights and streetlights. The dusk scenario falls between day and night; it combines reduced sky illumination (a weight of 20, compared with 50 for daytime) with the nighttime headlights and streetlights. Finally, the blind scenario represents a nighttime scene with stronger artificial lighting; the headlight weight is 10x and the streetlight weight is 100x that of the nighttime scenario.

: ''Illumination vector: [ headlights, streetlights, other lights, sky map ]''

* Day - [ 0, 0, 0, 50 ]
* Night - [ 0.2, 0.001, 0, 0.0005 ]
* Dusk - [ 0.2, 0.001, 0, 20 ]
* Blind - [ 2, 0.1, 0, 0.0005 ]

::{| class="wikitable" style="border:none; text-align:center;"
|-
| [[File:id1114031438_ar0132at_day_07_8.0ms.png|200px]]
| [[File:id1114031438_ar0132at_dusk_07_8.0ms.png|200px]]
| [[File:id1114031438_ar0132at_night_07_8.0ms.png|200px]]
| [[File:id1114031438_ar0132at_blind_07_8.0ms.png|200px]]
|-
| colspan="4" style="text-align:center" | Scene 1114031438 under day, dusk, night, and blind light scenarios with an exposure time of 8 ms
|}
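The illumination vectors can be read as weights in a weighted sum of the per-light-group radiance maps. The sketch below assumes linear mixing and uses placeholder arrays in place of the real .exr data; <code>combine_light_groups</code> is a hypothetical helper, not an ISETCam function.

```python
import numpy as np

# Weights in the order [headlights, streetlights, other lights, sky map],
# copied from the scenario list in this section.
SCENARIOS = {
    "day": [0.0, 0.0, 0.0, 50.0],
    "night": [0.2, 0.001, 0.0, 0.0005],
    "dusk": [0.2, 0.001, 0.0, 20.0],
    "blind": [2.0, 0.1, 0.0, 0.0005],
}


def combine_light_groups(groups, weights):
    """Weighted sum of per-light-group radiance maps (assumes linear mixing)."""
    groups = np.asarray(groups, dtype=float)      # shape (4, H, W)
    weights = np.asarray(weights, dtype=float)    # shape (4,)
    return np.tensordot(weights, groups, axes=1)  # shape (H, W)


# Placeholder radiance maps of ones stand in for the real light-group data.
placeholder_groups = np.ones((4, 2, 3))
night_scene = combine_light_groups(placeholder_groups, SCENARIOS["night"])
```

With unit placeholder maps, every pixel of the night scene equals the sum of the night weights, which makes the relative contribution of each light group easy to check.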

Next, we need to set up the camera. We selected four sensors. The AR0132AT and MT9V024 are single-pixel sensors for automotive applications, while the OV2312 is a split-pixel sensor, also for automotive applications. In contrast, the IMX363 is a single-pixel sensor for smartphone applications.

Dynamic range, field of view, and resolution are some of the sensor parameters relevant to driving applications. In this project, we examine the influence of dynamic range on object identification. A large field of view is advantageous: since the car needs to sense 360 degrees around its surroundings, a larger field of view means that fewer sensors are required. A higher resolution, however, is not necessarily advantageous. The objective is simply to identify objects rather than to distinguish fine details, so a high resolution may require excessive data transmission and processing. We report the field of view and resolution (table below) but do not vary them in the experiment.

::{| class="wikitable"
! style="text-align:left; padding: 0 12px;"| Sensor
! style="padding: 0 12px;"| Pixel type
! style="text-align:center; padding: 0 12px;"| Resolution
! style="text-align:center; padding: 0 12px;"| Dynamic range
! style="padding: 0 12px;"| Application
! style="text-align:center; padding: 0 12px;"| FOV
|-
| style="padding: 0 12px;"| AR0132AT
| style="padding: 0 12px;"| Single
| style="text-align:center; padding: 0 12px;"| 1.2 MP
| style="text-align:center; padding: 0 12px;"| 115 dB
| style="padding: 0 12px;"| Automotive
| style="text-align:center; padding: 0 12px;"| 76°
|-
| style="padding: 0 12px;"| MT9V024
| style="padding: 0 12px;"| Single
| style="text-align:center; padding: 0 12px;"| 0.4 MP
| style="text-align:center; padding: 0 12px;"| 100 dB
| style="padding: 0 12px;"| Automotive
| style="text-align:center; padding: 0 12px;"| 69°
|-
| style="padding: 0 12px;"| IMX363
| style="padding: 0 12px;"| Single
| style="text-align:center; padding: 0 12px;"| 12 MP
| style="text-align:center; padding: 0 12px;"| n/a
| style="padding: 0 12px;"| Smartphone
| style="text-align:center; padding: 0 12px;"| 21°
|-
| style="padding: 0 12px;"| OV2312
| style="padding: 0 12px;"| Split
| style="text-align:center; padding: 0 12px;"| 2 MP
| style="text-align:center; padding: 0 12px;"| 68 dB
| style="padding: 0 12px;"| Automotive
| style="text-align:center; padding: 0 12px;"| 81°
|}

::''Field of view is calculated for a 4 mm focal length''
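For reference, the field of view of a simple lens follows from the sensor dimension and the focal length. The sketch below uses the standard thin-lens relation; the 6.0 mm sensor dimension is an illustrative value, not one of the sensors in the table, and the source does not state which sensor dimension (horizontal, vertical, or diagonal) was used for the table.

```python
import math


def field_of_view_deg(sensor_size_mm: float, focal_length_mm: float) -> float:
    """Angular field of view: 2 * atan(sensor_size / (2 * focal_length))."""
    return math.degrees(2.0 * math.atan(sensor_size_mm / (2.0 * focal_length_mm)))


# Illustrative sensor dimension (hypothetical) with the 4 mm focal length.
example_fov = field_of_view_deg(6.0, 4.0)
```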

To vary the captured dynamic range, we sweep exposure time across 14 values from 0.1 ms to 1000 ms. In all, we sweep 4 scenes, 4 light scenarios, 4 sensors, and 14 exposure times, for a total of 4 × 4 × 4 × 14 = 896 images.
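The size of the sweep can be checked by enumerating every combination. In this sketch the scene IDs, scenario names, and sensor names come from this page, while the exposure axis is represented by 14 placeholder indices because the individual exposure values are not listed here.

```python
from itertools import product

SCENES = ["1112201236", "1112184733", "1113094429", "1114031438"]
LIGHTS = ["day", "dusk", "night", "blind"]
SENSORS = ["ar0132at", "mt9v024", "imx363", "ov2312"]
# Placeholder indices for the 14 exposure times (actual values not listed).
EXPOSURE_INDICES = range(14)

# One tuple per rendered image: (scene, light scenario, sensor, exposure index).
conditions = list(product(SCENES, LIGHTS, SENSORS, EXPOSURE_INDICES))
```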

=== YOLO ===

* YOLO inference is executed using a nested for-loop structure iterating over sensor settings and scene parameters.
* After processing all 896 images, detection outputs are parsed to extract:
** Object class
** Bounding box
** Confidence score

Heatmaps are generated to visualize how confidence varies with exposure time across sensors.

Below is the script used to iterate over the sensors and run YOLO on the ISET-generated PNGs.

<pre><nowiki>
import os
import re
import argparse
import csv

from ultralytics import YOLO
import cv2
import numpy as np
import pandas as pd
import seaborn as sns
import matplotlib.pyplot as plt


def parse_exposure_from_filename(filename: str):
    """Return the exposure in ms encoded in a filename such as '..._4.0ms.png'."""
    # Accept decimal values so that '4.0ms' parses as 4.0 rather than 0.
    match = re.search(r'(\d+(?:\.\d+)?)\s*ms', filename.lower())
    if match:
        return float(match.group(1))
    return None


def process_images(
    root_dir: str,
    model_path: str,
    output_dir: str,
    csv_path: str
):
    model = YOLO(model_path)

    annotated_dir = os.path.join(output_dir, "annotated")
    os.makedirs(annotated_dir, exist_ok=True)

    csv_fields = [
        "sensor_type",
        "image_path",
        "image_name",
        "exposure_ms",
        "num_detections",
        "class_ids",
        "class_names",
        "confidences"
    ]
    csv_rows = []

    # Walk the image tree; the first folder under root_dir names the sensor.
    for dirpath, _, filenames in os.walk(root_dir):
        for fname in filenames:
            if not fname.lower().endswith(".png"):
                continue

            image_path = os.path.join(dirpath, fname)
            rel_path = os.path.relpath(image_path, root_dir)
            parts = rel_path.split(os.sep)
            sensor_type = parts[0] if len(parts) > 1 else "unknown"
            exposure_ms = parse_exposure_from_filename(fname)

            print(f"Processing: {rel_path} (sensor: {sensor_type}, exposure: {exposure_ms} ms)")

            results = model(image_path)

            # Record an empty row if the model returned nothing for this image.
            if not results:
                csv_rows.append({
                    "sensor_type": sensor_type,
                    "image_path": rel_path,
                    "image_name": fname,
                    "exposure_ms": exposure_ms,
                    "num_detections": 0,
                    "class_ids": "",
                    "class_names": "",
                    "confidences": ""
                })
                continue

            result = results[0]

            # Save a copy of the image with the detections drawn on it.
            annotated_img = result.plot()
            rel_dir = os.path.dirname(rel_path)
            save_dir = os.path.join(annotated_dir, rel_dir)
            os.makedirs(save_dir, exist_ok=True)
            base_name, _ = os.path.splitext(os.path.basename(rel_path))
            annotated_path = os.path.join(save_dir, base_name + "_annotated.png")
            cv2.imwrite(annotated_path, annotated_img)

            boxes = result.boxes
            names = model.names

            class_ids_list = []
            class_names_list = []
            confidences_list = []

            if boxes is not None and len(boxes) > 0:
                for box in boxes:
                    cls_id = int(box.cls)
                    conf = float(box.conf)
                    class_name = names.get(cls_id, str(cls_id))
                    class_ids_list.append(str(cls_id))
                    class_names_list.append(class_name)
                    confidences_list.append(f"{conf:.4f}")
                num_detections = len(class_ids_list)
            else:
                num_detections = 0

            # One CSV row per image; detections are joined with ';'.
            csv_rows.append({
                "sensor_type": sensor_type,
                "image_path": rel_path,
                "image_name": fname,
                "exposure_ms": exposure_ms,
                "num_detections": num_detections,
                "class_ids": ";".join(class_ids_list),
                "class_names": ";".join(class_names_list),
                "confidences": ";".join(confidences_list)
            })

    os.makedirs(os.path.dirname(csv_path) or ".", exist_ok=True)
    with open(csv_path, "w", newline="", encoding="utf-8") as f:
        writer = csv.DictWriter(f, fieldnames=csv_fields)
        writer.writeheader()
        for row in csv_rows:
            writer.writerow(row)

    print(f"\nDone. Annotated images saved to: {annotated_dir}")
    print(f"CSV saved to: {csv_path}")
    print("\nGenerating heatmaps (per sensor type)...")

    df = pd.read_csv(csv_path)
    df["exposure_ms"] = pd.to_numeric(df["exposure_ms"], errors="coerce")

    # Split the ';'-joined columns back into per-image lists.
    df["class_list"] = df["class_names"].fillna("").apply(
        lambda x: x.split(";") if x != "" else []
    )
    df["conf_list"] = df["confidences"].fillna("").apply(
        lambda x: list(map(float, x.split(";"))) if x != "" else []
    )

    # Expand to one row per detection.
    long_rows = []
    for _, row in df.iterrows():
        for cname, conf in zip(row["class_list"], row["conf_list"]):
            long_rows.append([
                row["sensor_type"],
                row["image_name"],
                row["exposure_ms"],
                cname,
                conf
            ])

    df_long = pd.DataFrame(
        long_rows,
        columns=["sensor_type", "image_name", "exposure_ms", "class_name", "confidence"]
    )
    df_long = df_long.dropna(subset=["exposure_ms"])
    df_long["exposure_ms"] = pd.to_numeric(df_long["exposure_ms"], errors="coerce")
    df_long = df_long.sort_values("exposure_ms")

    heatmap_root = os.path.join(output_dir, "heatmaps")
    os.makedirs(heatmap_root, exist_ok=True)

    sensor_types = sorted(df["sensor_type"].unique())

    for sensor_type in sensor_types:
        # Include exposures with no detections so they appear as zero rows.
        all_exposures = (
            df[df["sensor_type"] == sensor_type]["exposure_ms"]
            .dropna()
            .unique()
        )
        all_exposures = np.sort(all_exposures)

        df_s = df_long[df_long["sensor_type"] == sensor_type]

        # Maximum confidence per (exposure, class); missing pairs become 0.
        pivot = (
            df_s
            .groupby(["exposure_ms", "class_name"])["confidence"]
            .max()
            .unstack(fill_value=0.0)
        )
        pivot = pivot.reindex(all_exposures, fill_value=0.0)

        if pivot.empty:
            print(f"No detection data for sensor '{sensor_type}', skipping heatmap.")
            continue

        plt.figure(figsize=(10, 6))
        ax = sns.heatmap(pivot, annot=False, cmap="viridis", vmin=0, vmax=1)
        ax.set_yticks(np.arange(len(all_exposures)) + 0.5)
        # Use :g so that sub-millisecond exposures are not truncated to 0.
        ax.set_yticklabels([f"{e:g} ms" for e in all_exposures])
        plt.title(f"Max Confidence per Class vs Exposure\nSensor: {sensor_type}")
        plt.ylabel("Exposure Time")
        plt.xlabel("Class Name")

        sensor_safe = re.sub(r"[^A-Za-z0-9_.-]+", "_", sensor_type)
        heatmap_path = os.path.join(heatmap_root, f"confidence_heatmap_{sensor_safe}.png")
        plt.savefig(heatmap_path, dpi=300, bbox_inches="tight")
        plt.close()

        print(f"Saved heatmap for sensor '{sensor_type}' → {heatmap_path}")


def main():
    parser = argparse.ArgumentParser(
        description="Run YOLOv8 object detection on a directory tree of PNG images."
    )
    parser.add_argument(
        "--root_dir",
        type=str,
        required=True,
        help="Root directory containing folders of images. Each sensor has its own folder."
    )
    parser.add_argument(
        "--model",
        type=str,
        default="yolov8n.pt",
        help="YOLOv8 model path or model name (e.g. 'yolov8n.pt')."
    )
    parser.add_argument(
        "--output_dir",
        type=str,
        default="yolo_outputs",
        help="Directory to save annotated images and CSV."
    )
    parser.add_argument(
        "--csv_name",
        type=str,
        default="detections.csv",
        help="Name of the output CSV file."
    )
    args = parser.parse_args()

    csv_path = os.path.join(args.output_dir, args.csv_name)

    process_images(
        root_dir=args.root_dir,
        model_path=args.model,
        output_dir=args.output_dir,
        csv_path=csv_path
    )


if __name__ == "__main__":
    main()
</nowiki></pre>
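The heatmap step in the script reduces the detections to one value per exposure and class. A minimal sketch of that reduction on toy data is shown here; the confidences and exposure values are made up for illustration and are not experimental results.

```python
import pandas as pd

# Toy per-detection table (hypothetical values, not experimental data).
toy = pd.DataFrame({
    "exposure_ms": [1.0, 1.0, 8.0],
    "class_name": ["car", "car", "person"],
    "confidence": [0.60, 0.90, 0.40],
})

# Exposures that were rendered, including one with no detections at all.
all_exposures = [1.0, 8.0, 64.0]

# Keep the maximum confidence per (exposure, class); missing pairs become 0.
pivot = (
    toy.groupby(["exposure_ms", "class_name"])["confidence"]
    .max()
    .unstack(fill_value=0.0)
    .reindex(all_exposures, fill_value=0.0)
)
```

Filling missing pairs with zero means a dark cell in the heatmap can indicate either a very low confidence or no detection at all, which is worth keeping in mind when reading the plots.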

[[File:YoloSigmoid.png|200px]]

== Results ==

=== YOLO Output Characteristics ===

* Good HDR performance ⇒ confidence remains stable across exposure times
* Overexposure ⇒ removes gradients and edges → YOLO loses confidence
* Underexposure ⇒ insufficient signal → YOLO fails to detect smaller objects
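The overexposure effect can be illustrated with a toy model. The sketch below assumes a linear sensor response that clips at a saturation level; the radiance ramp and exposure factors are made-up values, and <code>expose</code> is a hypothetical helper rather than an ISETCam function.

```python
import numpy as np


def expose(radiance, exposure, full_well=1.0):
    """Linear sensor response that clips at the saturation level."""
    signal = np.asarray(radiance, dtype=float) * exposure
    return np.clip(signal, 0.0, full_well)


def mean_abs_gradient(signal):
    """Average absolute difference between neighboring samples."""
    return float(np.mean(np.abs(np.diff(signal))))


# Hypothetical radiance ramp standing in for an edge or shading gradient.
ramp = np.linspace(0.1, 1.0, 10)

short_exposure = expose(ramp, exposure=0.5)   # stays below saturation
long_exposure = expose(ramp, exposure=20.0)   # every sample saturates

gradient_short = mean_abs_gradient(short_exposure)
gradient_long = mean_abs_gradient(long_exposure)
```

In this toy case the short exposure preserves the ramp, while the long exposure clips every sample to the same value, so the gradient that a detector would rely on vanishes.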

=== Sensor-Specific Performance ===

==== Automotive Sensors (AR0132AT & MT9V024) ====

* Much more stable confidence across exposures
* Optimized for HDR → recover spatial gradients even at low exposures
* AR0132AT performs exceptionally well in low light (0.1–2 ms)
* Both struggle in extreme overexposure (blind scenario)

[[File:MT9V024.png|150px]]

[[File:AR0123AT.png|150px]]

==== Smartphone Sensor (IMX363) ====

* Poor HDR behavior → saturates more easily
* Narrow field of view limits contextual detection
* Performs visibly worse than automotive sensors

[[File:IMX363.png|150px]]

==== OmniVision OV2312 Split Pixel ====

* LPD-HCG / LPD-LCG (large photodiode, high / low conversion gain) = strong low-light performance
* SPD (small photodiode) = handles bright/high-exposure regions
* Combined output = the most consistent performance across all exposure levels

[[File:OV2312.png|150px]]

== Conclusions ==

* YOLO is generally very effective across a wide HDR range.
* The main failure mode occurs in near-total darkness, where insufficient light prevents reliable detection.
* Improving hardware (sensor design, illumination, additional modalities like LiDAR or NIR) is more impactful than modifying YOLO itself.
* Automotive HDR sensors consistently outperform consumer smartphone sensors for AV tasks.
* Split-pixel architectures (e.g., OV2312) provide the most robust overall performance.

[[File:ResultsConfidence.png|500px]]

[[File:CombinedConfidenceIntervals.png|650px]]

[[File:SensorDiscussion.png|500px]]

== Appendix ==

Latest revision as of 20:29, 9 December 2025
