Simulating Vision through Retinal Prosthesis


Revision as of 23:28, 18 March 2014

Group Members: Alex Martinez


Introduction

Retinal degenerative diseases such as age-related macular degeneration and retinitis pigmentosa are among the leading causes of blindness in the developed world. These diseases lead to a loss of photoreceptors, while the inner retinal neurons survive to a large extent. Electrical stimulation of the surviving retinal neurons has been achieved either epiretinally, in which case the primary targets of stimulation are the retinal ganglion cells (RGCs), or subretinally, which bypasses the degenerated photoreceptors and uses neurons in the inner nuclear layer (bipolar, amacrine and horizontal cells) as primary targets. Other, fully optical approaches to restoring sight include optogenetics, in which retinal neurons are transfected to express light-sensitive Na and Cl channels; small-molecule photoswitches, which bind to K channels and make them light sensitive; and photovoltaic implants based on thin-film polymers.

Recent clinical studies with epiretinal and subretinal prosthetic systems have demonstrated improvements in visual function in certain tasks, with some patients able to identify letters with an equivalent visual acuity of up to 20/550. Despite this progress, vision at this resolution remains of limited practical use. Simulating vision through a retinal prosthesis, and processing the image in various ways, could reveal better methods of transferring information through the retina at this limited bandwidth. To aid the development of future image processing software, this group will simulate vision through the retinal prosthesis developed by the Palanker Lab.

What Has Been Done in the Past

Our group investigated prior simulations and patients' descriptions of their restored vision. We will look at the CNIB eye simulator to understand impaired sight, the Xense project to learn from prior attempts at simulating restored vision, and the Argus II Retinal Prosthesis System to better understand the frontier of restored eyesight and what patients say it looks like.

CNIB iPhone App

The EyeSimulator shows how common eye diseases like glaucoma, diabetic retinopathy, age-related macular degeneration and cataracts may affect vision. It is used as an educational tool and has been helpful in drawing public attention to these diseases.

The eye simulator demonstrates that, although vision is impaired, our familiarity with the objects we expect to see helps us interpret and ultimately perceive what is in our surroundings. Patients receiving the retinal prosthesis, having had eyesight before, will also have this intuition about which objects to expect, which helps in interpreting a scene. Intelligent image processing that accentuates features in an image could build on this intuition to enhance visual function in cases of low visual acuity.

Project Xense

Developed at the Entertainment Technology Center at Carnegie Mellon University, Project Xense is a collection of three museum exhibits about medical implant and prosthesis technology. One of these exhibits simulates vision through a retinal implant. Guests wear a head-mounted display equipped with a camera; the display shows video from the camera that has been processed in real time to resemble the low-resolution vision afforded by retinal implants.

When guests put on the head-mounted display, their field of vision will be blocked out and replaced by small screens that show processed live video from a camera mounted on the headset. The processed video reduces images into a black-and-white grid based on brightness; the resolution of the grid directly corresponds to different important historical and theoretical milestones in retinal implant technology. If guests press the control buttons, they can scan through these various resolutions to experience what it would be like to have the corresponding retinal implant.

While this simulation gives a good sense of vision through a retinal prosthesis, it is not flexible enough to capture variation between different models of retinal prostheses. For instance, this group will specifically model the retinal prosthesis developed by the Palanker Lab. The lab anticipates implanting 1000 pixels subretinally, in a concentric arrangement. Additionally, improvements in hardware should allow for both better localization and better control of the voltage applied by a pixel, resulting in a more stable observed pixel radius with greater dynamic range.

Argus II Retinal Prosthesis

With the Argus II system, a camera mounted on a pair of glasses captures images, and corresponding signals are fed wirelessly to a chip implanted near the retina. These signals are sent to an array of implanted electrodes that stimulate retinal cells, producing the perception of light in the patient’s field of view.

So far, the Argus II can restore only limited vision. One testimonial features a male patient from Manchester, England, who received the Argus II Retinal Prosthesis System in 2009. He describes the experience, recalling how at a fireworks display he saw "flashing lights and rockets going off" and how, upon going to a pub, he can "know where the people are. [He] can't make out the faces."[6] Although patient reports give us an understanding of recovered visual function, it is difficult to understand exactly what that restored eyesight is like.

What We Intend to Do

Given the sparsity of information from clinical trials, and the difficulty of inferring from testimonials what a patient actually sees, it is beyond the scope of this project to determine exactly what a patient sees through the retinal prosthesis developed by the Palanker Lab. However, we can note a relationship between pixel density and visual acuity, as well as between voltage control and localization and the perceived dynamic range and size of a pixel.

In light of this, our group will develop a simulation of restored vision with the adjustment of these two parameters as focal points. In addition, we will incorporate elements of computer vision, such as facial recognition, to enhance the information output by the simulation.

Background

Starting from a basic understanding of computer vision, I consulted with Professors Daniel Palanker and E.J. Chichilnisky on what features my restored vision simulator should incorporate. I also consulted with Ph.D. candidate Georges Goetz and Ariel Rokem Ph.D. on how I should build my simulator.

Methods

Parameter Adjustment

Adjustable parameters make the simulation flexible enough for experimentation. To simulate processing speeds slower than what our visual system can interpret, we allow for an adjustable frame rate. Other adjustable parameters include a color vs. black-and-white toggle, pixel density, pixel representation, the thresholding method employed, blurring, edge detection, and the symbolic representation of facial recognition.

Image Thresholding

We implemented various thresholding methods to accentuate important details in the field of vision. These methods are Adaptive Mean Thresholding, Adaptive Gaussian Thresholding, and Otsu Thresholding.

In adaptive mean thresholding, we calculate a separate threshold for each small region of the image, taken as the mean of the pixels in that region. We then compare each pixel against its local threshold: pixels above the threshold are represented as white, and pixels below it as black.

Adaptive Gaussian thresholding is similar to mean thresholding, except that the threshold value is a weighted sum of the neighboring pixels, with the weights determined by a Gaussian.
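The two adaptive methods differ only in the weights used for the local average, which a short NumPy sketch can make concrete. This is an illustration under stated assumptions, not the project's script: the function names, the `offset` parameter and the edge-replication padding are all hypothetical choices, and the kernel size is assumed to be odd.

```python
import numpy as np

def box_kernel(size):
    """Uniform weights: every neighbor counts equally (adaptive mean)."""
    return np.full((size, size), 1.0 / (size * size))

def gaussian_kernel(size, sigma):
    """Normalized 2-D Gaussian weights (adaptive Gaussian)."""
    ax = np.arange(size) - (size - 1) / 2.0
    g = np.exp(-(ax ** 2) / (2.0 * sigma ** 2))
    k = np.outer(g, g)
    return k / k.sum()

def local_weighted_mean(gray, kernel):
    """Weighted mean of each pixel's neighborhood (odd kernel size assumed)."""
    size = kernel.shape[0]
    pad = size // 2
    # Replicate border pixels so every pixel has a full neighborhood.
    padded = np.pad(gray.astype(np.float64), pad, mode="edge")
    h, w = gray.shape
    out = np.zeros((h, w), dtype=np.float64)
    for dy in range(size):
        for dx in range(size):
            out += kernel[dy, dx] * padded[dy:dy + h, dx:dx + w]
    return out

def adaptive_threshold(gray, kernel, offset=0.0):
    """White (255) where a pixel exceeds its local threshold, else black (0)."""
    threshold = local_weighted_mean(gray, kernel) - offset
    return np.where(gray > threshold, 255, 0).astype(np.uint8)
```

Passing `box_kernel(n)` gives the mean variant and `gaussian_kernel(n, sigma)` the Gaussian variant; everything else is shared.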

Otsu thresholding converts a grayscale image into a binary image by choosing the single global threshold that minimizes the within-class variance of the two resulting pixel classes. It works best when the image histogram is roughly bimodal. You could think of Otsu binarization as K-means clustering of pixel values with 2 clusters. This method works well at differentiating the foreground from the background.
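As a rough illustration of the threshold search, the sketch below scans all 256 candidate thresholds of an 8-bit image and keeps the one with the largest between-class variance, which is equivalent to minimizing the within-class variance. The function names are hypothetical, and the code is a NumPy stand-in rather than the OpenCV call used in the project.

```python
import numpy as np

def otsu_threshold(gray):
    """Return the threshold t that maximizes between-class variance.

    Pixels <= t form the dark class and pixels > t the bright class.
    Expects an 8-bit (uint8) grayscale image.
    """
    hist = np.bincount(gray.ravel(), minlength=256).astype(np.float64)
    prob = hist / hist.sum()
    levels = np.arange(256)

    w0 = np.cumsum(prob)                  # weight of the dark class
    w1 = 1.0 - w0                         # weight of the bright class
    cum_mean = np.cumsum(prob * levels)   # cumulative first moment
    total_mean = cum_mean[-1]

    # Only thresholds that leave pixels in both classes are candidates.
    valid = (w0 > 1e-12) & (w1 > 1e-12)
    between = np.zeros(256)
    between[valid] = ((total_mean * w0[valid] - cum_mean[valid]) ** 2
                      / (w0[valid] * w1[valid]))
    return int(np.argmax(between))

def otsu_binarize(gray):
    """Binarize with the Otsu threshold; returns (binary image, threshold)."""
    t = otsu_threshold(gray)
    return np.where(gray > t, 255, 0).astype(np.uint8), t
```

For an image whose pixels fall into two well-separated brightness groups, any threshold between the groups gives the same split, and the search returns the lowest such value.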

Facial Recognition

For facial recognition, I used the OpenCV training set and the cv2.CascadeClassifier class to determine whether or not there was a face on the screen. The algorithm cannot distinguish a particular face; it can only detect the presence of a face. With face detection in place, we could superimpose over the face an object that would be much easier to detect. In this case, we used meme faces as the objects to superimpose over the face.
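The superimposition step can be sketched independently of the detector. The example below assumes a face bounding box `(x, y, w, h)` has already been produced by some detector, and pastes a symbol image, rescaled by nearest-neighbor sampling, over that box. The helper names and the clipping behavior are illustrative assumptions; the frame and symbol are assumed to have the same number of channels.

```python
import numpy as np

def resize_nearest(img, new_h, new_w):
    """Nearest-neighbor resize of a 2-D or 3-D image array."""
    rows = np.arange(new_h) * img.shape[0] // new_h
    cols = np.arange(new_w) * img.shape[1] // new_w
    return img[rows[:, None], cols]

def overlay_symbol(frame, box, symbol):
    """Return a copy of frame with symbol pasted over box = (x, y, w, h).

    The box is clipped to the frame, so a face that is partly
    off-screen is covered only where it is visible.
    """
    x, y, w, h = box
    out = frame.copy()
    x0, y0 = max(x, 0), max(y, 0)
    x1 = min(x + w, frame.shape[1])
    y1 = min(y + h, frame.shape[0])
    if x0 >= x1 or y0 >= y1:
        return out  # box is empty or lies entirely outside the frame
    scaled = resize_nearest(symbol, h, w)
    out[y0:y1, x0:x1] = scaled[y0 - y:y1 - y, x0 - x:x1 - x]
    return out
```

Applying the overlay before pixellation means the symbol, rather than the face, is what gets reduced to the low-resolution grid.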

Pixellation

I employed 4 methods of pixellation:

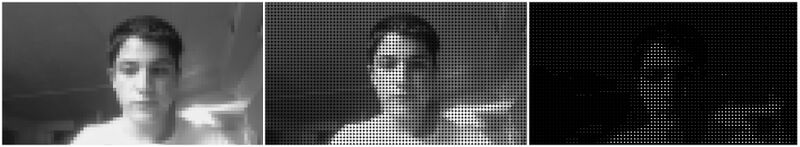

In the first, I shrink the source image by an adjustable constant and then enlarge the shrunken image back to the original size. The result is a computationally quick method of pixellation that doesn't perfectly constrain image coloring to well-defined pixel blocks. However, it is passable as a means of simulating the visual acuity of restored sight, and can be used to test the effectiveness of visual acuity with and without the assistance of computer vision algorithms.
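A minimal sketch of the shrink-then-enlarge idea follows, using nearest-neighbor sampling in NumPy. The interpolation used in the project is not stated, so nearest-neighbor is an assumption here; an interpolating resize would blur the block boundaries, which may be the imperfection described above. The function name and `factor` parameter are hypothetical.

```python
import numpy as np

def pixelate_by_resampling(img, factor):
    """Shrink img by `factor`, then enlarge it back to its original size."""
    h, w = img.shape[:2]
    small_h, small_w = max(h // factor, 1), max(w // factor, 1)

    # Shrink: keep one sample per block (nearest-neighbor).
    rows = np.arange(small_h) * h // small_h
    cols = np.arange(small_w) * w // small_w
    small = img[rows[:, None], cols]

    # Enlarge: repeat each retained sample over its block.
    back_rows = np.arange(h) * small_h // h
    back_cols = np.arange(w) * small_w // w
    return small[back_rows[:, None], back_cols]
```

Because only indexing is involved, the cost is essentially independent of the block size, which is consistent with this being the quick option.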

In the second, I iterate through an image via blocks of an adjustable size. Within each block, I take the mean intensity value of the block and assign that value to each pixel in the block. This method is computationally more expensive, although it does allow for sharply defined pixel blocks.
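The block-averaging method described above can be sketched as follows for a grayscale image. The function name is hypothetical, and rounding the mean to the nearest integer is an assumption about a detail the text does not specify.

```python
import numpy as np

def pixelate_block_mean(gray, block):
    """Replace each block x block tile with its mean intensity."""
    out = np.empty_like(gray)
    h, w = gray.shape
    for y in range(0, h, block):
        for x in range(0, w, block):
            # Tiles at the right and bottom edges may be smaller than `block`.
            tile = gray[y:y + block, x:x + block]
            out[y:y + block, x:x + block] = int(round(tile.mean()))
    return out
```

The explicit double loop mirrors the description and makes the extra cost relative to simple resampling visible: every source pixel is read once to form the means.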

In the third, I follow the same procedure for acquiring pixel values as in the second method. However, this time I apply the pixel values to an array of dots. This is an effort to account for how, in vivo, the voltage applied by a microarray pixel is more likely to spread radially than to conform to a square.

In the final implementation, I use the mean values determined in the second method to instead dictate the radius of a white circle. This resembles the method demonstrated by Project Xense, which positively correlates pixel intensity with a microchip pixel's range of stimulation.
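The two dot-based renderings share the same block means and differ only in what the mean controls: the gray value of a fixed-size dot, or the radius of a white dot. The sketch below illustrates both under assumed details (dot centered in its cell, maximum radius of half a cell, radius scaling linearly with intensity); the function names and the `mode` argument are hypothetical.

```python
import numpy as np

def block_means(gray, block):
    """Mean intensity of each full block x block tile (edges are trimmed)."""
    rows, cols = gray.shape[0] // block, gray.shape[1] // block
    trimmed = gray[:rows * block, :cols * block].astype(np.float64)
    return trimmed.reshape(rows, block, cols, block).mean(axis=(1, 3))

def render_dots(gray, block, mode="intensity"):
    """Draw one dot per tile on a black background.

    mode="intensity": fixed radius, dot gray level equals the tile mean.
    mode="radius":    white dot whose radius grows with the tile mean.
    """
    means = block_means(gray, block)
    rows, cols = means.shape
    out = np.zeros((rows * block, cols * block), dtype=np.uint8)

    # Distance of every pixel in a tile from the tile center.
    yy, xx = np.mgrid[0:block, 0:block]
    center = (block - 1) / 2.0
    dist = np.sqrt((yy - center) ** 2 + (xx - center) ** 2)
    max_radius = block / 2.0

    for r in range(rows):
        for c in range(cols):
            m = means[r, c]
            if mode == "intensity":
                radius, value = max_radius, int(round(m))
            else:
                radius, value = max_radius * m / 255.0, 255
            tile = out[r * block:(r + 1) * block, c * block:(c + 1) * block]
            tile[dist <= radius] = value  # writes through to `out`
    return out
```

In radius mode a dark tile produces no dot at all, so the output conveys brightness purely through how much of each cell is lit.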

Noise Suppression

Noise can arise from a number of sources, ranging from Gaussian photon noise to imperfections in the interface between the pixel array and retinal cells. Our concern lies mainly with high-frequency noise. Although the final image sent through the retinal prosthesis cannot display high-frequency signals, this noise can affect the output of our image processing steps, such as thresholding, and so manifest itself in the final, low-resolution image. To combat this, we employ classical convolution techniques for removing high-frequency noise, such as Gaussian blurring and assigning each pixel the average of its neighbors. Gaussian blurring was chosen as the main method of removing high-frequency noise in order to improve thresholding, with further blurring through averaging as an adjustable parameter.
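Both blurring options are convolutions with a normalized kernel and differ only in the weights. The sketch below implements them as a separable filter in NumPy, applying the same 1-D weights along rows and then columns. Names, the edge-replication padding and the odd kernel length are assumptions made for illustration.

```python
import numpy as np

def gaussian_weights(size, sigma):
    """Normalized 1-D Gaussian weights; `size` is assumed to be odd."""
    ax = np.arange(size) - (size - 1) / 2.0
    g = np.exp(-(ax ** 2) / (2.0 * sigma ** 2))
    return g / g.sum()

def blur_separable(gray, weights):
    """Blur with a separable kernel: filter along rows, then along columns."""
    pad = len(weights) // 2
    h, w = gray.shape
    img = gray.astype(np.float64)

    # Horizontal pass, replicating edge pixels at the borders.
    padded = np.pad(img, ((0, 0), (pad, pad)), mode="edge")
    img = sum(wt * padded[:, i:i + w] for i, wt in enumerate(weights))

    # Vertical pass on the horizontally blurred result.
    padded = np.pad(img, ((pad, pad), (0, 0)), mode="edge")
    img = sum(wt * padded[i:i + h, :] for i, wt in enumerate(weights))
    return img
```

Calling `blur_separable(gray, gaussian_weights(5, 1.0))` gives a Gaussian blur, while `blur_separable(gray, np.full(3, 1.0 / 3))` gives the neighbor-averaging variant. The result is a float array, so it would need rounding back to 8-bit before thresholding.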

Results

Our group met with some success in simulating restored sight, as well as in using computer vision to improve the amount of information conveyed by the simulated image. However, both tasks proved to be much more complex than originally anticipated, and are beyond the scope of this project's rudimentary simulation.

Simulating Restored Vision

The main features I found I needed to incorporate into my simulation were: encoding the dynamic range of the voltages applied by individual pixels, varying resolution in response to pixel density on the retinal prosthesis, and removing color from images (as it's doubtful our prosthesis will be able to transmit color information).

These specifications were met. A user of the simulator has control of pixel density, color, and how dynamic range is expressed. To elaborate, dynamic range can be expressed either by pixel color or by pixel radius, and it can span anything from the entire spectrum of grays down to just two colors, as demonstrated by Otsu thresholding. If the user chooses to pixellate the image, there are three options from which to choose: square, dot, and radial.
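One simple way to explore intermediate dynamic ranges, between full grayscale and a two-level image, is to quantize the gray values to a fixed number of evenly spaced levels. The sketch below is an assumption about how such a control could work, not a description of the simulator's own code; the function name and `levels` parameter are hypothetical.

```python
import numpy as np

def quantize_gray(gray, levels):
    """Reduce an 8-bit image to `levels` evenly spaced grays spanning 0..255."""
    if levels < 2:
        raise ValueError("levels must be at least 2")
    # Index of the bin each pixel falls into: 0 .. levels - 1.
    idx = np.floor(gray.astype(np.float64) * levels / 256.0)
    # Map bin indices back onto the full 0..255 output range.
    return np.round(idx * 255.0 / (levels - 1)).astype(np.uint8)
```

With `levels=2` this reduces to a fixed threshold at mid-gray, whereas Otsu binarization picks the threshold from the image histogram.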

There was another specification that needed to be met as well. The camera feed gives too large an angle of view. After consulting with Professor Palanker, I sought to simulate an angle of view of approximately 15 degrees, in keeping with the anticipated angle of view for restored sight. The simulator can therefore toggle on a pixel mask that restricts the output image to a 15 degree angle of view.
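To size such a mask, the 15 degree target has to be converted into a pixel radius, which depends on the camera's own angle of view. The sketch below uses a simple pinhole-camera model and a circular mask; the camera's horizontal field of view is not given in this report, so `camera_fov_deg` is a placeholder the user must supply, and the function names and circular shape are assumptions.

```python
import numpy as np

def fov_mask(height, width, camera_fov_deg, target_fov_deg=15.0):
    """Boolean mask that is True inside a centered circle spanning
    target_fov_deg, given the camera's horizontal field of view."""
    # Pinhole model: pixel offset from the center = focal_px * tan(angle).
    focal_px = (width / 2.0) / np.tan(np.radians(camera_fov_deg / 2.0))
    radius = focal_px * np.tan(np.radians(target_fov_deg / 2.0))
    yy, xx = np.mgrid[0:height, 0:width]
    cy, cx = (height - 1) / 2.0, (width - 1) / 2.0
    return (yy - cy) ** 2 + (xx - cx) ** 2 <= radius ** 2

def apply_fov(gray, camera_fov_deg, target_fov_deg=15.0):
    """Black out everything outside the simulated angle of view (grayscale)."""
    mask = fov_mask(gray.shape[0], gray.shape[1], camera_fov_deg, target_fov_deg)
    return np.where(mask, gray, 0).astype(gray.dtype)
```

For example, with a hypothetical 60 degree camera and a 640 pixel wide frame, the 15 degree circle has a radius of roughly 73 pixels, so most of the frame is masked out.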

Computer Vision Assistance

I employed a moderate number of functions to better convey important information in the image, and they helped to varying degrees.

Utilizing face detection, I was able to replace faces with symbols that are generally easier to recognize as a face through low-resolution vision. The caveat is that some threshold and edge detection filters compromised the effectiveness of this face substitution.

Regarding edge detection, I found that only Canny edge detection seemed to be useful, at least in theory. My other two high-pass filter implementations, the Sobel and Laplacian derivatives, failed to distinguish edges from noise sharply enough for the edges to be recognized after later-stage pixellation, once the low-frequency content is lost. That said, Canny edge detection still outputs an image that is difficult to interpret at large enough pixel sizes, and fails to communicate edge information once the image is pixellated. In practice, the implementation of edge detection was not successful.

Regarding thresholding, I found Otsu thresholding to be more effective at communicating information than the other two threshold implementations I use: Gaussian and mean adaptive thresholding. At very large pixel sizes, the image is still able to maintain the 2 contiguous shapes generated by the Otsu method. In contexts where key information, such as the presence of a face or large object, is held in the foreground, Otsu binarization does an adequate job of still relaying that information at very low resolutions. While a high dynamic range grayscale will generally communicate information better than a low dynamic range, the prosthesis will have difficulty meeting the level of dynamic range displayed in the left image. In cases of very limited dynamic range, Otsu binarization is an adequate means of communicating information.

Conclusions and Future Work

This experiment in simulating restored vision yielded some insights into the difficulty of conveying important information to the wearer, as well as the difficulty of developing an adequate simulator. First off, the limited information that patient testimonials provide about what restored vision looks like made it difficult to have confidence in any particular simulation strategy. The dot array and pixellation strategies I did employ, however, did convey the difficulty of perceiving objects at low resolutions. In light of this, the edge detection, thresholding, and face detection strategies I employed, while arguably improving perception, left a lot of room for improvement, and justify the need for more advanced computer vision algorithms to improve the functionality of restored vision.

In the future, given a better understanding of what restored vision looks like, we could develop a more accurate model of what patients actually see. Once we have a better base of pixels from which to work, we could add more sophisticated computer vision algorithms to incorporate features such as object detection and edge enhancement. Additionally, having a smart camera determine from context what information to send to the patient, such as deciding to emphasize a bathroom sign if one is caught in the patient's visual field, could do wonders in enhancing the functionality of restored vision. Hopefully, this simulation can be used to better illustrate the quality of vision patients have to work with, and can be used as a tool in developing computer vision algorithms that can improve the functionality of this retinal prosthesis and future methods of restoring eyesight.

References - Resources and related work

References

[1] "Project Xense Retinal Implant Simulation" etc.cmu.edu. Carnegie Mellon University, 2012. Web. 14 Mar 2014. <http://www.etc.cmu.edu/projects/tatrc>.

[2] "The Argus® II Retinal Prosthesis System" 2-sight.eu/en/product-en. The Argus II Retinal Prosthesis System, 2014. Web. 15 Mar 2014. <http://2-sight.eu/en/about-us-en>

[3] "Holographic display system for restoration of sight to the blind" G A Goetz et al 2013 J. Neural Eng. 10 056021

[3] "Introduction to OpenCV" opencv-python-tutroals.readthedocs.org. OpenCV-Python Tutorials, 18 Feb 2014. Web. 15 Mar 2014. <http://opencv-python-tutroals.readthedocs.org/en/latest/py_tutorials/py_setup/py_table_of_contents_setup/py_table_of_contents_setup.html>

[4] "Eye Simulator" cnib.ca. Canadian National Institute for the Blind, 2014. Web. 26 Feb 2014 <http://www.cnib.ca/en/about/Pages/default.aspx>

[5] "Photo to colored dot patterns with OpenCV" opencv-code.com OpenCV Code, Feb 13, 2013. Web. 3 Mar 2014 <https://opencv-code.com/tutorials/photo-to-colored-dot-patterns-with-opencv>.

[6] "Testimonial for Argus II Retinal Prosthesis System" ophthalmologytimes.modernmedicine.com. Ophthalmology Times, 28 Feb 2013. Web. 28 Feb 2014.<http://ophthalmologytimes.modernmedicine.com/ophthalmologytimes/news/user-defined-tags/paulo-stanga/testimonial-argus-ii-retinal-prosthesis-syste>

Software

Image Systems Engineering Toolbox http://imageval.com/

Python OpenCV http://opencv.org/

Appendix I - Code and Data

In the belief that the techniques used may be illustrated best by example, the Python code used to perform the image processing is available below.

Code

All code was written in Python 2.7.5 on Mac OS X Mavericks. External dependencies include OpenCV and NumPy.

File:SimulatedVisionPythonScript.zip

Additionally, to ease the installation of OpenCV, I've attached a guide for installing OpenCV on Mavericks.

File:OpenCVInstallationGuide.pdf

Presentation

This project was given as a 10-minute presentation to the PSYCH221 Winter 2014 class at Stanford. The presentation files used are linked below.