DXMPsych2012Project

Revision as of 19:10, 19 March 2012

Back to Psych 221 Projects 2012

Introduction

With the proliferation of digital cameras and the advancement of processors, software for image processing and image perception has become increasingly important and widely used. In applications such as face localization, human detection and pose classification, skin detection is usually the first segmentation step. Skin detection using color information has long been studied. Color-based skin detection is fast and simple to implement. However, it usually suffers from a high false detection rate due to metamerism: non-skin surfaces can have the same color-space values as skin. This project evaluates the performance of skin detection in different color spaces and asks whether one color space is best for skin detection. In this project, I will build skin classifiers in RGB, YCbCr, HSV, xyY and CIELAB and evaluate their performance by their false detection and false dismissal rates. In addition, I will construct hybrid skin detectors that combine information from multiple color spaces.
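The two error measures can be made concrete with a small sketch. It assumes the definitions common in the skin-detection literature, which the text does not spell out: the false detection rate is the fraction of ground-truth non-skin pixels labelled as skin, and the false dismissal rate is the fraction of ground-truth skin pixels labelled as non-skin. Masks are flat sequences of booleans here, and the function name `error_rates` is hypothetical.

```python
from typing import Sequence, Tuple


def error_rates(predicted: Sequence[bool], truth: Sequence[bool]) -> Tuple[float, float]:
    """Return (false_detection_rate, false_dismissal_rate) for two binary masks.

    Assumed definitions:
      false detection = non-skin pixels classified as skin / all non-skin pixels
      false dismissal = skin pixels classified as non-skin / all skin pixels
    """
    if len(predicted) != len(truth):
        raise ValueError("masks must have the same number of pixels")
    false_det = sum(p and not t for p, t in zip(predicted, truth))
    false_dis = sum(t and not p for p, t in zip(predicted, truth))
    n_nonskin = sum(not t for t in truth)
    n_skin = sum(truth)
    # Guard against an empty class so both rates stay defined.
    fdr = false_det / n_nonskin if n_nonskin else 0.0
    fdis = false_dis / n_skin if n_skin else 0.0
    return fdr, fdis


# Usage: 4 skin and 4 non-skin pixels, one error of each kind.
truth = [True, True, True, True, False, False, False, False]
pred = [True, True, True, False, True, False, False, False]
print(error_rates(pred, truth))  # (0.25, 0.25)
```

Reporting both numbers matters because a detector can trivially drive one of them to zero (label everything skin, or nothing) at the expense of the other.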

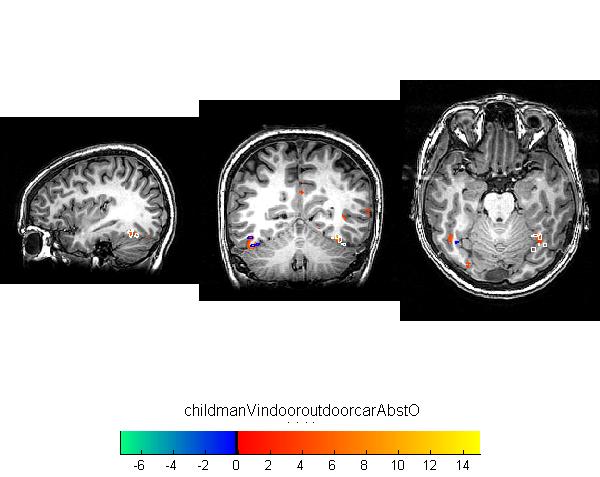

Below is another example of a retinotopic map in a different subject.

Figure 2

Once you upload the images, they look like this. Note that you can control many features of the images, such as whether to show a thumbnail and the display resolution.

Figure 3

Methods

Color Space

RGB

Retinotopic maps were obtained in 5 subjects using population receptive field mapping methods (Dumoulin and Wandell, 2008). These data were collected for another research project in the Wandell lab. We re-analyzed the data for this project, as described below.
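For the RGB space, a common baseline is a fixed-threshold rule on 8-bit values. The sketch uses the "uniform daylight" thresholds often attributed to Kovač, Peer and Solina (2003); it is an illustration, not necessarily the classifier this project builds, and the function name is hypothetical.

```python
def is_skin_rgb(r: int, g: int, b: int) -> bool:
    """Fixed-threshold RGB skin rule for 8-bit channel values.

    Thresholds as commonly quoted for uniform daylight illumination;
    treat them as an illustrative assumption.
    """
    return (
        r > 95 and g > 40 and b > 20
        and max(r, g, b) - min(r, g, b) > 15  # enough colour spread
        and abs(r - g) > 15
        and r > g and r > b                   # red must dominate
    )


print(is_skin_rgb(200, 150, 120))  # True
print(is_skin_rgb(0, 0, 255))      # False
```

Because every test mixes brightness and chromaticity, such a rule is sensitive to illumination changes, which is one motivation for comparing spaces that separate luminance from chrominance.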

YCbCr

YCbCr method
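A minimal sketch of a YCbCr classifier, assuming the full-range ITU-R BT.601 (JPEG) conversion and the fixed chrominance box 77 ≤ Cb ≤ 127, 133 ≤ Cr ≤ 173 reported by Chai and Ngan [1]. The page does not state which conversion or thresholds this project uses, so both are assumptions; the function names are hypothetical.

```python
from typing import Tuple


def rgb_to_ycbcr(r: float, g: float, b: float) -> Tuple[float, float, float]:
    """Full-range BT.601 (JPEG/JFIF) conversion for 8-bit RGB values."""
    y = 0.299 * r + 0.587 * g + 0.114 * b
    cb = 128 - 0.168736 * r - 0.331264 * g + 0.5 * b
    cr = 128 + 0.5 * r - 0.418688 * g - 0.081312 * b
    return y, cb, cr


def is_skin_ycbcr(r: float, g: float, b: float) -> bool:
    """Skin if (Cb, Cr) lies inside the assumed box; luma Y is ignored."""
    _, cb, cr = rgb_to_ycbcr(r, g, b)
    return 77 <= cb <= 127 and 133 <= cr <= 173


print(is_skin_ycbcr(200, 150, 120))  # True  (Cb ≈ 104.6, Cr ≈ 155.4)
print(is_skin_ycbcr(0, 0, 255))      # False (Cb = 255.5)
```

Ignoring Y is the design choice that makes this space attractive: the decision depends only on chrominance, so moderate brightness changes move a pixel along the Y axis without moving it out of the box.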

HSV

HSV method
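An HSV classifier can be sketched with the standard-library `colorsys.rgb_to_hsv`, which takes channels in [0, 1] and returns hue as a fraction of a full turn. The hue and saturation thresholds below are illustrative assumptions, not values taken from this project.

```python
import colorsys


def is_skin_hsv(r: int, g: int, b: int,
                hue_max_deg: float = 50.0,
                sat_range: tuple = (0.23, 0.68)) -> bool:
    """Threshold hue and saturation of an 8-bit RGB pixel; value is ignored.

    The default thresholds are placeholders for illustration only.
    """
    h, s, _v = colorsys.rgb_to_hsv(r / 255.0, g / 255.0, b / 255.0)
    hue_deg = h * 360.0  # colorsys returns hue in [0, 1)
    return hue_deg <= hue_max_deg and sat_range[0] <= s <= sat_range[1]


print(is_skin_hsv(200, 150, 120))  # True  (hue 22.5°, saturation 0.4)
print(is_skin_hsv(128, 128, 128))  # False (grey has zero saturation)
```

Hue is circular, so reddish skin tones just below 0° (near 360°) are missed by this simple one-sided test; a fuller version would wrap the hue interval.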

xyY

xyY method
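Working in xyY requires going through CIE XYZ. The sketch assumes camera values are sRGB-encoded with a D65 white point, which the page does not state; a skin model in this space would typically use only the chromaticity pair (x, y) and discard the luminance Y.

```python
from typing import Tuple


def srgb_to_linear(c: float) -> float:
    """Undo the sRGB transfer curve for a channel value in [0, 1]."""
    return c / 12.92 if c <= 0.04045 else ((c + 0.055) / 1.055) ** 2.4


def rgb_to_xyY(r: int, g: int, b: int) -> Tuple[float, float, float]:
    """8-bit sRGB -> CIE xyY, assuming sRGB primaries and D65 white."""
    rl, gl, bl = (srgb_to_linear(v / 255.0) for v in (r, g, b))
    X = 0.4124 * rl + 0.3576 * gl + 0.1805 * bl
    Y = 0.2126 * rl + 0.7152 * gl + 0.0722 * bl
    Z = 0.0193 * rl + 0.1192 * gl + 0.9505 * bl
    total = X + Y + Z
    if total == 0.0:
        # Chromaticity is undefined for black; return zeros by convention.
        return 0.0, 0.0, 0.0
    return X / total, Y / total, Y


print(rgb_to_xyY(255, 255, 255))  # ≈ (0.3127, 0.3290, 1.0), the D65 white point
```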

CIELAB

LAB method
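The CIELAB conversion follows the standard CIE formulas. This sketch again assumes sRGB input and uses the XYZ of sRGB white as the reference white, so white maps exactly to L* = 100, a* = b* = 0; for skin modelling the (a*, b*) plane would play the role that (Cb, Cr) plays in YCbCr.

```python
from typing import Tuple


def _srgb_to_xyz(r: int, g: int, b: int) -> Tuple[float, float, float]:
    """8-bit sRGB -> CIE XYZ (sRGB primaries, D65 white)."""
    def lin(c: float) -> float:
        return c / 12.92 if c <= 0.04045 else ((c + 0.055) / 1.055) ** 2.4
    rl, gl, bl = (lin(v / 255.0) for v in (r, g, b))
    return (0.4124 * rl + 0.3576 * gl + 0.1805 * bl,
            0.2126 * rl + 0.7152 * gl + 0.0722 * bl,
            0.0193 * rl + 0.1192 * gl + 0.9505 * bl)


WHITE = _srgb_to_xyz(255, 255, 255)  # reference white (Xn, Yn, Zn)
DELTA = 6 / 29


def _f(t: float) -> float:
    """CIELAB companding: cube root, with a linear segment near zero."""
    return t ** (1 / 3) if t > DELTA ** 3 else t / (3 * DELTA ** 2) + 4 / 29


def rgb_to_lab(r: int, g: int, b: int) -> Tuple[float, float, float]:
    """8-bit sRGB -> (L*, a*, b*)."""
    X, Y, Z = _srgb_to_xyz(r, g, b)
    fx, fy, fz = (_f(v / w) for v, w in zip((X, Y, Z), WHITE))
    L = 116 * fy - 16
    a_star = 500 * (fx - fy)
    b_star = 200 * (fy - fz)
    return L, a_star, b_star


print(rgb_to_lab(255, 255, 255))  # (100.0, 0.0, 0.0)
```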

Classifier

How to build a classifier
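The classifier is not described yet. A minimal sketch of one common approach is a histogram (lookup-table) classifier in the spirit of Jones and Rehg: estimate P(c | skin) and P(c | non-skin) from labelled pixels and label a pixel as skin when their ratio exceeds a threshold. The class name, bin count, smoothing constant and default threshold are assumptions for illustration; features could be, for example, (Cb, Cr) pairs in 0 to 255.

```python
from collections import Counter
from typing import Iterable, Sequence, Tuple


class HistogramSkinClassifier:
    """Likelihood-ratio skin classifier over quantized colour features (sketch)."""

    def __init__(self, bins: int = 32, value_range: float = 256.0,
                 threshold: float = 1.0, alpha: float = 1.0):
        self.bins = bins
        self.bin_width = value_range / bins
        self.threshold = threshold  # cut-off on P(c|skin) / P(c|non-skin)
        self.alpha = alpha          # additive smoothing for empty bins (assumption)
        self.skin: Counter = Counter()
        self.nonskin: Counter = Counter()
        self.n_skin = 0
        self.n_nonskin = 0
        self.n_cells = 1

    def _key(self, feature: Sequence[float]) -> Tuple[int, ...]:
        # Clamp so a value equal to value_range still lands in the last bin.
        return tuple(min(int(v // self.bin_width), self.bins - 1) for v in feature)

    def fit(self, features: Iterable[Sequence[float]], labels: Iterable[bool]):
        """Accumulate skin and non-skin histograms from labelled pixels."""
        for feature, is_skin in zip(features, labels):
            key = self._key(feature)
            self.n_cells = self.bins ** len(key)
            if is_skin:
                self.skin[key] += 1
                self.n_skin += 1
            else:
                self.nonskin[key] += 1
                self.n_nonskin += 1
        return self

    def _prob(self, counts: Counter, total: int, key: Tuple[int, ...]) -> float:
        return (counts[key] + self.alpha) / (total + self.alpha * self.n_cells)

    def likelihood_ratio(self, feature: Sequence[float]) -> float:
        key = self._key(feature)
        return (self._prob(self.skin, self.n_skin, key)
                / self._prob(self.nonskin, self.n_nonskin, key))

    def predict(self, feature: Sequence[float]) -> bool:
        return self.likelihood_ratio(feature) > self.threshold


clf = HistogramSkinClassifier(bins=32)
clf.fit([(104, 152), (106, 154), (200, 50), (202, 52)],
        [True, True, False, False])
print(clf.predict((105, 153)))  # True
print(clf.predict((201, 51)))   # False
```

Raising `threshold` lowers the false detection rate at the cost of more false dismissals, so sweeping it traces an ROC curve; comparing those curves is one way to rank color spaces with the same classifier.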

Proposed method

What is the proposed method
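The page does not yet say how the hybrid detectors combine color spaces. One simple possibility, sketched here purely as an assumption, is to fuse per-color-space binary decisions with an AND, OR or majority rule; `combine` and the toy detectors are hypothetical stand-ins for real classifiers.

```python
from typing import Callable, Sequence

# A per-pixel detector maps an 8-bit (R, G, B) triple to a skin decision.
Detector = Callable[[int, int, int], bool]


def combine(detectors: Sequence[Detector], rule: str = "and") -> Detector:
    """Fuse several per-colour-space detectors into one hybrid detector.

    rule="and":      skin only if every detector fires (cannot add false detections)
    rule="or":       skin if any detector fires (cannot add false dismissals)
    rule="majority": skin if more than half of the detectors fire
    """
    if rule not in ("and", "or", "majority"):
        raise ValueError(f"unknown rule: {rule}")

    def hybrid(r: int, g: int, b: int) -> bool:
        votes = [d(r, g, b) for d in detectors]
        if rule == "and":
            return all(votes)
        if rule == "or":
            return any(votes)
        return sum(votes) > len(votes) / 2

    return hybrid


# Toy detectors standing in for real per-colour-space classifiers (hypothetical).
toy = [lambda r, g, b: r > g, lambda r, g, b: r > b, lambda r, g, b: r + g + b > 300]
strict = combine(toy, rule="and")
vote = combine(toy, rule="majority")
print(strict(100, 50, 50), vote(100, 50, 50))  # False True
```

AND fusion can only remove skin labels relative to each member, and OR can only add them, so the two rules bracket the trade-off between false detection and false dismissal.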

Results - What you found

Results in different color spaces

Some text. Some analysis. Some figures. Maybe some equations.

Results with combined method

Some text. Some analysis. Some figures. Maybe some equations.

Equations

If you want to use equations, you can use the same formats that are used on Wikipedia.

See the Wikimedia help page on formulas.

This example of equation use is copied and pasted from Wikipedia's article on the DFT.

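The sequence of $N$ complex numbers $x_0, \ldots, x_{N-1}$ is transformed into the sequence of $N$ complex numbers $X_0, \ldots, X_{N-1}$ by the DFT according to the formula:

$$X_k = \sum_{n=0}^{N-1} x_n \, e^{-\frac{2\pi i}{N} k n}, \qquad k = 0, \ldots, N-1,$$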

where $i$ is the imaginary unit and $e^{2\pi i/N}$ is a primitive $N$th root of unity. (This expression can also be written in terms of a DFT matrix; when scaled appropriately it becomes a unitary matrix, and the $X_k$ can thus be viewed as coefficients of $x$ in an orthonormal basis.)

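The transform is sometimes denoted by the symbol $\mathcal{F}$, as in $\mathbf{X} = \mathcal{F}\{\mathbf{x}\}$ or $\mathcal{F}(\mathbf{x})$ or $\mathcal{F}\mathbf{x}$.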

The inverse discrete Fourier transform (IDFT) is given by

$$x_n = \frac{1}{N} \sum_{k=0}^{N-1} X_k \, e^{\frac{2\pi i}{N} k n}, \qquad n = 0, \ldots, N-1.$$
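The forward transform $X_k = \sum_n x_n e^{-2\pi i k n/N}$ and its inverse (with the $1/N$ factor and the opposite sign in the exponent) can be checked numerically with a direct $O(N^2)$ sketch. This is not an FFT; `numpy.fft.fft` and `numpy.fft.ifft` use the same sign and normalization convention by default and are the practical choice for real data.

```python
import cmath
from typing import List, Sequence


def dft(x: Sequence[complex]) -> List[complex]:
    """Direct DFT: X_k = sum_n x_n * exp(-2*pi*i*k*n/N)."""
    n_pts = len(x)
    return [sum(x[n] * cmath.exp(-2j * cmath.pi * k * n / n_pts) for n in range(n_pts))
            for k in range(n_pts)]


def idft(X: Sequence[complex]) -> List[complex]:
    """Inverse DFT: x_n = (1/N) * sum_k X_k * exp(+2*pi*i*k*n/N)."""
    n_pts = len(X)
    return [sum(X[k] * cmath.exp(2j * cmath.pi * k * n / n_pts) for k in range(n_pts)) / n_pts
            for n in range(n_pts)]


X = dft([1, 2, 3, 4])  # ≈ [10, -2+2j, -2, -2-2j] up to rounding error
x_back = idft(X)       # ≈ [1, 2, 3, 4]: the inverse undoes the forward transform
```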

Conclusions

Here is where you say what your results mean.

References

[1] Chai, D. and Ngan, K. N., “Face Segmentation Using Skin-Color Map in Videophone Applications”, IEEE Transactions on Circuits and Systems for Video Technology, Vol. 9, pp. 551-564 (1999).

[2] Crowley, J. L. and Coutaz, J., “Vision for Man-Machine Interaction”, Robotics and Autonomous Systems, Vol. 19, pp. 347-358 (1997).

[3] Kjeldsen, R. and Kender, J., “Finding Skin in Color Images”, Proceedings of the Second International Conference on Automatic Face and Gesture Recognition, pp. 312-317 (1996).

[4] Singh, S. K., Chauhan, D. S., Vatsa, M. and Singh, R., “A Robust Skin Color Based Face Detection Algorithm”, Tamkang Journal of Science and Engineering, Vol. 6, No. 4, pp. 227-234 (2003).

Appendix I - Code and Data

Code

Data

Appendix II - Work partition (if a group project)

Brian and Bob gave the lectures. Jon mucked around on the wiki.