Chris Baldassano

imported>Psych204B No edit summary |

imported>Psych204B No edit summary |

||

| Line 14: | Line 14: | ||

= Methods = | = Methods = | ||

=== Data Acquisition === | === Data Acquisition === | ||

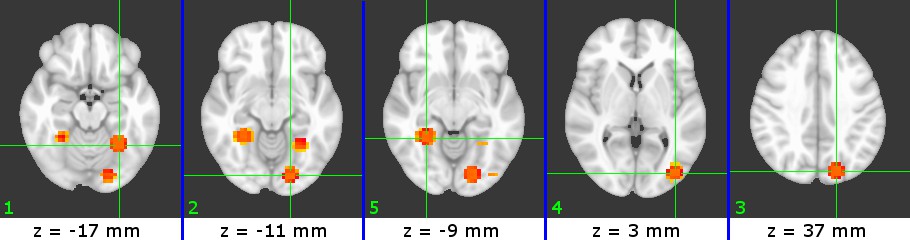

[[File:NaturalScenes.jpg |thumb|Figure 1]] | |||

The data used is from the experiment described in [1]. Subjects passively viewed color images of six types of natural scenes: beaches, buildings, forests, highways, industry, and mountains). The stimuli were presented in a block design, with each block composed of 10 images from the same category displayed for 1.6 seconds each (8 brain acquisitions were made during each block). The subject viewed 12 runs of images, with each run composed of six blocks separated by 12 second fixation periods. (The original study also collected 12 runs with inverted images, but this data was not used for the current experiment). | The data used is from the experiment described in [1]. Subjects passively viewed color images of six types of natural scenes: beaches, buildings, forests, highways, industry, and mountains). The stimuli were presented in a block design, with each block composed of 10 images from the same category displayed for 1.6 seconds each (8 brain acquisitions were made during each block). The subject viewed 12 runs of images, with each run composed of six blocks separated by 12 second fixation periods. (The original study also collected 12 runs with inverted images, but this data was not used for the current experiment). | ||

Example scene images are shown in Figure 1. | |||

=== Data Analysis === | === Data Analysis === | ||

I obtained the | [I obtained data that had already been preprocessed using AFNI to provide motion correction and to subtract out the temporal mean of each voxel] | ||

First, a spherical searchlight of 81 voxels was centered at every voxel in the brain. Searchlights that did not contain 81 valid voxels (due to being near the edge of the brain, for example) were discarded. An SVM was then trained for each searchlight using the MATLAB implementation of LIBSVM [2]. Each of the 12 runs was held out for cross-validation, one at a time; the accuracy of each SVM was calculated as the average accuracy over these 12 cases. This step was very computationally intensive, requiring approximately 48 hours on a 2.7GHz machine. Searchlight ROIs were then ranked by accuracy. | |||

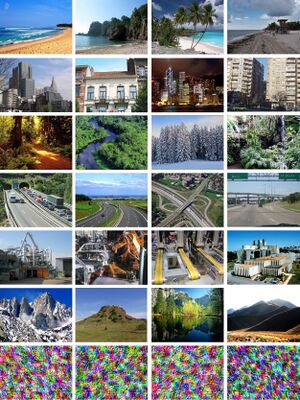

A simple form of nonmaximum suppression was used. Any searchlight that overlapped a searchlight with higher accuracy was discarded. The top five ROIs that remained were then selected. | |||

In order to interpret these ROIs, it was necessary to match them to anatomical images. Although no anatomical scans were done on the subjects in this study, the distortion matrix mapping the volumes to the MNI template has been calculated. In order to view the selected ROIs, the MATLAB searchlights were exported into AFNI format. FSL was then used to warp the ROIs according to a linear distortion matrix. The ROIs were overlayed on top of the MNI anatomical images using AFNI. | |||

[[File:Connectivitymodel.jpg |thumb|Figure 2]] | |||

Finally, a connectivity analysis was conducted based on the graphical model in [3], as shown in Figure 2. The probability estimates output from each SVM are the X variables. Rather than having each ROI "vote" independently to predict the scene type, the predictions from multiple ROIs are allowed to interact in the middle layer. If a connection between two of the Y variables improves classification performance, this indicates that the connected ROIs have complementary information about the scene. Connectivity is learned by comparing classification performance with all the Ys unconnected and with one pair of Ys connected. All of the pairwise connections that improve performance are then added to the final connectivity graph. Although this procedure is not guaranteed to find the minimal connectivity graph, it decreases the running time from <math>O(2^{n^2})</math> to <math>O(n^2)</math> | |||

= Results - What you found = | = Results - What you found = | ||

| Line 25: | Line 33: | ||

== Searchlight Results== | == Searchlight Results== | ||

[[File:B7_all.jpg | Figure 1]] | [[File:B7_all.jpg | Figure 1]] | ||

[[File:C4_all.jpg | Figure 1]] | [[File:C4_all.jpg | Figure 1]] | ||

[[File:W5_all.jpg | Figure 1]] | [[File:W5_all.jpg | Figure 1]] | ||

| Line 38: | Line 48: | ||

[1] D.Walther, E. Caddigan, L. Fei-Fei*, D. Beck*. Natural scene categories revealed in distributed patterns of activity in the human brain. The Journal of Neuroscience, 29(34):10573-10581, 2009 (*indicates equal contribution) | [1] D.Walther, E. Caddigan, L. Fei-Fei*, D. Beck*. Natural scene categories revealed in distributed patterns of activity in the human brain. The Journal of Neuroscience, 29(34):10573-10581, 2009 (*indicates equal contribution) | ||

[2] Chih-Chung Chang and Chih-Jen Lin, LIBSVM : a library for support vector machines, 2001. Software available at http://www.csie.ntu.edu.tw/~cjlin/libsvm | |||

[3] Bangpeng Yao, Dirk B. Walther, Diane M. Beck and Li Fei-Fei. "Hierarchical Mixture of Classification Experts Uncovers Interactions Between Brain Regions." Neural Information Processing Systems Conference (NIPS 2009). December 7-10, 2009. Vancouver, B.C., Canada. | |||

Software | Software | ||

Revision as of 07:01, 15 March 2010

Project Title - Identifying ROIs using SVM Performance

Intro

Background

Scene Processing

References

Support Vector Machines

Basics and training

Complementary Connectivity

Bangpeng's Paper

Methods

Data Acquisition

The data are from the experiment described in [1]. Subjects passively viewed color images of six types of natural scenes: beaches, buildings, forests, highways, industry, and mountains. The stimuli were presented in a block design, with each block composed of 10 images from the same category displayed for 1.6 seconds each (8 brain acquisitions were made during each block). Each subject viewed 12 runs of images, with each run composed of six blocks separated by 12-second fixation periods. (The original study also collected 12 runs with inverted images, but these data were not used for the current experiment.)
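The timing figures in this paragraph can be checked with a few lines of arithmetic. The sketch below only restates the numbers given above; the per-acquisition spacing it derives assumes the 8 acquisitions are evenly spread over a block, which the text does not state explicitly.

```python
# Timing figures taken directly from the paragraph above.
IMAGES_PER_BLOCK = 10
SECONDS_PER_IMAGE = 1.6
ACQUISITIONS_PER_BLOCK = 8
BLOCKS_PER_RUN = 6
RUNS = 12

# Duration of one stimulus block.
block_seconds = IMAGES_PER_BLOCK * SECONDS_PER_IMAGE

# Assumption: acquisitions are evenly spaced within a block.
seconds_per_acquisition = block_seconds / ACQUISITIONS_PER_BLOCK

# Totals over the upright-image runs used in this project.
total_blocks = BLOCKS_PER_RUN * RUNS
total_block_acquisitions = total_blocks * ACQUISITIONS_PER_BLOCK

print(f"block length: {block_seconds:.1f} s")
print(f"spacing between acquisitions: {seconds_per_acquisition:.1f} s")
print(f"blocks: {total_blocks}, acquisitions during blocks: {total_block_acquisitions}")
```

Under these numbers each block lasts 16 seconds, and the 12 runs supply 72 blocks (576 acquisitions during stimulus presentation) for training and testing the classifiers.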

Example scene images are shown in Figure 1.

[[File:NaturalScenes.jpg|thumb|Figure 1]]

Data Analysis

I obtained data that had already been preprocessed using AFNI to provide motion correction and to subtract out the temporal mean of each voxel.

First, a spherical searchlight of 81 voxels was centered at every voxel in the brain. Searchlights that did not contain 81 valid voxels (for example, because they were near the edge of the brain) were discarded. An SVM was then trained for each searchlight using the MATLAB implementation of LIBSVM [2]. Each of the 12 runs was held out for cross-validation, one at a time; the accuracy of each SVM was calculated as the average accuracy over these 12 folds. This step was very computationally intensive, requiring approximately 48 hours on a 2.7 GHz machine. Searchlight ROIs were then ranked by accuracy.
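The searchlight construction and the leave-one-run-out scheme can be sketched in Python as follows. This is an illustration under stated assumptions, not the MATLAB/LIBSVM code used in the project: the squared radius of 6 voxel units is an assumption chosen because it yields exactly 81 voxels on an integer grid, and `train_fn` and `predict_fn` are hypothetical callbacks standing in for SVM training and prediction.

```python
from itertools import product

def sphere_offsets(radius_sq=6):
    """Integer offsets (dx, dy, dz) with dx^2 + dy^2 + dz^2 <= radius_sq.

    radius_sq=6 is an assumed value; it gives exactly 81 offsets.
    """
    r = int(radius_sq ** 0.5)
    return [(dx, dy, dz)
            for dx, dy, dz in product(range(-r, r + 1), repeat=3)
            if dx * dx + dy * dy + dz * dz <= radius_sq]

def searchlight_voxels(center, brain_voxels, offsets):
    """Return the searchlight's voxel coordinates, or None if any voxel
    is not a valid brain voxel (the discard rule described above)."""
    cx, cy, cz = center
    voxels = [(cx + dx, cy + dy, cz + dz) for dx, dy, dz in offsets]
    if all(v in brain_voxels for v in voxels):
        return voxels
    return None

def leave_one_run_out_accuracy(samples, train_fn, predict_fn):
    """samples: list of (run_id, features, label) tuples.

    Each run is held out once; the result is the mean held-out accuracy.
    train_fn and predict_fn are hypothetical stand-ins for the classifier.
    """
    run_ids = sorted({run for run, _, _ in samples})
    accuracies = []
    for held_out in run_ids:
        train = [(x, y) for run, x, y in samples if run != held_out]
        test = [(x, y) for run, x, y in samples if run == held_out]
        model = train_fn(train)
        correct = sum(predict_fn(model, x) == y for x, y in test)
        accuracies.append(correct / len(test))
    return sum(accuracies) / len(accuracies)
```

Holding out whole runs, rather than individual blocks or volumes, keeps training and test data from sharing a run, which is the property the 12-fold scheme above relies on.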

A simple form of non-maximum suppression was used: any searchlight that overlapped a searchlight with higher accuracy was discarded. The top five ROIs that remained were then selected.

In order to interpret these ROIs, it was necessary to match them to anatomical images. Although no anatomical scans were done on the subjects in this study, the distortion matrix mapping the volumes to the MNI template had already been calculated. In order to view the selected ROIs, the MATLAB searchlights were exported into AFNI format. FSL was then used to warp the ROIs according to a linear distortion matrix. The ROIs were overlaid on the MNI anatomical images using AFNI.
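The suppression rule described above can be sketched as follows. This minimal version takes the rule literally (a searchlight is dropped if any overlapping searchlight scores strictly higher, whether or not that neighbor itself survives), which is an interpretation of the text; the function name and the `(accuracy, voxel_set)` data layout are assumptions made for illustration.

```python
def non_maximum_suppression(searchlights, top_k=5):
    """searchlights: list of (accuracy, set_of_voxel_ids) pairs.

    Keep a searchlight only if no overlapping searchlight has strictly
    higher accuracy, then return the top_k survivors by accuracy.
    """
    survivors = []
    for i, (acc_i, vox_i) in enumerate(searchlights):
        dominated = any(
            acc_j > acc_i and not vox_i.isdisjoint(vox_j)
            for j, (acc_j, vox_j) in enumerate(searchlights)
            if j != i
        )
        if not dominated:
            survivors.append((acc_i, vox_i))
    # Rank the remaining local maxima and keep the best top_k.
    survivors.sort(key=lambda s: s[0], reverse=True)
    return survivors[:top_k]

# Toy example: three chained, overlapping searchlights and one isolated one.
toy = [
    (0.9, {1, 2, 3}),
    (0.8, {3, 4, 5}),    # overlaps the 0.9 searchlight
    (0.7, {5, 6, 7}),    # overlaps the 0.8 searchlight
    (0.6, {10, 11}),     # overlaps nothing
]
selected = non_maximum_suppression(toy)
```

A greedy variant that compares only against already-kept searchlights would also retain the 0.7 searchlight in this toy example; the text does not say which variant was used.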

[[File:Connectivitymodel.jpg|thumb|Figure 2]]

Finally, a connectivity analysis was conducted based on the graphical model in [3], as shown in Figure 2. The probability estimates output from each SVM are the X variables. Rather than having each ROI "vote" independently to predict the scene type, the predictions from multiple ROIs are allowed to interact in the middle layer. If a connection between two of the Y variables improves classification performance, this indicates that the connected ROIs have complementary information about the scene. Connectivity is learned by comparing classification performance with all the Ys unconnected against performance with one pair of Ys connected. All of the pairwise connections that improve performance are then added to the final connectivity graph. Although this procedure is not guaranteed to find the minimal connectivity graph, it decreases the running time from <math>O(2^{n^2})</math> to <math>O(n^2)</math>.
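The pairwise search described above can be sketched as a loop over ROI pairs. The `evaluate` callback here is hypothetical: it stands in for training the graphical model of [3] with a given set of Y-Y connections and returning its classification performance, which this sketch does not implement.

```python
from itertools import combinations

def learn_connectivity(n_rois, evaluate):
    """Return the set of ROI pairs whose single connection beats the
    unconnected baseline.

    evaluate(edges) is a hypothetical callback: it takes a frozenset of
    (i, j) pairs and returns classification performance for that graph.
    """
    baseline = evaluate(frozenset())          # all Ys unconnected
    graph = set()
    for pair in combinations(range(n_rois), 2):
        # Test one connection at a time against the baseline.
        if evaluate(frozenset([pair])) > baseline:
            graph.add(pair)
    return graph

# Toy stand-in for model performance: one helpful and one harmful edge.
calls = []
def toy_evaluate(edges):
    calls.append(edges)
    score = 0.5
    if (0, 1) in edges:
        score += 0.1
    if (2, 3) in edges:
        score -= 0.05
    return score

graph = learn_connectivity(5, toy_evaluate)
```

With the five ROIs selected here, this needs one baseline evaluation plus one per pair, 11 model fits in total, whereas an exhaustive search over every undirected graph on five nodes would need 2^10 = 1024.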

Results - What you found

Searchlight Results

[[File:B7_all.jpg | Figure 1]]

[[File:C4_all.jpg | Figure 1]]

[[File:W5_all.jpg | Figure 1]]

Connectivity Results

Some text. Some analysis. Some figures.

Conclusions

Here is where you say what your results mean.

References - Resources and related work

[1] D. Walther, E. Caddigan, L. Fei-Fei*, D. Beck*. Natural scene categories revealed in distributed patterns of activity in the human brain. The Journal of Neuroscience, 29(34):10573-10581, 2009 (*indicates equal contribution)

[2] Chih-Chung Chang and Chih-Jen Lin. LIBSVM: a library for support vector machines, 2001. Software available at http://www.csie.ntu.edu.tw/~cjlin/libsvm

[3] Bangpeng Yao, Dirk B. Walther, Diane M. Beck and Li Fei-Fei. "Hierarchical Mixture of Classification Experts Uncovers Interactions Between Brain Regions." Neural Information Processing Systems Conference (NIPS 2009). December 7-10, 2009. Vancouver, B.C., Canada.

Software