2009 Jimmy Chion


Revision as of 09:53, 8 December 2009


Project: Intersubject mapping correlations

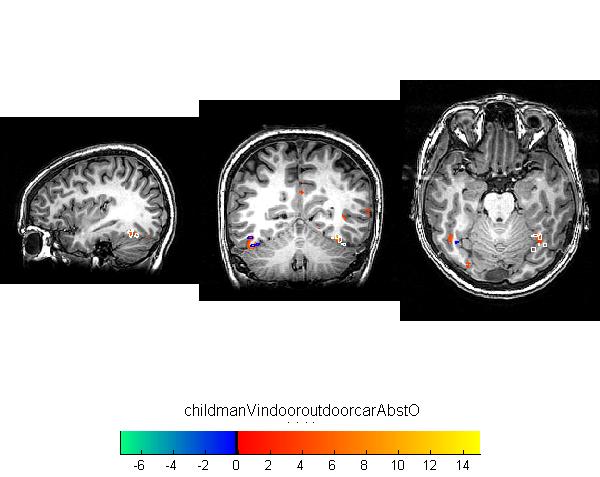

Multisubject analysis remains a core problem in neuroimaging because cortical activity varies more across individuals in less well-defined areas of the brain. This project correlated an ROI in one subject's brain with multiple ROIs in another subject's brain. In our first exploration, Dr. Nathan Witthoft and I wrote MATLAB code to find, for a given voxel in subject A, the ROI in subject B that held the voxel with the highest correlation. We iterated across all voxels in subject A's ROI and took the mode of the ROIs in which the highest correlations were found. The simple correlations were then mapped in various ways. In our second exploration, we wrote code to map, on a voxel-by-voxel basis, the average correlations of an ROI in subject A to the ROIs in subject B. Our code provides a tool to help quantify the similarity or dissimilarity of ROIs in different subjects.

Background

Research

You can use subsections if you like.

Below is an example of a retinotopic map. Or, to be precise, below will be an example of a retinotopic map once the image is uploaded. To add an image, simply put text like this inside double brackets 'MyFile.jpg | My figure caption'. When you save this text and click on the link, the wiki will ask you for the figure.

Below is another example of a retinotopic map in a different subject.

Figure 2

Once you upload the images, they look like this. Note that you can control many features of the images, like whether to show a thumbnail, and the display resolution.

The problem

Functional regions in visual cortex are identified using various types of experiments, and two cases are common. Retinotopic regions are often well defined, with agreed-upon criteria for distinguishing different areas of the visual cortex. For researchers working with category selectivity (object localizers), that is, looking for areas that respond preferentially to certain categories, the regions are much harder to delineate. The fusiform face area (FFA), for example, is usually one of several areas that show functional activity when face stimuli are presented. These multiple clusters may vary greatly from one individual to the next, which becomes a significant problem in multisubject analysis, where identifying corresponding regions is critical. Selectivity alone ceases to be a sufficient condition for calling two regions the same.

Methods

First approach

We have subject A and some number of ROIs, including the pEBA and aEBA. We also have some scans of various kinds. In subject B, we have the same scans and ROIs as well as a large number of additional ROIs. For subject A, we choose a single voxel in the pEBA and extract the fMRI data associated with it (time series, GLM betas, or similar). We then search through all the data from subject B, voxel by voxel, until we determine the voxel in subject B that is most correlated with the voxel from subject A. We record the correlation and the name of the ROI in subject B where that voxel was found. We repeat this for every voxel in subject A's pEBA. If the pEBA is 100 voxels, then at the end we should have 100 correlations and 100 ROI choices. If the pEBA is distinct from the aEBA (at least for the type of data we have collected), the majority of those ROI choices should be the pEBA in subject B. We then repeat this for every ROI in subject A. In the end, we know which ROI in subject B each ROI in subject A is best matched with.
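The procedure above can be sketched in a few lines. This is a minimal illustration in Python, not the project's MATLAB code. The function names (`pearson`, `best_match_roi`), the data layout (each voxel as a list of numbers, subject B's ROIs as a dictionary), and the toy values are all assumptions made for the example.

```python
from collections import Counter
from math import sqrt


def pearson(x, y):
    """Pearson correlation of two equal-length sequences."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    vx = sum((a - mx) ** 2 for a in x)
    vy = sum((b - my) ** 2 for b in y)
    if vx == 0 or vy == 0:
        return 0.0  # assumption: treat a flat signal as uncorrelated
    return cov / sqrt(vx * vy)


def best_match_roi(roi_a, rois_b):
    """For each voxel in roi_a, find the most correlated voxel in subject B.

    roi_a:  list of voxel signals (lists of floats) from one ROI in subject A
    rois_b: dict mapping ROI name -> list of voxel signals in subject B
    Returns (modal ROI name, list of (best correlation, ROI name) per voxel).
    """
    matches = []
    for voxel_a in roi_a:
        # Search every voxel of every ROI in subject B for the best match.
        best = max(
            (pearson(voxel_a, voxel_b), name)
            for name, voxels in rois_b.items()
            for voxel_b in voxels
        )
        matches.append(best)
    # The mode of the winning ROI names is the overall best match.
    votes = Counter(name for _, name in matches)
    return votes.most_common(1)[0][0], matches


# Toy data (hypothetical): two voxels per ROI, four samples per voxel.
peba_a = [[1.0, 2.0, 3.0, 4.0], [2.0, 4.0, 5.0, 9.0]]
rois_b = {
    "pEBA": [[1.1, 2.0, 3.2, 3.9], [2.0, 3.9, 5.2, 8.8]],
    "aEBA": [[4.0, 1.0, 3.0, 2.0], [9.0, 2.0, 6.0, 1.0]],
}
winner, matches = best_match_roi(peba_a, rois_b)
```

With this toy data, both voxels of subject A's pEBA find their best match in subject B's pEBA, so `winner` is `"pEBA"`. Repeating the call for each ROI in subject A gives the full ROI-to-ROI matching.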

the code

Second approach
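The project summary describes this exploration as mapping, voxel by voxel, the average correlations of an ROI in subject A to the ROIs in subject B. One possible reading is sketched below in Python, not the project's MATLAB code: for each voxel in subject A's ROI, average its correlation with every voxel of each ROI in subject B. The function names, data layout, and toy values are assumptions, and the actual analysis may have averaged differently.

```python
from math import sqrt


def pearson(x, y):
    """Pearson correlation of two equal-length sequences."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    vx = sum((a - mx) ** 2 for a in x)
    vy = sum((b - my) ** 2 for b in y)
    if vx == 0 or vy == 0:
        return 0.0  # assumption: treat a flat signal as uncorrelated
    return cov / sqrt(vx * vy)


def mean_correlation_map(roi_a, rois_b):
    """Average correlation of each voxel in roi_a with each ROI in subject B.

    Returns a dict: ROI name -> list holding one mean correlation
    per voxel of roi_a (a per-voxel map for that ROI pairing).
    """
    return {
        name: [
            sum(pearson(voxel_a, voxel_b) for voxel_b in voxels_b) / len(voxels_b)
            for voxel_a in roi_a
        ]
        for name, voxels_b in rois_b.items()
    }


def roi_summary(corr_map):
    """Collapse each per-voxel map to a single mean value per ROI."""
    return {name: sum(vals) / len(vals) for name, vals in corr_map.items()}


# Toy data (hypothetical): two voxels per ROI, four samples per voxel.
peba_a = [[1.0, 2.0, 3.0, 4.0], [2.0, 4.0, 5.0, 9.0]]
rois_b = {
    "pEBA": [[1.1, 2.0, 3.2, 3.9], [2.0, 3.9, 5.2, 8.8]],
    "aEBA": [[4.0, 1.0, 3.0, 2.0], [9.0, 2.0, 6.0, 1.0]],
}
corr_map = mean_correlation_map(peba_a, rois_b)
summary = roi_summary(corr_map)
```

The per-voxel lists in `corr_map` are what would be painted onto subject A's ROI, while `summary` gives one similarity score per ROI in subject B.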

Results - What you found

Retinotopic models in native space

Some text. Some analysis. Some figures.

Retinotopic models in individual subjects transformed into MNI space

Some text. Some analysis. Some figures.

Retinotopic models in group-averaged data on the MNI template brain

Some text. Some analysis. Some figures.

Retinotopic models in group-averaged data projected back into native space

Some text. Some analysis. Some figures.

Conclusions

Here is where you say what your results mean.

References - Resources and related work

References

Software

Appendix I - Code and Data

Code

Data

Appendix II - Work partition (if a group project)

Brian and Bob gave the lectures. Jon mucked around on the wiki.

Test Equations

This is a test of equation use on our wiki. The text below is copied and pasted from Wikipedia's article on the DFT.

Definition

The sequence of N complex numbers $x_0, \ldots, x_{N-1}$ is transformed into the sequence of N complex numbers $X_0, \ldots, X_{N-1}$ by the DFT according to the formula:

$$X_k = \sum_{n=0}^{N-1} x_n e^{-\frac{2\pi i}{N} k n}, \qquad k = 0, \ldots, N-1$$

where $i$ is the imaginary unit and $e^{\frac{2\pi i}{N}}$ is a primitive N'th root of unity. (This expression can also be written in terms of a DFT matrix; when scaled appropriately it becomes a unitary matrix and the $X_k$ can thus be viewed as coefficients of $x$ in an orthonormal basis.)

The transform is sometimes denoted by the symbol $\mathcal{F}$, as in $\mathbf{X} = \mathcal{F}\{\mathbf{x}\}$ or $\mathcal{F}(\mathbf{x})$ or $\mathcal{F}\mathbf{x}$.

The inverse discrete Fourier transform (IDFT) is given by

$$x_n = \frac{1}{N} \sum_{k=0}^{N-1} X_k e^{\frac{2\pi i}{N} k n}, \qquad n = 0, \ldots, N-1$$

A simple description of these equations is that the complex numbers $X_k$ represent the amplitude and phase of the different sinusoidal components of the input "signal" $x_n$. The DFT computes the $X_k$ from the $x_n$, while the IDFT shows how to compute the $x_n$ as a sum of sinusoidal components $\frac{1}{N} X_k e^{\frac{2\pi i}{N} k n}$ with frequency $k/N$ cycles per sample. By writing the equations in this form, we are making extensive use of Euler's formula to express sinusoids in terms of complex exponentials, which are much easier to manipulate. In the same way, by writing $X_k$ in polar form, we immediately obtain the sinusoid amplitude $A_k/N$ and phase $\varphi_k$ from the complex modulus and argument of $X_k$, respectively:

$$A_k = |X_k| = \sqrt{\operatorname{Re}(X_k)^2 + \operatorname{Im}(X_k)^2}$$

$$\varphi_k = \arg(X_k) = \operatorname{atan2}\big(\operatorname{Im}(X_k), \operatorname{Re}(X_k)\big)$$

Note that the normalization factor multiplying the DFT and IDFT (here 1 and 1/N) and the signs of the exponents are merely conventions, and differ in some treatments. The only requirements of these conventions are that the DFT and IDFT have opposite-sign exponents and that the product of their normalization factors be 1/N. A normalization of $\sqrt{1/N}$ for both the DFT and IDFT makes the transforms unitary, which has some theoretical advantages, but it is often more practical in numerical computation to perform the scaling all at once as above (and a unit scaling can be convenient in other ways).

(The convention of a negative sign in the exponent is often convenient because it means that $X_k$ is the amplitude of a "positive frequency" $2\pi k/N$. Equivalently, the DFT is often thought of as a matched filter: when looking for a frequency of +1, one correlates the incoming signal with a frequency of −1.)
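The definition can be checked numerically. The sketch below is a direct, unoptimized Python transcription of the DFT and IDFT sums, using the convention stated here (factor 1 on the DFT, 1/N on the IDFT, negative exponent in the forward transform). The function names and the test signal are chosen for illustration only; a real analysis would use an FFT routine instead.

```python
import cmath


def dft(x):
    """Direct O(N^2) evaluation of X_k = sum_n x_n * exp(-2*pi*i*k*n/N)."""
    n_samples = len(x)
    return [
        sum(
            x[n] * cmath.exp(-2j * cmath.pi * k * n / n_samples)
            for n in range(n_samples)
        )
        for k in range(n_samples)
    ]


def idft(X):
    """Direct evaluation of x_n = (1/N) * sum_k X_k * exp(+2*pi*i*k*n/N)."""
    n_samples = len(X)
    return [
        sum(
            X[k] * cmath.exp(2j * cmath.pi * k * n / n_samples)
            for k in range(n_samples)
        ) / n_samples
        for n in range(n_samples)
    ]


# One cycle of a cosine sampled at four points: cos(2*pi*n/4).
signal = [1.0, 0.0, -1.0, 0.0]
spectrum = dft(signal)

# Amplitude and phase come from the modulus and argument of each X_k.
amplitudes = [abs(c) for c in spectrum]
phases = [cmath.phase(c) for c in spectrum]

# The IDFT recovers the original samples up to rounding error.
recovered = idft(spectrum)
```

For this signal the amplitudes are approximately 0, 2, 0, 2: the cosine splits equally between the k = 1 and k = 3 bins, and dividing by N = 4 gives the 1/2 weights of the two complex exponentials that make up a cosine.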

In the following discussion the terms "sequence" and "vector" will be considered interchangeable.