Deblurring sensor images using known PSF models

Revision as of 04:03, 21 March 2014

Project Team: Jona Babi, Rakesh Ramesh, Dimitris Papadimitriou

In this project, we aim to deblur the image produced by the sensor using the known PSF model. We extend the study to different types of PSF models (shift-invariant, shift-variant and wavelength-dependent PSFs) and perform noise-margin analysis for different types of sensor noise: Read noise, Pixel Response Non-Uniformity (PRNU) and Dark Signal Non-Uniformity (DSNU).

We will then use the developed model to frame answers to interesting questions like:

- How well can the image be sharpened using deconvolution if PSF is known?

- Which type of PSF-model provides us sharper deblurred images?

- What noise-margins can a PSF-model tolerate for deblurring?

- What type of noise can be tolerated by the sensor?

Introduction

Image restoration refers to the process of removing or minimizing known degradations in an image. This includes deblurring of images degraded by the limitations of the sensor or its environment, noise filtering, and correction of geometric distortions or non-linearities due to sensors. One of the reasons why the degradation happens is that most lenses, including the lens of the human eye, are not perfect optical systems. If an exceedingly small dot of light is projected through a lens or an imaging system, the output will include the point surrounded by a small amount of blur. The question is how this degradation can be represented. The answer is the Point Spread Function (PSF).

The PSF describes the response of an imaging system to a point input and is analogous to the impulse response of a filter [1]. A point input, represented as a single pixel in the “ideal” image, will be reproduced as something other than a single pixel in the “real” image. By the superposition principle, the output image may then be regarded as a two-dimensional convolution of the “ideal” image with the PSF. The PSF can be represented as a 4D-function that depends on the image plane coordinates (x, y), the original field position and the wavelength.

There are different types of PSF models that one can use to represent the blurring by the optics; they are essentially simplifications of the 4D-function obtained by fixing some of its parameters. For this project, we consider three PSF models: Shift-Invariant PSF, Shift-Variant PSF and Wavelength-dependent PSF. The Shift-Invariant PSF model assumes that the PSF is the same over the whole scene, so the final image is just a 2D convolution of the scene and the PSF. The Shift-Variant PSF model relaxes that constraint, so the PSF depends on the position of the pixel in the original image. We represent a Shift-Variant PSF using a matrix of Shift-Invariant PSFs indexed by the field position in the original scene. In the Wavelength-dependent PSF model, we vary the PSF across wavelengths to capture the chromatic aberration caused by different blur functions at different wavelengths.
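The three PSF models can be written out as image formation equations. This is a sketch in generic notation: the symbols f (ideal image), h (PSF), g (blurred image), the summation indices (u, v) and the wavelength subscript are assumptions introduced here for illustration, not notation taken from the project.

```latex
% Shift-invariant model: one PSF h for the whole scene (plain 2D convolution)
g(x, y) = \sum_{u} \sum_{v} f(u, v)\, h(x - u,\; y - v)

% Shift-variant model: the PSF also depends on the field position (u, v)
g(x, y) = \sum_{u} \sum_{v} f(u, v)\, h(x - u,\; y - v;\; u, v)

% Wavelength-dependent model: a separate PSF h_\lambda per wavelength
g_{\lambda}(x, y) = \sum_{u} \sum_{v} f_{\lambda}(u, v)\, h_{\lambda}(x - u,\; y - v)
```

In the shift-variant case the sum is no longer a convolution, which is why a single deconvolution with one PSF cannot undo it over the whole image.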

One approach towards image quality improvement is to use the lens point spread function (PSF) to deconvolve the image so that the aberrations can be reduced. This step requires knowledge of the underlying PSFs, which are usually acquired by taking photos of pinhole arrays, a process that is laborious and often prohibitive. Alternatively, one can simulate the PSF if the lens model is known. It is therefore possible to measure and improve the performance of an imaging system by running simulations, without any immediate need for hardware. Such simulation capabilities allow almost effortless visualization of the image quality impact of component substitution, or of modifications to tolerances or component specifications. Consequently, the construction of precise image capture simulations is an active research area due to its significance in image quality improvement. [2] [3]

Methods

Tools

We used the Image Systems Engineering Toolbox (ISET) [4] to perform all the analysis for the project. We utilized the inbuilt Lucy-Richardson deconvolution method [5] to perform the deblurring. The Lucy-Richardson method iteratively deconvolves the blurred image, seeking the deblurred image that maximizes the likelihood of obtaining the observed image when blurred by the supplied PSF. We also use the Zemax example optics (rtZemaxExample.mat), found under the ISET data, for the Shift-Variant and Wavelength-dependent studies.
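To make the iteration concrete, here is a minimal one-dimensional sketch of the Lucy-Richardson update in plain Python. It is not the toolbox routine used in the project: the function names, the zero-padded boundary handling, the flat initial estimate and the iteration count are all assumptions chosen for illustration.

```python
def convolve_same(signal, kernel):
    """Same-size 1-D convolution with zero padding (kernel length must be odd)."""
    half = len(kernel) // 2
    n = len(signal)
    out = [0.0] * n
    for i in range(n):
        acc = 0.0
        for k, weight in enumerate(kernel):
            j = i - (k - half)
            if 0 <= j < n:
                acc += signal[j] * weight
        out[i] = acc
    return out


def richardson_lucy(observed, psf, iterations=50, eps=1e-12):
    """Illustrative Lucy-Richardson iteration for a non-negative 1-D signal.

    Each step re-blurs the current estimate, compares it with the observation
    as a ratio, and back-projects that ratio through the flipped PSF.
    """
    psf_flipped = psf[::-1]
    mean_level = sum(observed) / len(observed)
    estimate = [max(mean_level, eps)] * len(observed)  # flat starting guess
    for _ in range(iterations):
        reblurred = convolve_same(estimate, psf)
        ratio = [o / max(r, eps) for o, r in zip(observed, reblurred)]
        correction = convolve_same(ratio, psf_flipped)
        estimate = [e * c for e, c in zip(estimate, correction)]
    return estimate


# Toy example: a single point source blurred by a small known PSF.
truth = [0.0] * 15
truth[7] = 1.0
psf = [0.25, 0.5, 0.25]
blurred = convolve_same(truth, psf)
restored = richardson_lucy(blurred, psf)
```

In this noise-free toy case the estimate concentrates back onto the point source as the iterations proceed; with sensor noise the same multiplicative update tends to amplify the noise, which is what the noise-margin analysis probes.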

Steps

Simulation

We simulated the image system pipeline from the scene to the optical image to the sensor image using ISET. We then deblurred the sensor image and compared it to the original image to assess the quality of our deblurring method. Broadly, we can separate our simulation into the following steps:

- Create a scene (point-array / cameraman / lenna)

- Load the zemax ray trace PSF data (aspherical 2mm lens)

- Generate the zemax optical image

- [ Create optical image with a shift invariant zemax psf ]

- Create a sensor (monochromatic / bayer)

- [ Modify the sensor noise attributes ]

- Capture the sensor image

- Deconvolve the sensor image using the known psf

- Compare the deblurred image with the original image (PSNR, SSIM)

Analysis

After setting up the simulation infrastructure and adding in the PSF models to the flow, we perform the following analysis for the purpose of this project:

- Analyse how well the deblurred images compare to our original image with different PSF models (Shift-Invariant, Shift-Variant, Wavelength-dependent)

- Analyse the deblurring quality for different types of scenes - Sparse (Point-array) and Dense (Cameraman, Lenna)

- Analyse the noise margins on the image that can be tolerated by the blur function

- Analyse the noise margins for different types of sensor noise (Read noise, PRNU, DSNU)

Image Quality Metrics

In order to measure the quality of the blurred and deblurred images we will use two of the most widely used metrics, the Peak Signal to Noise Ratio (PSNR) and Structural Similarity Index (SSIM).

- Peak Signal to Noise Ratio (PSNR):

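The peak signal to noise ratio is the most commonly used image quality metric, and high values almost always signify a better reconstruction of the original image. It is defined as follows:

<math>PSNR=20\log_{10}\frac{MAX_i}{\sqrt{MSE}}</math>

with the mean squared error being

<math>MSE=\frac{1}{mn}\sum_{i=0}^{m-1}\sum_{j=0}^{n-1}\left[I(i,j)-K(i,j)\right]^2</math>

where I, K and <math>MAX_i</math> are the control image, the output image and the maximum pixel value of the image respectively, and both images have m by n pixels.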
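A minimal sketch of the PSNR computation in plain Python, with the MSE taken as the mean squared pixel difference and PSNR as 20·log10(MAX / sqrt(MSE)). The function names and the default peak value of 255 are assumptions for illustration; images are given as lists of rows.

```python
import math


def mse(reference, output):
    """Mean squared error between two equally sized images (lists of rows)."""
    rows, cols = len(reference), len(reference[0])
    total = 0.0
    for i in range(rows):
        for j in range(cols):
            diff = reference[i][j] - output[i][j]
            total += diff * diff
    return total / (rows * cols)


def psnr(reference, output, max_value=255.0):
    """Peak signal to noise ratio in dB; infinite when the images are identical."""
    err = mse(reference, output)
    if err == 0:
        return math.inf
    return 20.0 * math.log10(max_value / math.sqrt(err))
```

For example, a single pixel that is off by 10 in a 2 by 2 image gives an MSE of 25 and, with a peak value of 255, a PSNR of 20·log10(51) dB.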

- Structural Similarity Index (SSIM):

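The structural similarity index measures the similarity between two images by taking structural information into account. The definition is:

<math>SSIM(x,y)=\frac{(2\mu_x\mu_y+c_1)(2\sigma_{xy}+c_2)}{(\mu_x^2+\mu_y^2+c_1)(\sigma_x^2+\sigma_y^2+c_2)}</math>

with <math>\mu</math>, <math>\sigma^2</math> and <math>\sigma_{xy}</math> being the mean, variance and covariance, and <math>c_1</math>, <math>c_2</math> constants that avoid division by a small number.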
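The following sketch evaluates the SSIM expression once over the whole image, using global means, variances and covariance. This is a simplification: SSIM is normally computed over local windows and then averaged, and the project may well have done so. The function name and the constants c1 = (0.01·MAX)² and c2 = (0.03·MAX)² are assumptions, following a commonly used convention.

```python
def global_ssim(x, y, max_value=255.0, k1=0.01, k2=0.03):
    """Single-window SSIM over two equally sized images (lists of rows)."""
    xs = [v for row in x for v in row]
    ys = [v for row in y for v in row]
    n = len(xs)
    mu_x = sum(xs) / n
    mu_y = sum(ys) / n
    var_x = sum((v - mu_x) ** 2 for v in xs) / n
    var_y = sum((v - mu_y) ** 2 for v in ys) / n
    cov_xy = sum((a - mu_x) * (b - mu_y) for a, b in zip(xs, ys)) / n
    c1 = (k1 * max_value) ** 2  # stabilises the luminance term
    c2 = (k2 * max_value) ** 2  # stabilises the contrast/structure term
    numerator = (2 * mu_x * mu_y + c1) * (2 * cov_xy + c2)
    denominator = (mu_x ** 2 + mu_y ** 2 + c1) * (var_x + var_y + c2)
    return numerator / denominator
```

An image compared with itself scores 1, while an image compared with its inverse has negative covariance and scores below zero.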

Results

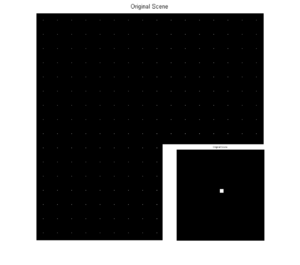

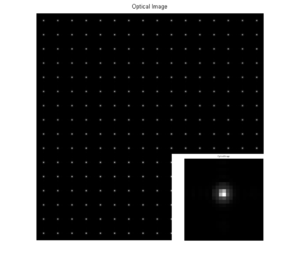

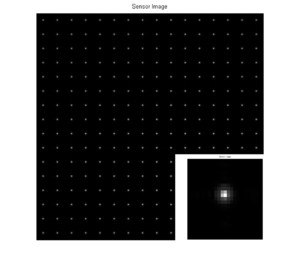

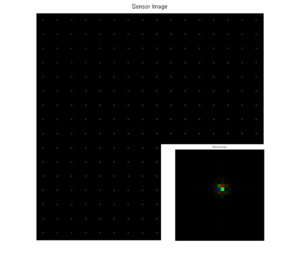

PSF Model: Shift-Invariant; Scene: Point-Array

In the first experiment, we create a point-array scene (1 wavelength only) and blur the scene using a shift-invariant PSF. In order to blur this image uniformly we used only one of the PSFs that we obtained from the optics object of the Zemax file (which returns 37 by 12 distinct PSFs). The blurred optical image and the sensor image can be seen here:

-

Original Scene

-

Optical Image (PSNR=25.76, SSIM=0.87)

-

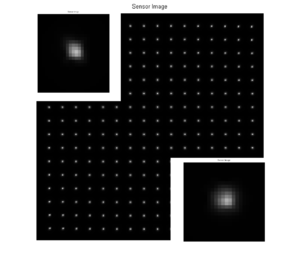

Sensor Image (PSNR=27.40, SSIM=0.86)

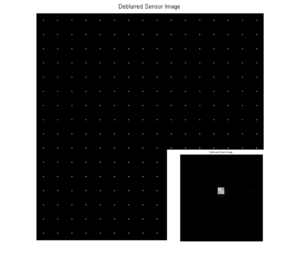

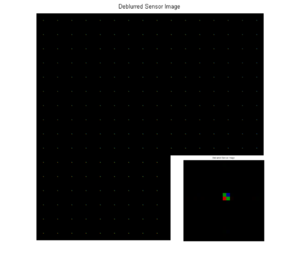

We note that all the pixels of the image are blurred in exactly the same way because the PSF is shift-invariant. In order to deblur the sensor image, we deconvolve it using the same PSF that we used to blur the original scene. The deblurred image can be seen here:

- Sensor Image

- Deblurred Sensor Image (PSNR=28.22, SSIM=0.94)

We observe that the deblurring results in a sharper image with a higher PSNR value. However, this image differs slightly from the original one because the shift-invariant optics in ISET is implemented as an Optical Transfer Function (OTF), which performs the convolution in the Fourier domain: the OTF is multiplied by the FFT of the original image, and the inverse FFT is then applied to obtain the blurred image. When the inverse Fourier transform is applied, ISET keeps only the magnitude of the resulting blurred image and discards the phase of the complex value. Hence, the final image is the deconvolution of the magnitude of the IFFT, which doesn't reproduce the original image exactly but is very close to it.

PSF Model: Shift-Variant; Scene: Point-Array

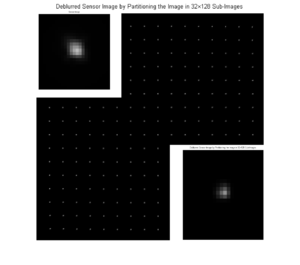

In the shift-variant case we use the field-position-dependent PSF to blur and deblur the image. The effect of using such PSFs can be seen in the resulting images, in which the blur is clearly nonuniform across the pixels. Since we study the shift-variant case, the deblurring has to take this into account. More specifically, our approach first separates the sensor image into a number of sub-images. We then deblur each sub-image using the PSF that corresponds to its field position, and finally we merge the sub-images to obtain the complete image. In the case that we present we used a partitioning. The resulting deblurred image is significantly improved in quality, both visually and according to the PSNR and SSIM metrics. Given the simple structure of the image, there is no need to smooth the output image, since the boundary artifacts do not affect its quality.

- Sensor Image

- Deblurred Sensor Image

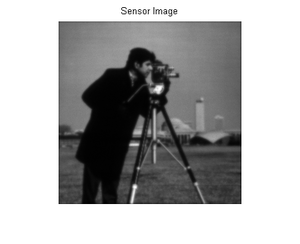
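The partition, deblur and merge procedure for the shift-variant case can be sketched as follows. This is an illustration in plain Python rather than the project's ISET code: `deblur_shift_variant` and the `deblur_tile` callback are hypothetical names, and the callback is assumed to look up the PSF for a tile from the tile's position and return a deblurred tile of the same size.

```python
def deblur_shift_variant(image, tile_rows, tile_cols, deblur_tile):
    """Deblur an image tile by tile and merge the results.

    image       -- list of rows of pixel values
    tile_rows   -- tile height in pixels (edge tiles may be smaller)
    tile_cols   -- tile width in pixels (edge tiles may be smaller)
    deblur_tile -- hypothetical callback (tile, row_start, col_start) -> tile;
                   it would choose the PSF from the tile's field position
    """
    height, width = len(image), len(image[0])
    result = [[0.0] * width for _ in range(height)]
    for r0 in range(0, height, tile_rows):
        for c0 in range(0, width, tile_cols):
            # Cut out the sub-image that starts at (r0, c0).
            tile = [row[c0:c0 + tile_cols] for row in image[r0:r0 + tile_rows]]
            restored = deblur_tile(tile, r0, c0)
            # Paste the deblurred sub-image back at the same position.
            for dr, row in enumerate(restored):
                result[r0 + dr][c0:c0 + len(row)] = row
    return result
```

With an identity callback the function returns the input unchanged, which is a quick way to check the partition and merge bookkeeping before plugging in a real deconvolution.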

PSF Model: Shift-Invariant; Scene: Cameraman

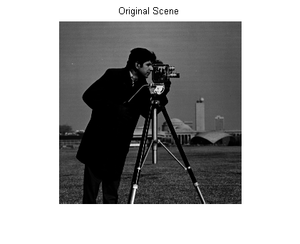

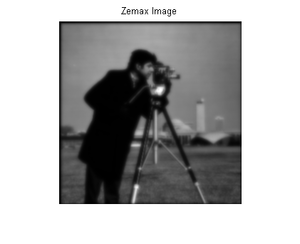

In this part we will use the cameraman monochromatic image in order to perform the pipeline simulations. The original image, along with the blurred Zemax figure are the following:

- Original Scene

- Zemax Blurred Optical Image

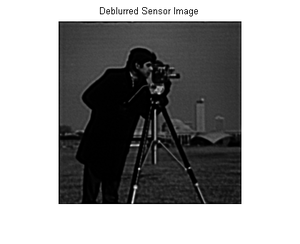

In order to deblur the sensor image that we obtain, we use the same field-position-independent PSF that we used to blur the image. The deblurred image is significantly improved compared to the sensor image, but it is clearly not as sharp as the original one and it has lower contrast. Both the sensor image and the deblurred image are included below.

- Sensor Image (PSNR=22.45, SSIM=0.67)

- Deblurred Sensor Image (PSNR=26.92, SSIM=0.86)

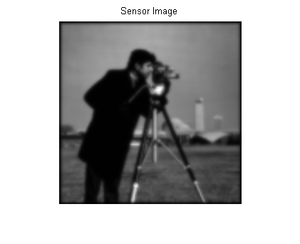

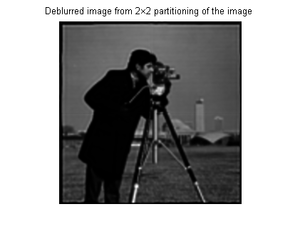

PSF Model: Shift-Variant; Scene: Cameraman

The blurring in this case is not shift-invariant, as we use a field-position-dependent PSF to blur the image. The deblurring is carried out as described in the point-array example, and the deblurred image that we obtain, along with the sensor image, can be seen here:

- Sensor Image

- Deblurred Image

However, we observe that, besides deblurring the image, our method also adds noise in the form of edge artifacts that were not present in the blurred image. These artifacts appear because the deconvolution cannot produce proper results for pixels close to the boundary of the image being deconvolved, which here is each sub-image. For this reason we need to smooth the deblurred image in order to avoid such coarse effects. We tried a number of methods for this purpose. First, we used edge detection algorithms to detect the sharp edges of the image and delete them. However, this approach lost information about the edges that already existed in the image. Furthermore, uniform Gaussian smoothing, applied either to the entire image or only to the noisy edges, did not lead to solid results. The method that gives the best results, according to both visual and PSNR criteria, is the one in which we augment each sub-image by replicating, a few times, the row and column of pixels that are in contact with another sub-image. We then perform deconvolution on each of these augmented sub-images and merge them, ignoring the replicated pixels, so that we eventually get an image of exactly the same dimensions as the initial one. One instance of partitioning of the image can be seen here:

- Deblurred Image

- Smoothed Deblurred Image

Looking closely, we can still detect the edge artifacts, which are now very limited. For this specific image, dividing it into parts smaller than a certain size does not result in a significant improvement in the PSNR metric.
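The replicate-and-crop augmentation described above can be sketched in plain Python as follows. The helper names, the per-side padding arguments and the `deblur` callback are assumptions for illustration; the number of replicated rows and columns used in the project is not stated, so it is left as a parameter.

```python
def pad_replicate(tile, top, bottom, left, right):
    """Extend a tile by repeating its border rows and columns.

    Each argument is the number of copies added on that side, so a side
    that touches no neighbouring sub-image can be given 0.
    """
    rows = [[row[0]] * left + list(row) + [row[-1]] * right for row in tile]
    above = [list(rows[0]) for _ in range(top)]
    below = [list(rows[-1]) for _ in range(bottom)]
    return above + rows + below


def crop(tile, top, bottom, left, right):
    """Remove the padding added by pad_replicate with the same arguments."""
    height, width = len(tile), len(tile[0])
    return [row[left:width - right] for row in tile[top:height - bottom]]


def deblur_padded(tile, pads, deblur):
    """Pad, deblur with a hypothetical callback, then discard the padding.

    pads   -- (top, bottom, left, right) replication counts
    deblur -- callback mapping a tile to a deblurred tile of the same size
    """
    padded = pad_replicate(tile, *pads)
    return crop(deblur(padded), *pads)
```

The idea is that the deconvolution's boundary errors fall mostly on the replicated pixels, which are thrown away before the sub-images are merged.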

PSF Model: Wavelength-dependent; Scene: Point-Array

- Sensor Image

- Deblurred Image

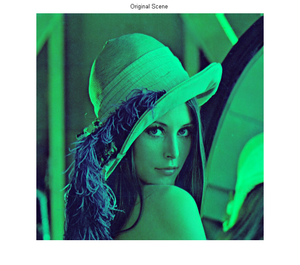

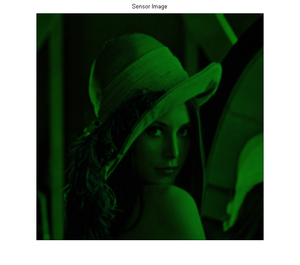

PSF Model: Wavelength-dependent; Scene: Lenna

- Original Scene

- Sensor Image

- Deblurred Image

Noise Margins

The deconvolution algorithm used to deblur the sensor image is sensitive to noise, and a non-ideal sensor adds noise to the sensor image. The types of noise are:

- Shot Noise

- Read Noise

- Pixel Response Non-Uniformity (PRNU)

- Dark Signal Non-Uniformity (DSNU)

Noise margin analysis characterizes the sensitivity of the deblurring scheme to the various types of noise.
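As a rough illustration of how three of these noise sources enter a pixel value, the sketch below applies a generic textbook noise model in plain Python. It is not ISET's sensor model: the function name, the Gaussian distributions, the parameter names and the clipping at zero are assumptions, and shot noise is omitted for brevity.

```python
import random


def add_sensor_noise(signal, read_sigma=0.0, prnu_sigma=0.0, dsnu_sigma=0.0, seed=0):
    """Return a noisy copy of a non-negative image (list of rows).

    read_sigma -- standard deviation of additive read noise
    prnu_sigma -- standard deviation of the per-pixel gain error (PRNU)
    dsnu_sigma -- standard deviation of the per-pixel dark offset (DSNU)
    seed       -- seed for the random generator, for repeatable experiments
    """
    rng = random.Random(seed)
    noisy = []
    for row in signal:
        noisy_row = []
        for value in row:
            gain = 1.0 + rng.gauss(0.0, prnu_sigma)  # PRNU scales the signal
            offset = rng.gauss(0.0, dsnu_sigma)      # DSNU shifts the dark level
            read = rng.gauss(0.0, read_sigma)        # read noise is added on readout
            noisy_row.append(max(0.0, value * gain + offset + read))
        noisy.append(noisy_row)
    return noisy
```

A noise-margin sweep would then raise one sigma at a time, deblur the noisy image, and record the PSNR and SSIM against the original scene to find the level at which deblurring stops helping.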

Conclusions

The scope of the project was to determine how well we can sharpen an image when the underlying PSF is known, as a function of the PSF model and the different noise parameters. Given a field-position-independent PSF and no additional sensor noise, we observed that we could achieve a significant improvement in the sharpness of the image. In the case of shift-variant PSFs we had to partition the image and deblur each part using a different PSF. Partitioning into a small number of sub-images has a significant impact on the PSNR, but partitioning the image into very small sub-images does not give further improvement, mainly because the edge artifacts start to have a greater effect on the quality.

References

1. Wikipedia: Point Spread Function

2. Y. Shih, B. Guenter and N. Joshi, “Image Enhancement using Calibrated Lens Simulations”

3. R. Short, D. Williams and A. Jones, “Image Capture Simulation Using an Accurate and Realistic Lens Model”

4. J. Farrell, P. B. Catrysse and B. Wandell, “Digital Camera Simulation”, http://white.stanford.edu/~brian/papers/pdc/2012-DCS-OSA-Farrell.pdf

Appendix I

Appendix II

Our group met frequently to discuss and understand ISET before setting up the simulation infrastructure for the experiments. Then we carried out the experiments in parallel and analysed each part individually and as a group to draw concrete conclusions. We divided the project workload very broadly as listed below:

Rakesh Ramesh: Shift-invariant PSF study, Wavelength-dependent PSF study

Jona Babi: PSF-size influence study, Noise margins analysis

Dimitris Papadimitriou: Shift-variant PSF study, Edge Artefact smoothing, Image Quality Metrics

We worked on the group presentation and the wiki together as a group.

Acknowledgements

We would like to thank Joyce Farrell and Andy Lin for their helpful guidance during our project.