Andy Lin: Difference between revisions

imported>Ydna |

imported>Ydna |

||

| Line 120: | Line 120: | ||

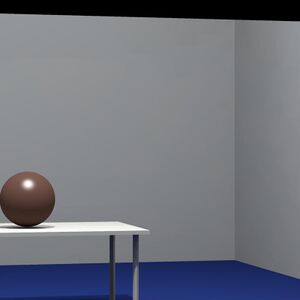

[[File:Andy_FinalRenderedImage_TableSphere.png| | [[File:Andy_FinalRenderedImage_TableSphere.png|300px]] | ||

''Figure 9: ISET rendering of table-sphere.'' | ''Figure 9: ISET rendering of table-sphere.'' | ||

Revision as of 13:11, 18 March 2011

Introduction

It is extremely useful to render 3D scenes and feed the radiance information of these scenes into camera simulation software such as ISET [CITATION: ISET]. Synthesizing scenes allows control over scene attributes that could be difficult to control when working with real-world scenes. For example, scene synthesis allows control over foreground objects, backgrounds, texture, and lighting. Most importantly, the depth information is very easy to obtain, in contrast to the complicated depth estimation algorithms needed for real-world scenes.

Synthesized scenes are often criticized as not being realistic enough. However, with enough effort and precision, highly realistic scenes can be generated. See Figure 1 for examples of scenes synthesized with the Radiance software [CITATION: Radiance].

Figure 1: Rendered scenes can be quite realistic. These scenes, in particular, are example scenes from Radiance.

Moreover, if these rendered scenes can be fed into camera simulation software such as ISET, then we can simulate photographs of these artificial scenes. In addition, the 3D information allows for renderings of advanced photography attributes that have not been simulated extensively before in a well-controlled synthesized environment. For example, the 3D information allows for the simulation of multiple-camera systems, proper lens depth-of-field, synthetic aperture, flutter shutter, and even light-field cameras.

For this project, I used the RenderToolBox software tool, provided by David Brainard [CITATION: Brainard], to synthesize example 3D scenes. The radiance of these scenes, along with the depth map, was then fed into the ISET simulation environment for a simulated camera capture of the scene.

Methods

In order to use RenderToolBox, a deeper understanding of its architecture is required. RenderToolBox is a wrapper around several other simulation tools. In particular, Radiance, PsychToolBox, Physically Based Rendering (PBRT), and SimToolBox are used to help render an artificial scene. The most important tool that RenderToolBox is built on is Radiance, a scene rendering tool that contains a ray tracer.

RenderToolBox

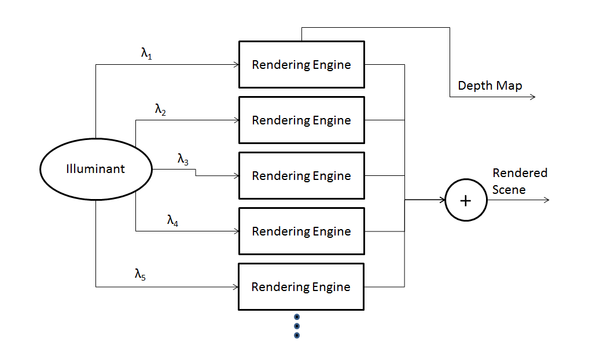

RenderToolBox works by repeatedly calling a separate rendering engine. Several rendering engines are available in RenderToolBox, such as Radiance and PBRT. Although these rendering engines are very capable, they are limited in their output: they can only output images in a tri-chromatic colorspace, whereas proper camera simulation requires multi-spectral data. RenderToolBox addresses this problem. To produce multi-spectral renderings, it provides a series of monochromatic input light sources to the rendering engine, each at a specific wavelength. The intensity of each monochromatic source is set to the intensity of the desired light source at that wavelength. After the rendering engine has rendered the scene once per wavelength, RenderToolBox combines the resulting data. RenderToolBox also allows us to obtain a depth map of the scene at the specified camera position. See Figure 2 for the data flow of RenderToolBox.

Figure 2: Data flow for RenderToolBox and interaction with Radiance.
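The per-wavelength rendering loop described above can be sketched as follows. This is only an illustration of the idea, not RenderToolBox code: `render_single_wavelength` is a hypothetical stand-in for one call to the rendering engine, and the toy "scene" is just a reflectance image per wavelength.

```python
import numpy as np

def render_single_wavelength(reflectance, light_intensity):
    # Hypothetical stand-in for one rendering-engine call with a
    # monochromatic light source: here it simply scales the reflectance.
    return reflectance * light_intensity

def render_per_wavelength(reflectance_by_wavelength, illuminant_spd):
    """Render the scene once per wavelength, using the illuminant's
    intensity at that wavelength as the monochromatic light level."""
    renders = {}
    for wavelength, reflectance in reflectance_by_wavelength.items():
        renders[wavelength] = render_single_wavelength(
            reflectance, illuminant_spd[wavelength]
        )
    return renders

# Toy example: three wavelengths (nm) and a flat 2x2 gray surface.
illuminant_spd = {450: 1.0, 550: 2.0, 650: 4.0}
scene = {w: np.full((2, 2), 0.5) for w in illuminant_spd}
renders = render_per_wavelength(scene, illuminant_spd)
```

Each entry of `renders` holds the image for one wavelength; together they form the multi-spectral description of the scene.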

Available Objects To Render

A multitude of shapes, textures, lights, etc. can be rendered with Radiance, and therefore, with RenderToolBox as well. For example, Radiance is able to render different 3D shapes such as cones, spheres, cylinders, and cups. All these objects can then be made of different materials such as plastics, metals, and textures. Interesting lighting effects can be rendered as well for the scene. Not only can there be multiple light sources, but Radiance can also render glowing surfaces, mirrors, prisms, glass, and even mist. Also, any combination of different materials and lighting can be assigned to the objects.

Moreover, RenderToolBox and Radiance are able to use triangular meshes to create objects. This is a very powerful feature because any object that can be approximated with a 3D mesh can be rendered. In fact, RenderToolBox allows for the import of 3D meshes from 3D modeling software such as Maya.

Rendering Options

RenderToolBox is also highly customizable in terms of rendering options. The user can select the rendering engine (usually Radiance or PBRT), as well as the camera angle, position, and field of view. The prominent light source can also be changed, as can the number of rendered light bounces. In other words, if there are highly reflective surfaces that can result in multiple light bounces, the user can choose how many light bounces should be simulated when rendering.

Interface With ISET

Rendering multi-spectral scenes is useful, but being able to use these scenes in a camera simulation framework such as ISET is even more useful. Interfacing with ISET is relatively straightforward. The output from RenderToolBox is a Matlab .mat file, which contains a cell array, with each cell specifying the radiance of the scene at every point in the image. This cell array was converted to a 3D matrix, with the third dimension specifying wavelength and the first two dimensions specifying position in the camera plane. This 3D matrix is then fed into an ISET scene. The proper light source and brightness are also specified for the ISET scene. See Appendix for this code.
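The conversion from a cell array to a 3D matrix can be illustrated with a short NumPy sketch. It assumes (this is not stated explicitly in the text) that each cell holds one 2D radiance image for a single wavelength; the function name `cells_to_cube` is hypothetical, and the project's actual Matlab code is the one referred to in the Appendix.

```python
import numpy as np

def cells_to_cube(cells):
    """Stack equally sized 2D radiance images into an
    (height, width, n_wavelengths) array."""
    images = [np.asarray(c, dtype=float) for c in cells]
    shape = images[0].shape
    if len(shape) != 2 or any(img.shape != shape for img in images):
        raise ValueError("every cell must hold a 2D image of the same size")
    return np.stack(images, axis=2)

# Toy data: five wavelength samples of a 2x3 image.
cells = [np.full((2, 3), float(i)) for i in range(5)]
cube = cells_to_cube(cells)
spectrum_at_pixel = cube[0, 0, :]  # radiance spectrum at one pixel
```

Indexing the third dimension at a fixed pixel returns that pixel's radiance spectrum, which is the layout a multi-spectral scene description needs.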

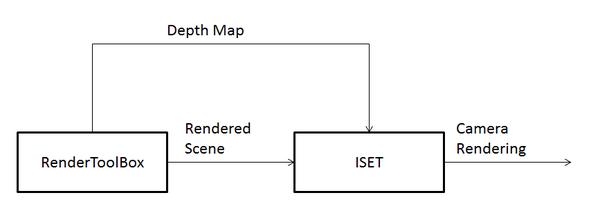

The second output from RenderToolBox is a depth-map. The depth-map is obtained by adding a rendering call to Radiance by modifying a RenderToolBox function. Although Radiance offers an output file for the depth-map, it is in a raw binary format. A Matlab script was written in order to properly read this data. After reading the depth map data, some data conditioning was performed to make sure that the depth data was within a proper range. See Figure 3 for a block diagram of how RenderToolBox can interface with ISET.

Figure 3: RenderToolBox and ISET can interface with each other quite easily.
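Reading and conditioning a raw binary depth map might look like the sketch below. The data layout is an assumption, not something taken from the project's Matlab script: native-endian 32-bit floats, one value per pixel, stored row by row. The function name and the `max_depth` parameter are hypothetical.

```python
import numpy as np

def read_raw_depth(raw_bytes, width, height, max_depth):
    """Decode raw float32 depth values and clamp them to [0, max_depth]."""
    depth = np.frombuffer(raw_bytes, dtype=np.float32)
    if depth.size != width * height:
        raise ValueError("byte count does not match the image size")
    # frombuffer returns a read-only view, so copy before editing.
    depth = depth.reshape(height, width).copy()
    # Conditioning: rays that hit nothing may produce non-finite or
    # very large distances; map them to the maximum allowed depth.
    depth[~np.isfinite(depth)] = max_depth
    return np.clip(depth, 0.0, max_depth)

# Toy input: four depth samples, two of them out of range.
raw = np.array([1.0, 2.0, np.inf, 50.0], dtype=np.float32).tobytes()
depth_map = read_raw_depth(raw, width=2, height=2, max_depth=10.0)
```

For a file on disk, the bytes would come from reading the file in binary mode before calling the function.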

Simulating Lens Depth-of-Field Blur

One possible simulation using RenderToolBox data in ISET is lens depth-of-field (DOF) blur. A lens is able to converge light rays that originate from one point in space back onto one point at the image plane. However, the lens can only do this for points within a limited range of distances. If the object is too close, the lens will not be strong enough to re-converge the light by the time it reaches the image plane, resulting in a blurry circle corresponding to that one point in space. On the other hand, if the object is too far, the lens will be too strong: the light rays will cross each other before the image plane and once again form a blurry circle at the image plane. See Figure 4 for an illustration of this concept. Therefore, the point spread function (PSF), or impulse response, of the lens varies with the depth of an object. For areas of the image that are at the focal plane, the PSF will be close to an impulse. For areas of the image that are further from the focal plane, the PSF becomes an increasingly large circle, producing blur in the areas of the scene that are not in focus.

Another parameter that influences the blur amount of the PSF, given a fixed sensor plane, is the aperture of the lens. When the aperture of a camera is more open, light near the edges of the lens must be bent and redirected more in order to converge when an object is in focus. When an object is out of focus, light that passes through areas further from the center of the lens is, by geometry, again bent more, producing a larger blur circle than if the aperture were smaller. In order to characterize lens blur for a given object distance and aperture size, H. H. Hopkins [CITATION: H.H. HOPKINS PAPER] defined the w20 parameter.
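The geometric argument above can be made concrete with the thin-lens equation, 1/f = 1/d_object + 1/d_image. The sketch below is a simplified geometric model and not the Hopkins formulation used later; the function name and the example numbers are illustrative assumptions. All lengths share one unit (for example millimetres), and objects are assumed to be further away than the focal length.

```python
def blur_circle_diameter(focal_length, aperture_diameter,
                         focus_distance, object_distance):
    """Diameter of the geometric blur circle on the sensor for a thin lens
    focused at focus_distance, imaging a point at object_distance."""
    # The sensor sits where an object at the focus distance is sharp.
    sensor_distance = 1.0 / (1.0 / focal_length - 1.0 / focus_distance)
    # Where the rays from the actual object would converge.
    image_distance = 1.0 / (1.0 / focal_length - 1.0 / object_distance)
    # Similar triangles: the cone of light has the aperture as its base
    # and its tip at image_distance; the sensor cuts it at sensor_distance.
    return aperture_diameter * abs(image_distance - sensor_distance) / image_distance

# 50 mm lens focused at 2 m, with two aperture sizes.
in_focus = blur_circle_diameter(50.0, 25.0, 2000.0, 2000.0)
too_close = blur_circle_diameter(50.0, 25.0, 2000.0, 1000.0)
too_far = blur_circle_diameter(50.0, 25.0, 2000.0, 4000.0)
small_aperture = blur_circle_diameter(50.0, 12.5, 2000.0, 1000.0)
```

In this model the blur circle vanishes at the focus distance, grows on either side of it, and scales linearly with the aperture diameter.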

The W20 parameter

As described earlier, two parameters influence lens blur for a fixed sensor plane: the object distance and the aperture size. The larger the aperture, the larger the DOF blur. The further the object is from the focal plane, the larger the lens blur. The W20 parameter takes both factors into account and serves as a single parameter for lens blur, which can then be used to calculate the optical transfer function (OTF), the Fourier transform of the PSF [CITATION:HOPKINS].

The W20 parameter is roughly derived as follows. First, we define the following pupil function, which predicts the response of the system to a single ray of light:

The imaginary term accounts for the phase shift that the lens introduces, which bends the light towards the center. The w20 function then describes the attenuation that the lens introduces as a function of the distance from the center of the lens. Note that x and y are coordinates in the plane of the lens: looking through the lens from the front, one looks directly at the x-y plane. In this expression, w20 is a function of x and y. This makes sense because the attenuation of the lens varies with the distance from the center of the lens, where the attenuation of light is usually smallest.

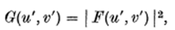

According to Fourier optics, the resulting electric field F at the sensor plane is found by taking the Fourier transform as follows:

The perceived intensity of the image is then the magnitude of the resulting electric field, squared.

This result is similar to the simpler Young's Double-slit Experiment, where the intensity of light at the image plane is the magnitude of the Fourier Transform of the indicator function which indicates the pattern of the slits[CITATION: YOUNG'S DOUBLE SLIT EXPERIMENT]. Since this perceived intensity is that of the response to a single point source of light, this intensity is referred to as the PSF.

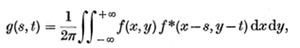

The OTF that we wish to calculate is actually the Fourier Transform of the PSF.

where g represents the OTF. Using Parseval's Theorem, we can then rewrite the OTF as follows:

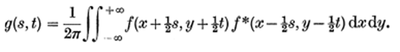

Next, we perform a simple change of variables to obtain the following expression:

Finally, because the expression is circularly symmetric, we can set t to 0 and rewrite g as a function of s only. D(s) then becomes the 1D OTF, which can be expanded to a 2D OTF by symmetry. Note that D(s) is a function of a, which is a function of w20.

The region of integration for D then becomes the intersection of 2 circles, as described in Figure 5, due to the two shifted pupil functions, f, which are radially symmetric.

The simplification of this integral then becomes the following expression, which is then written as the weighted sum of various Bessel functions:

Figure 5: The area of integration includes the intersection of the two circles.
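The chain from pupil function to PSF to OTF can also be checked numerically. The sketch below is not the closed-form Bessel-series result and is not ISET code. It assumes a circular pupil of unit radius with a pure defocus phase of `w20_waves` wavelengths at the pupil edge, so the phase is 2π · w20 · (x² + y²). The grid size and padding are arbitrary choices.

```python
import numpy as np

def defocus_otf(w20_waves, n=128, pupil_fraction=0.25):
    """Return (psf, otf) for a defocused circular pupil on an n x n grid.
    The pupil radius covers pupil_fraction of the half-width of the grid."""
    coords = np.linspace(-1.0, 1.0, n, endpoint=False) / pupil_fraction
    x, y = np.meshgrid(coords, coords)
    r2 = x**2 + y**2
    inside = r2 <= 1.0
    # Pupil function: unit amplitude inside the aperture, defocus phase.
    pupil = inside * np.exp(2j * np.pi * w20_waves * r2)
    # Field at the sensor plane is the Fourier transform of the pupil.
    field = np.fft.fftshift(np.fft.fft2(np.fft.ifftshift(pupil)))
    # PSF is the squared magnitude of the field, normalized to unit sum.
    psf = np.abs(field) ** 2
    psf /= psf.sum()
    # OTF is the Fourier transform of the PSF (zero frequency at the center).
    otf = np.fft.fftshift(np.fft.fft2(np.fft.ifftshift(psf)))
    return psf, otf

psf_focused, otf_focused = defocus_otf(0.0)
psf_defocused, otf_defocused = defocus_otf(1.0)
center = 128 // 2
mtf_focused = abs(otf_focused[center, center + 8])
mtf_defocused = abs(otf_defocused[center, center + 8])
```

Because the OTF equals the autocorrelation of the pupil function, the defocus phase can only lower its magnitude, so `mtf_defocused` is smaller than `mtf_focused` and the defocused PSF has a lower peak.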

Simulating Lens Blur

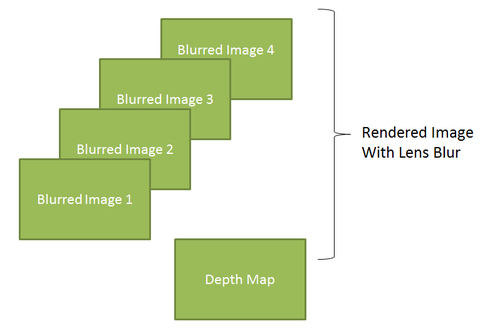

Once the w20 parameter is found, we can simulate lens blur with the following method. To keep the computation feasible, we bin the object distances uniformly. We assume that the aperture of the lens does not change between objects, which is reasonable for a single exposure, so the only attribute of the system that modifies the w20 parameter is object distance. Thus, for every discrete distance, we calculate the corresponding w20 parameter, calculate the OTF given this w20 parameter, and obtain a blurred image corresponding to that specific depth. Next, given the depth map, we combine the different blurred images by selecting, at each pixel, the blurred image that corresponds to that pixel's depth. See Figure 6 for an illustration of this concept.

Figure 6: The combination of different blurred images, each with the blur corresponding to a different depth, and a depth map can be used to render an image with lens blur.
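The binning and compositing steps can be sketched as follows. This is an illustration under stated assumptions, not the ISET implementation: a simple box blur stands in for the OTF-based blur, and the blur radius is assumed to grow linearly with the distance of a depth bin from the focus depth. The function names and parameters are hypothetical.

```python
import numpy as np

def box_blur(image, radius):
    # Stand-in for the OTF-based blur: mean filter over a square window
    # of side 2 * radius + 1, with edge replication at the borders.
    if radius == 0:
        return image.astype(float)
    size = 2 * radius + 1
    padded = np.pad(image, radius, mode="edge")
    out = np.zeros(image.shape, dtype=float)
    for dy in range(size):
        for dx in range(size):
            out += padded[dy:dy + image.shape[0], dx:dx + image.shape[1]]
    return out / (size * size)

def depth_dependent_blur(image, depth, focus_depth, n_bins=4, max_radius=3):
    """Blur the image once per depth bin, then pick, for every pixel,
    the blurred image that belongs to that pixel's depth bin."""
    edges = np.linspace(depth.min(), depth.max(), n_bins + 1)
    bin_index = np.digitize(depth, edges[1:-1])  # values 0 .. n_bins - 1
    centers = 0.5 * (edges[:-1] + edges[1:])
    max_offset = np.abs(centers - focus_depth).max()
    result = np.zeros(image.shape, dtype=float)
    for b in range(n_bins):
        mask = bin_index == b
        if not mask.any():
            continue
        offset = abs(centers[b] - focus_depth)
        radius = int(round(max_radius * offset / max_offset)) if max_offset > 0 else 0
        result[mask] = box_blur(image, radius)[mask]
    return result

# Toy scene: left half near (depth 1), right half far (depth 5).
image = np.random.default_rng(0).random((8, 8))
depth = np.where(np.arange(8) < 4, 1.0, 5.0) * np.ones((8, 1))
blurred = depth_dependent_blur(image, depth, focus_depth=1.5)
```

With the focus set on the near bin, the left half of `blurred` is unchanged and the right half is blurred. A hard per-pixel selection like this ignores blur leaking across depth edges, which is a limitation of the simple compositing scheme.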


I attempted to simulate this lens blur, with the previously described algorithm in ISET. However, the implementation is not yet feasible, since one of the ISET functions, defocusMTF, takes a very long time to run. This issue should be looked at in detail in the future, to allow for an ISET simulation of DOF lens blur.

Results

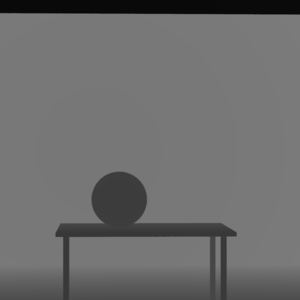

I was able to successfully render a basic scene composed of a table with a sphere on top of it, inside a rectangular indoor room. D65 was used as the illuminant, and all surfaces are glossy. The simulated camera was placed roughly in front of the table. See Figure 7 for an example monitor render of this scene, taken directly from RenderToolBox. See Figure 8 for the depth-map rendering of the same table-sphere scene.

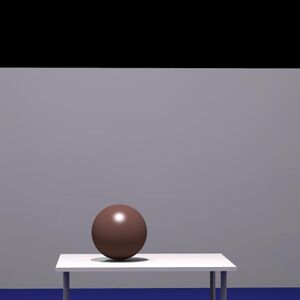

After rendering the table-sphere scene in RenderToolBox, the radiance values were imported into ISET, along with the illuminant, D65. This scene was then fed into a standard camera image processing pipeline in ISET. See Figure 9 for the ISET rendering of the table-sphere scene. Although the ISET rendering for DOF lens blur is not completed, renderings of this type were produced in Photoshop, as inspiration for what is possible with depth information and rendering data. See Figure 10 for examples of these results with various blur amounts and focal points.

Figure 7: A monitor rendering of the table-sphere scene.

Figure 8: Depth-map of the table-sphere scene. Darker areas are closer to the camera, while lighter areas are farther away.

Figure 9: ISET rendering of table-sphere.

Figure 10: ISET rendering of table-sphere, with Photoshop depth blurring, at various focal points, and aperture sizes.

Discussion

As illustrated, I was able to render an example scene in RenderToolBox, render a depth-map, and feed this data into ISET. However, this is only the first step of this simulation process, and many other types of renderings are possible. First, a proper DOF lens blur rendering must be achieved in ISET. Although the Photoshop renderings look visually appealing, they offer little control over, or precision in, the blurring operation. Simulation in ISET would be much more scientifically correct, and also more convenient for research purposes.

Moreover, after proper integration into ISET, further simulations can be performed. For example, synthetic apertures, light-fields, and even multiple-camera systems can be simulated. As an inspiration, see Figure 11 for an example multiple-camera set of renderings. These multiple-camera simulations are particularly useful because binocular camera systems, or even expensive camera arrays, can be simulated and analyzed easily without the tedious and costly setup of a true camera array. Another topic to explore is view-point synthesis. Since we can produce practically arbitrary view-points with cameras, the accuracy of view-point synthesis algorithms such as the ones described in VIEWPOINT SYNTHESIS PAPER[CITATION: viewpoint synthesis] can be explored. Since we have access to the ground truth images, algorithm performance can be assessed more accurately. Although the camera view-point and orientation can be placed almost arbitrarily, issues with rendering arise if the view-point is too extreme.

Figure 11: Example multiple-camera rendering.

Conclusion

As illustrated, I was able to create an example scene in RenderToolBox and feed the radiance data and depth-map into ISET. Although I could not yet properly render a DOF blurred image, such a task is feasible and will be completed in the near future. Experimentation with view-point variation, together with the ability to simulate different apertures, allows for future renderings of synthetic apertures, multiple-camera systems, and even light-field cameras. Moreover, more complex scenes could be rendered in the future, perhaps with the help of Maya, a 3D modeling software package. Synthetic rendering of multi-spectral scenes with RenderToolBox seems promising and will be pursued further in the future.