The Statistical Fingerprint of AI-Generated Images

The Blur Between Real and Artificial

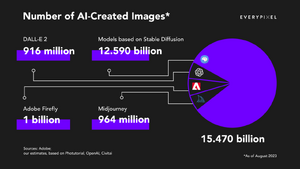

In 2023, the Sony World Photography Awards awarded a prize in the Creative category to an image titled "The Electrician", a haunting black-and-white portrait of two women. However, the artist, Boris Eldagsen, refused the award, revealing that the work was not a photograph at all but a synthetic image generated by AI. This event marked a turning point: synthetic imagery had crossed a threshold of fidelity at which even expert judges could no longer distinguish pixels formed from captured photons from artificially generated ones.[1]

This blurring of reality is compounded by the unprecedented scale of production. Recent reports indicate that in just 1.5 years, generative AI models produced as many images (approximately 15 billion) as traditional photography produced in its first 150 years.[2] We are rapidly entering an era in which a significant portion of digital visual data is generated artificially rather than captured by a physical image sensing system.

Research Question and Project Goals

For the general public, the inability to distinguish real from synthetic images raises concerns about misinformation and about the erosion of human creativity. For Image Systems Engineering, however, artificially generated images also raise a serious question of engineering safety. Developers of autonomous systems, such as autonomous vehicles (AVs), are increasingly turning to generative AI to create training data for perception systems, bridging the gap between expensive real-world data collection and the need for massive datasets of edge cases such as car accidents and severe weather. Although these digital twins may appear realistic to human observers, they often fail to behave like the output of a real sensor, lacking the correct noise, spectral, or optical properties. Artificially generated data therefore introduces a high-risk domain gap in which autonomous systems are trained on hallucinations rather than physics. This poses a critical question: can we discern the real from the artificial?

This project conducts a forensic analysis to determine whether AI-generated images can be distinguished from camera-simulated images on the basis of radiometric statistics. In this investigation, we ignore the semantic content (whether the car looks like a car) and focus entirely on the physical statistics (how the image was formed from the radiance source). By comparing a physical radiance dataset (ISET3D physically based ray tracing[3]) and a simulated camera image dataset (ISETCam[4]) against an artificially generated image dataset (Stable Diffusion v1.5[5]), we aim to quantify the statistical fingerprints of AI generation across four domains:

- Spatial Statistics: Texture and frequency distribution.

- Photometric Statistics: Signal-dependent noise response.

- Spectral Statistics: Inter-channel color correlation.

- Optical Statistics: Point spread function (PSF) and diffraction.
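
As a rough illustration of what the first three domains measure, the sketch below computes one simple statistic for each: a radially averaged power spectrum (spatial), a patch-wise mean-variance fit (photometric), and an inter-channel correlation matrix (spectral). It is a minimal sketch using NumPy only and is not the pipeline used in this project. The function names, the 8-pixel patch size, and the affine noise model (variance = gain × mean + offset, which holds only for locally flat image regions) are assumptions made for illustration. The optical domain (PSF estimation) is not covered here.

```python
import numpy as np


def radial_power_spectrum(gray, n_bins=32):
    """Spatial: radially averaged power spectrum of a 2-D grayscale image.

    Returns bin-center frequencies (cycles/pixel, 0 to 0.5) and mean power.
    """
    gray = np.asarray(gray, dtype=float)
    h, w = gray.shape
    spectrum = np.fft.fftshift(np.fft.fft2(gray - gray.mean()))
    power = np.abs(spectrum) ** 2

    # Radial frequency of every FFT coefficient, in cycles per pixel.
    fy = np.fft.fftshift(np.fft.fftfreq(h))[:, None]
    fx = np.fft.fftshift(np.fft.fftfreq(w))[None, :]
    radius = np.hypot(fx, fy).ravel()

    edges = np.linspace(0.0, 0.5, n_bins + 1)
    idx = np.digitize(radius, edges) - 1
    valid = (idx >= 0) & (idx < n_bins)  # drop corners at or beyond 0.5

    sums = np.bincount(idx[valid], weights=power.ravel()[valid], minlength=n_bins)
    counts = np.bincount(idx[valid], minlength=n_bins)
    centers = 0.5 * (edges[:-1] + edges[1:])
    return centers, sums / np.maximum(counts, 1)


def patch_mean_variance(gray, patch=8):
    """Photometric: mean and sample variance of non-overlapping patches."""
    gray = np.asarray(gray, dtype=float)
    h, w = gray.shape
    h, w = h - h % patch, w - w % patch  # crop to a whole number of patches
    blocks = gray[:h, :w].reshape(h // patch, patch, w // patch, patch)
    means = blocks.mean(axis=(1, 3)).ravel()
    variances = blocks.var(axis=(1, 3), ddof=1).ravel()
    return means, variances


def fit_noise_model(means, variances):
    """Least-squares fit of the assumed model: variance = gain * mean + offset."""
    gain, offset = np.polyfit(means, variances, 1)
    return gain, offset


def channel_correlation(rgb):
    """Spectral: 3x3 Pearson correlation matrix between the R, G, B channels."""
    flat = np.asarray(rgb, dtype=float).reshape(-1, 3)
    return np.corrcoef(flat, rowvar=False)
```

For photon-limited sensor data in flat regions, the fitted gain would be expected to be positive, since shot-noise variance grows with signal level. An image whose patch variance is unrelated to its mean would stand out under this measure, although scene texture also contributes to patch variance and would need to be masked or modeled in practice.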