The Statistical Fingerprint of AI-Generated Images

Introduction

The Blur Between Real and Artificial

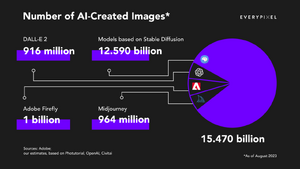

In 2023, the Sony World Photography Awards awarded a prize in the Creative category to an image titled "The Electrician". The image presented a haunting black-and-white portrait of two women. However, the artist, Boris Eldagsen, declined the award, revealing that the work was not a photograph at all but a synthetic creation generated by AI. This event marked a significant turning point: synthetic imagery had crossed a threshold of fidelity at which even expert judges could no longer distinguish images formed by photons from artificially generated pixels.[1] This blurring of reality is compounded by the unprecedented scale of production. Recent reports indicate that in just 1.5 years, generative AI models have produced as many images as traditional photography produced in its first 150 years (approximately 15 billion images).[2] Digital imaging is rapidly entering an era in which a significant portion of visual data is artificially generated rather than captured by a physical imaging system.

Research Question and Project Goals

For the general public, the inability to distinguish real from synthetic raises concerns about misinformation, as well as a loss of intrinsic human creativity. For Image Systems Engineering, however, artificially generated images also raise a serious question of engineering safety. Developers of autonomous systems, such as autonomous vehicles (AVs), increasingly turn to generative AI to create training data for perception systems, compensating for the scarcity of expensive real-world data, especially edge cases such as car accidents and severe weather. Although these digital twins may appear realistic to human observers, they often fail to behave like real sensor data, lacking the correct noise, spectral, or optical properties. Artificially generated data therefore introduces a high-risk domain gap in which autonomous systems are trained on hallucinations rather than physics. This raises a critical question: can we discern the real from the artificial?

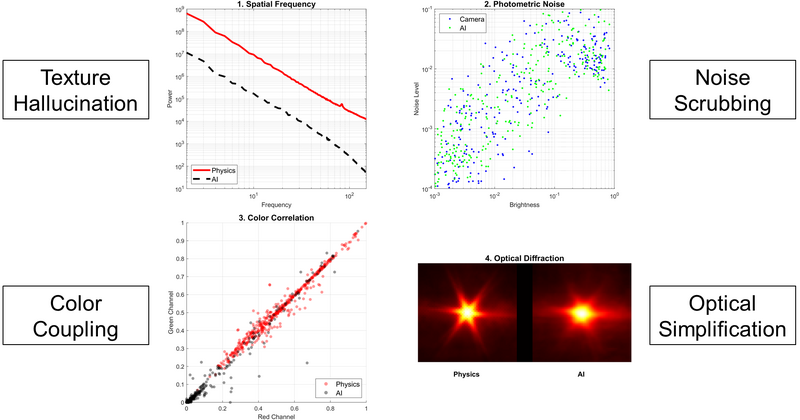

This project conducts a forensic analysis to determine whether AI-generated images can be distinguished from camera-simulated images based on radiometric statistics. In this investigation, the semantic content (whether the car looks like a car) is ignored. Instead, the focus is on the physical statistics (how the image was formed from the radiance source). By comparing a physical radiance dataset (ISET3D PBRT[3]) and a simulated camera photograph dataset (ISETCam[4]) against an artificially generated image dataset (Stable Diffusion v1.5[5]), the project aims to quantify the statistical fingerprints of AI generation across four domains:

- Spatial Statistics: Texture and frequency distribution.

- Photometric Statistics: Signal-dependent noise response.

- Spectral Statistics: Inter-channel color correlation.

- Optical Statistics: Point spread function (PSF) and diffraction.

Background and Related Work

The Proliferation of Data and Machine Learning Models

To understand the challenge of distinguishing real from artificial imagery, one must first understand the mechanisms that enable modern AI generation and the forensic tools previously developed to detect them. The recent explosion in synthetic imagery is driven by two converging factors: the aggregation of massive image datasets and the development of latent diffusion models.

First, the capabilities of modern generative models are directly tied to the scale of their training data. In the last decade, the internet has become a vast repository of visual information, allowing researchers to scrape billions of image-text pairs. Foundational models like Stable Diffusion were trained on subsets of LAION-5B (Large-scale Artificial Intelligence Open Network), a dataset containing 5.85 billion CLIP-filtered image-text pairs.[6] Similarly, proprietary models like Adobe Firefly rely on massive libraries of professional stock photography (estimated at 300+ million images), while OpenAI’s DALL-E 3 is estimated to use hundreds of millions of licensed and public images.[2][7] This scale allows models to learn a statistical approximation of reality by ingesting virtually every visual concept captured in the history of digital photography.

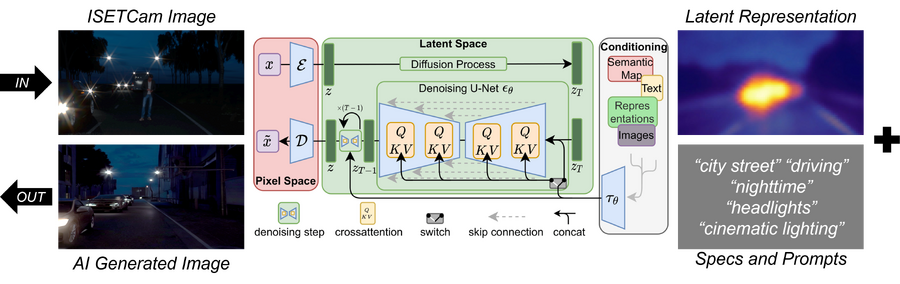

Second, the ability to exploit this massive data was unlocked by a fundamental shift in model architecture. While early generative models struggled with high-resolution synthesis, Latent Diffusion Models (LDMs) represented a breakthrough in efficiency and fidelity. The model analyzed in this study, Stable Diffusion v1.5, is based on the LDM architecture proposed by Rombach et al. (2022).[5] Unlike pixel-based diffusion, which is computationally expensive, LDMs operate in a compressed latent space. The process involves two main components. First, an autoencoder compresses the input image into a lower-dimensional latent representation, reducing computational cost while preserving semantic detail. Second, in the latent space, the model is trained to reverse a gradual noising process: Gaussian noise is iteratively added to the latent vector (forward diffusion), and a neural network (typically a time-conditional U-Net) is trained to predict and remove this noise (reverse diffusion) to reconstruct the clean latent vector. The U-Net uses cross-attention layers to condition the generation on text prompts (for example, "a car on a dark road"). Once denoising is complete, the decoder reconstructs the final pixel-space image. Because the model reconstructs the image from learned statistical patterns rather than physical light transport, it risks hallucinating textures that look plausible to the human eye but lack physical integrity.
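The forward (noising) process described above has a closed form: after $t$ steps, the noisy latent is a weighted blend of the clean latent and Gaussian noise, $z_t = \sqrt{\bar\alpha_t}\, z_0 + \sqrt{1 - \bar\alpha_t}\, \epsilon$. The NumPy sketch below illustrates this under stated assumptions: a DDPM-style "scaled linear" $\beta$ schedule with an assumed $\beta$ range, and a hypothetical 4×64×64 latent. Neither value is taken from this study.

```python
import numpy as np

def alpha_bar_schedule(num_steps=1000, beta_start=0.00085, beta_end=0.012):
    """Cumulative signal fraction alpha_bar_t for a 'scaled linear' beta schedule (assumed values)."""
    betas = np.linspace(np.sqrt(beta_start), np.sqrt(beta_end), num_steps) ** 2
    return np.cumprod(1.0 - betas)

def forward_diffuse(z0, t, alpha_bar, rng):
    """Sample z_t ~ N(sqrt(alpha_bar_t) * z0, (1 - alpha_bar_t) * I) in one shot."""
    noise = rng.standard_normal(z0.shape)
    z_t = np.sqrt(alpha_bar[t]) * z0 + np.sqrt(1.0 - alpha_bar[t]) * noise
    return z_t, noise

rng = np.random.default_rng(0)
alpha_bar = alpha_bar_schedule()
z0 = rng.standard_normal((4, 64, 64))  # hypothetical latent: 4 channels, 64 x 64
z_t, eps = forward_diffuse(z0, 500, alpha_bar, rng)
# The denoising U-Net is trained to predict `eps` from (z_t, t, text embedding);
# with that prediction, z0 can be recovered by inverting the blend above.
```

The signal fraction $\bar\alpha_t$ decays monotonically toward zero. Near the end of the schedule, the latent is almost pure noise, which is why generation can start from random noise.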

Past Work in Image Forensics

The field of image forensics has a long history of detecting manipulated imagery, traditionally focusing on manual edits (for example, in Photoshop) and, more recently, deepfakes. While effective in their respective domains, these methods rely on assumptions that may not hold for modern generative models:

Geometric Forensics: Pioneering work by Hany Farid and colleagues focused on physical inconsistencies in manually manipulated images. Their methods analyzed geometric constraints, such as the consistency of cast shadows and lighting directions.[8] For example, if a person was inserted into a photo, the lines connecting their shadow to the corresponding points on their body often failed to converge on the same light source as those of other objects in the scene. While effective against cheap fakes, these geometric checks depend on scene semantics and are difficult to automate at scale for subtle synthetic images.
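The shadow test described above reduces to a small geometry problem. Each line from a shadow point through its corresponding object point should pass through the (projected) light source. The sketch below assumes hand-labeled 2D point pairs; the function name and inputs are hypothetical and do not reproduce Farid's published implementation. It finds the least-squares intersection of the lines and reports how far the lines miss that point.

```python
import numpy as np

def shadow_light_consistency(shadow_pts, object_pts):
    """Least-squares intersection of shadow->object lines, and the RMS line-to-point distance."""
    p = np.asarray(shadow_pts, dtype=float)
    d = np.asarray(object_pts, dtype=float) - p
    d /= np.linalg.norm(d, axis=1, keepdims=True)  # unit direction of each line
    # Projector onto each line's normal: I - d d^T
    proj = np.eye(2)[None] - d[:, :, None] * d[:, None, :]
    # Minimizing sum_i |P_i (x - p_i)|^2 gives (sum_i P_i) x = sum_i P_i p_i
    A = proj.sum(axis=0)
    b = (proj @ p[:, :, None]).sum(axis=0).ravel()
    light = np.linalg.solve(A, b)
    # Perpendicular distance from the estimated light position to every line
    residuals = np.linalg.norm((proj @ (light - p)[:, :, None]).squeeze(-1), axis=1)
    return light, np.sqrt(np.mean(residuals ** 2))

# Consistent scene: every object point lies between its shadow and the light at (10, 5)
light_true = np.array([10.0, 5.0])
shadows = np.array([[0.0, 0.0], [4.0, -3.0], [-2.0, 1.0]])
objects = shadows + 0.3 * (light_true - shadows)
light, rms = shadow_light_consistency(shadows, objects)
```

A near-zero RMS means the shadows agree on one light source. A large RMS flags an inserted object, such as the third point pair after it is displaced by two pixels.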

Frequency and Pixel Statistics: With the rise of Generative Adversarial Networks (GANs), researchers pivoted to detecting invisible artifacts. Frequency analysis revealed that the upsampling layers of GANs often leave unnatural, periodic high-frequency patterns in the Fourier domain.[9] More recently, methods like Diffusion Reconstruction Error (DIRE) attempt to detect diffusion-generated images by checking whether an image can be easily reconstructed by a pre-trained diffusion model, based on the observation that real images tend to have higher reconstruction errors than synthetic ones.[10]
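One commonly cited source of these upsampling artifacts is strided transposed convolution, which is equivalent to inserting zeros between pixels and then filtering. The zero-insertion step alone creates periodic copies (replicas) of the image spectrum, and the learned filter must suppress them. Any imperfect suppression leaves periodic peaks. The minimal NumPy sketch below illustrates only the replica effect and is not a detector.

```python
import numpy as np

def zero_insert_upsample(img, factor=2):
    """Upsample by inserting zeros between samples (the first stage of a strided transposed conv)."""
    h, w = img.shape
    up = np.zeros((h * factor, w * factor), dtype=float)
    up[::factor, ::factor] = img
    return up

rng = np.random.default_rng(0)
img = rng.random((64, 64))
spec = np.abs(np.fft.fft2(img))
spec_up = np.abs(np.fft.fft2(zero_insert_upsample(img)))
# The 128 x 128 spectrum contains four exact copies of the 64 x 64 spectrum.
# For example, the DC term reappears as a spurious peak at the Nyquist corner (64, 64).
print(spec_up[64, 64], spec[0, 0])
```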

The Radiometric Gap: While existing methods detect semantic errors (shadows) or digital artifacts (frequency peaks), they rarely interrogate the radiometric formation of the image. Real photographs are created by specific physical processes: photons hitting a sensor, shot noise governed by Poisson statistics, and diffraction set by the aperture. Generative AI images, however, are born from denoising a latent vector. This project addresses this gap by proposing radiometric forensics: detecting AI-generated images not by what they look like, but by how physically plausible their light statistics are compared to a camera simulation.

Image Generation Methodology

To isolate the differences between physical radiance, image sensing, and generative AI, a dataset of 243 unique night-driving scenes was organized into three aligned sets: Physical Ground Truth (Set A), Camera Simulation (Set B), and Generative AI (Set C).

Set A: Physical Radiance (Ground Truth)

The foundation of the dataset is a collection of high-dynamic-range (HDR) spectral radiance maps derived from the ISETHDR driving scene database.[3] Unlike standard RGB images, these scenes contain the full spectral energy distribution of light at every pixel, modeled via physically based ray tracing (PBRT). Nighttime driving scenes were specifically selected because they present a particularly difficult challenge for imaging systems: a high dynamic range in which bright light sources (headlights, streetlights) can be five orders of magnitude more intense than the surrounding dark regions. This extreme contrast produces optical flare that can obscure vulnerable road users, such as pedestrians or cyclists, making accurate simulation critical for safety validation.[11] For each of the 243 scenes, four independent lighting components were extracted: headlights, streetlights, other environmental lights, and skylight. To create a realistic night-driving environment, these components were combined using a weighting vector that prioritized vehicle headlights while maintaining low-light ambient conditions. The resulting spectral radiance map serves as the physical ground truth, representing the raw photons arriving at the camera lens before any optical or sensor degradation.
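Because light adds linearly, combining the four lighting components reduces to a weighted sum of spectral radiance maps. The sketch below assumes each component is stored as an array of shape (rows, cols, wavelengths) sampled every 10 nm from 400 to 700 nm. The component arrays are random stand-ins, and the weights are hypothetical placeholders, not the study's weighting vector.

```python
import numpy as np

def combine_light_groups(components, weights):
    """Weighted sum of per-light-group spectral radiance maps.

    components: (groups, rows, cols, wavelengths); weights: (groups,)
    returns:    (rows, cols, wavelengths)
    """
    components = np.asarray(components, dtype=float)
    weights = np.asarray(weights, dtype=float)
    return np.tensordot(weights, components, axes=1)  # contracts the group axis

wavelengths = np.arange(400, 701, 10)  # nm, 31 samples (assumed sampling)
rng = np.random.default_rng(0)
# Stand-ins for: headlights, streetlights, other lights, skylight
groups = rng.random((4, 8, 8, wavelengths.size))
weights = [1.0, 0.1, 0.05, 0.001]  # hypothetical: headlights dominate, dim ambient light
radiance = combine_light_groups(groups, weights)
```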

Set B: Camera Simulation (The Baseline)

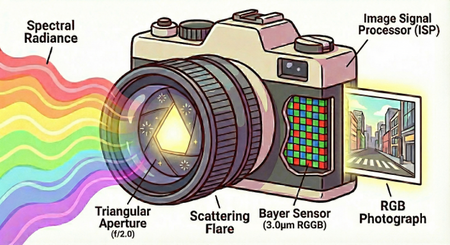

To generate photorealistic baselines, the spectral radiance from Set A was passed through a virtual camera model built with ISETCam.[4] This stage introduces the physical imperfections inherent to optical imaging systems.[11] The specifications of the camera model are described below:

- Optics: A 4 mm focal length lens with a wide aperture was simulated. Crucially, the aperture was modeled as a triangle (3 sides) rather than a perfect circle, following the flare simulation methods validated in previous work.[11] This introduces a distinct 6-point starburst diffraction pattern around bright sources (like headlights), serving as a known optical fingerprint. Additionally, lens scattering was modeled by introducing randomized dust and scratches into the aperture function, as previously demonstrated for accurate nighttime flare simulation.[11]

- Sensor: The optical image was captured by a simulated Bayer RGGB sensor whose pixel size matches the specifications of commercial sensors analyzed in previous studies.[11]

- Processing: The raw sensor data underwent a standard Image Signal Processor (ISP) pipeline, including demosaicing and global tone mapping to mimic the non-linear response of consumer photography.
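The 6-point starburst follows from Fraunhofer (far-field) diffraction, in which the intensity PSF is proportional to the squared magnitude of the Fourier transform of the aperture. Each straight edge spreads energy perpendicular to itself, in both directions, so a triangle's three edges produce six spikes. The monochromatic NumPy sketch below is a simplification and is not ISETCam's optics model. It ignores wavelength, focal length, defocus, and the dust and scratch scattering. The grid size and triangle size are arbitrary assumptions.

```python
import numpy as np

def triangular_aperture(n=256, side=128):
    """Binary equilateral-triangle pupil (apex up, horizontal base) centred in an n x n grid."""
    height = side * np.sqrt(3) / 2
    y, x = np.mgrid[0:n, 0:n].astype(float)
    y -= n / 2
    x -= n / 2
    apex, base = -height / 2, height / 2  # row offsets of the apex and the base
    half_width = (y - apex) / height * (side / 2)  # grows linearly from 0 at the apex
    inside = (y >= apex) & (y <= base) & (np.abs(x) <= half_width)
    return inside.astype(float)

def fraunhofer_psf(aperture):
    """Far-field intensity PSF: |FFT(aperture)|^2, centred and normalized to unit sum."""
    field = np.fft.fftshift(np.fft.fft2(aperture))
    psf = np.abs(field) ** 2
    return psf / psf.sum()

psf = fraunhofer_psf(triangular_aperture())
c = psf.shape[0] // 2
# The horizontal base edge throws a spike along the vertical frequency axis;
# the horizontal axis lies between spikes, so it carries much less energy.
spike = psf[c + 5:c + 40, c].sum()
between = psf[c, c + 5:c + 40].sum()
```

A circular aperture would give the rotationally symmetric Airy pattern instead. The spike energy along the vertical axis, compared with the energy along the horizontal axis, is a simple way to confirm the edge-driven structure.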

Set C: Generative AI (The Digital Twin)

The final dataset consists of synthetic reconstructions of Set B generated by Stable Diffusion v1.5.[5] An Image-to-Image (Img2Img) pipeline was used so that the AI respected the broad geometry of the original scene, filling in fine texture and lighting details without hallucinating new objects or structural deviations. The model was conditioned with the text prompt "photorealistic night driving scene, city street, car headlights, streetlights, highly detailed, 8k, cinematic lighting" and with a negative prompt to suppress artifacts: "blur, noise, grain, cartoon". A denoising strength of 0.5 was used. This critical parameter sets a balance point at which the model retains roughly half of the original structural information while synthesizing new textures, preventing it from completely overriding the scene geometry. Generation was performed at the model's native resolution with a guidance scale of 7.5, producing images that appear similar to the camera photos but are radiometrically synthetic.
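The denoising strength and the guidance scale each have a simple arithmetic meaning. The sketch below mirrors how common img2img implementations (for example, Hugging Face diffusers) are understood to interpret them. It is an illustration under that assumption, not the code used in this study, and the 50-step count is hypothetical. In such implementations, the negative prompt's text embedding is typically used as the "unconditional" branch of classifier-free guidance.

```python
def img2img_schedule(num_inference_steps, strength):
    """Return (first_step_index, steps_run) for an img2img run with the given strength."""
    init_steps = min(int(num_inference_steps * strength), num_inference_steps)
    first_step = max(num_inference_steps - init_steps, 0)
    return first_step, num_inference_steps - first_step

def classifier_free_guidance(eps_uncond, eps_cond, guidance_scale):
    """Push the noise prediction away from the unconditional/negative branch toward the prompt."""
    return eps_uncond + guidance_scale * (eps_cond - eps_uncond)

# With a hypothetical 50-step scheduler, strength 0.5 skips the 25 noisiest steps:
# the input latent is noised to the midpoint of the schedule and only 25 steps are denoised.
print(img2img_schedule(50, 0.5))  # (25, 25)
print(classifier_free_guidance(0.0, 1.0, 7.5))  # 7.5
```

With a guidance scale of 7.5, the prediction is extrapolated well beyond the prompt-conditioned estimate, and the components that the negative prompt ("noise, grain") would encourage are pushed away.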

The resulting dataset provides 243 aligned triplets. Subtracting the AI generation (Set C) from the camera simulation (Set B) reveals some initial visual differences. As shown in Figure 7, differences are most pronounced around high-intensity light sources, where the AI fails to replicate the complex diffraction spikes created by the triangular aperture, defaulting instead to generic Gaussian blooms. Significant, yet often invisible, differences are revealed in the following section through radiometrically motivated statistical analysis.

Feature Extraction and Results

To quantify the radiometric differences between the PBRT radiance maps, ISETCam photos, and Stable Diffusion-generated images, statistical features were extracted across four domains: spatial frequency, photometric noise, spectral correlation, and optical diffraction.

Spatial Statistics: Power Spectral Density

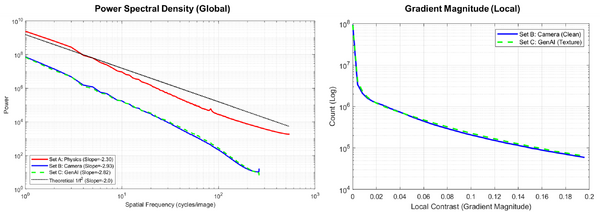

The spatial frequency content of natural scenes typically follows a power law, $P(f) \propto 1/f^{\alpha}$, in which spectral power $P$ decreases as spatial frequency $f$ increases. The Modulation Transfer Function (MTF) of an optical system acts as a low-pass filter, attenuating high frequencies and steepening this slope.[12] The Power Spectral Density (PSD) was computed by averaging the squared magnitude of the 2D Discrete Fourier Transform (DFT) of the luminance channel $I_n$ across all $N$ images:

$$P(u, v) = \frac{1}{N} \sum_{n=1}^{N} \left| \mathcal{F}\{I_n\}(u, v) \right|^2$$

By fitting a line to the log-log plot of the radially averaged power $P(f)$ versus radial frequency $f = \sqrt{u^2 + v^2}$, the spectral slope $s = -\alpha$ was extracted:

$$\log P(f) = s \log f + c$$

As shown in Figure 8 (left), the physical ground truth (Set A) exhibited a slope of -2.30, consistent with sharp natural scene statistics. The camera simulation (Set B) had a significantly steeper slope, reflecting the optical blurring inherent to the simulated lens. Interestingly, the GenAI images (Set C) closely mimicked the camera: the two slopes (-2.82 and -2.93) differ by only about 0.1. This statistic is therefore not a useful discriminator between camera and AI images, because the diffusion model effectively replicates the blur aesthetic of a photograph. However, it successfully differentiates both from raw physical radiance, confirming that the AI generates processed photographs rather than scenes of physical radiance.
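The PSD and slope fit can be sketched in NumPy as follows. The sketch makes three assumptions: the luminance images are stacked into one array of equal-sized frames; radial frequency is binned by rounding to whole cycles per image; and the fit runs from 1 cycle per image up to just below Nyquist. The synthetic power-law generator is included only to check that the fit recovers a known slope. None of this is necessarily the exact procedure used in the study.

```python
import numpy as np

def radial_psd(images):
    """Mean |DFT|^2 over an image stack, averaged over rings of equal radial frequency."""
    images = np.asarray(images, dtype=np.float64)  # shape (N, H, W), luminance only
    h, w = images.shape[-2:]
    spectra = np.fft.fftshift(np.fft.fft2(images), axes=(-2, -1))
    power = np.mean(np.abs(spectra) ** 2, axis=0)  # P(u, v), DC at (h // 2, w // 2)
    y, x = np.indices((h, w))
    radius = np.rint(np.hypot(y - h // 2, x - w // 2)).astype(int)
    counts = np.bincount(radius.ravel())
    sums = np.bincount(radius.ravel(), weights=power.ravel())
    return sums / np.maximum(counts, 1)  # index = radial frequency in cycles per image

def spectral_slope(images):
    """Slope s of log10 P(f) = s * log10 f + c, fitted between DC and the Nyquist ring."""
    images = np.asarray(images)
    psd = radial_psd(images)
    f_max = min(images.shape[-2:]) // 2
    f = np.arange(1, f_max)  # skip DC (f = 0) and the partially filled corner rings
    slope, _ = np.polyfit(np.log10(f), np.log10(psd[1:f_max]), 1)
    return slope

def power_law_noise(n, alpha, rng):
    """Synthetic test image whose power spectrum falls off as 1/f^alpha."""
    fy = np.fft.fftfreq(n)[:, None]
    fx = np.fft.fftfreq(n)[None, :]
    f = np.hypot(fy, fx)
    f[0, 0] = np.inf  # zero out the DC term
    white = np.fft.fft2(rng.standard_normal((n, n)))
    return np.real(np.fft.ifft2(white * f ** (-alpha / 2)))

rng = np.random.default_rng(0)
stack = np.stack([power_law_noise(128, 2.3, rng) for _ in range(16)])
print(spectral_slope(stack))  # should land close to -2.3
```

Averaging the power across the whole stack before taking logarithms keeps the fit stable at low frequencies, where each ring contains only a few pixels.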

Local Spatial Statistics: Gradient Magnitude

While the Power Spectral Density provides a global view of frequency content, it can be dominated by large-scale structures. To investigate spatial statistics at a finer granularity, the gradient magnitude was analyzed as a complementary metric. This feature measures the strength of local contrast transitions at every pixel $(x, y)$ and is defined as the magnitude of the gradient vector:

$$|\nabla I(x, y)| = \sqrt{\left(\frac{\partial I}{\partial x}\right)^2 + \left(\frac{\partial I}{\partial y}\right)^2}$$

The distribution of these magnitudes was computed for the camera simulation (Set B) and the GenAI images (Set C) to determine whether the local edge statistics respect the physical limits of the optical system. As shown in Figure 8 (right), the distributions for Set B (Camera) and Set C (GenAI) were closely aligned, further confirming that the AI effectively mimics the general look of the photograph. However, a subtle but distinct divergence is visible in the middle-to-high contrast range (gradient magnitudes of 0.06 to 0.18). The GenAI images consistently exhibited more pixels with strong gradients than the camera simulation. This increased prevalence of high-contrast edges in Set C suggests that the diffusion model hallucinates textures that are slightly sharper than the simulated lens blur would physically allow. While the AI learned the global blur (as seen in the PSD analysis), it fails to fully replicate the local softness imposed by the optical point spread function. As a result, some texture regions contain edges sharper than the simulated optics can physically produce.
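A minimal sketch of the gradient statistics. It assumes luminance normalized to [0, 1], which is consistent with the 0.06 to 0.18 range quoted above, and it uses `np.gradient` (central differences). The section does not specify a derivative operator, so Sobel or forward differences would be equally plausible choices. The histogram range and the band limits are parameters, not values fixed by the study.

```python
import numpy as np

def gradient_magnitude(lum):
    """|grad I| per pixel via np.gradient (central differences, one-sided at the borders)."""
    gy, gx = np.gradient(np.asarray(lum, dtype=float))  # d/drow (y) first, then d/dcol (x)
    return np.hypot(gx, gy)

def gradient_histogram(lum, bins=60, value_range=(0.0, 0.3)):
    """Histogram of gradient magnitudes: the distribution compared between Set B and Set C."""
    return np.histogram(gradient_magnitude(lum), bins=bins, range=value_range)

def band_fraction(lum, lo=0.06, hi=0.18):
    """Fraction of pixels whose gradient magnitude lies in [lo, hi)."""
    g = gradient_magnitude(lum)
    return float(np.mean((g >= lo) & (g < hi)))

# A horizontal ramp rising 0.1 per pixel has |grad I| = 0.1 everywhere, inside the band.
ramp = np.tile(0.1 * np.arange(32.0), (32, 1))
print(band_fraction(ramp))
```

When `band_fraction` is compared across matched Set B and Set C images, the divergence described above becomes a single number per image.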

Photometric Statistics: Signal-Dependent Noise

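In a physical imaging system, noise is signal-dependent. Photon shot noise follows a Poisson distribution, in which the variance increases linearly with the mean intensity ($\sigma^2 \propto \mu$).[12] To test for this physical signature, the local noise level (the standard deviation $\sigma$, the square root of the local variance) was analyzed against the local mean intensity $\mu$ in non-overlapping pixel blocks. The relationship is modeled as a power law fitted in log-log space (for pure shot noise, $\sigma = \sqrt{\mu}$):

$$\log \sigma = \beta \log \mu + c$$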

The camera simulation (Set B) exhibited a steep slope of 1.94 (Figure 9, left). This is far steeper than the theoretical Poisson slope of 0.5, indicating that the non-linear processing in the Image Signal Processor (ISP), including gamma compression and tone mapping, reshapes the noise-versus-intensity relationship rather than leaving raw shot noise intact. In contrast, the GenAI images (Set C) showed a much flatter slope of 0.80 (Figure 9, right). This result suggests that the noise in the AI images is largely a uniform texture added by the diffusion process, distinct from the physical coupling of light intensity and photon arrival statistics found in real sensors. The noise slope therefore appears to be a highly useful discriminator between the datasets.
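The block statistics and the log-log fit can be sketched as follows. The 8×8 block size is an assumption, because this section does not state the block size used. The synthetic photon-count image keeps the mean constant within each block, so the block variance reflects noise only. Real images also contain scene texture inside each block, and that texture inflates $\sigma$.

```python
import numpy as np

def block_mean_std(img, block=8):
    """Mean and standard deviation of each non-overlapping block x block tile."""
    img = np.asarray(img, dtype=float)
    h = img.shape[0] - img.shape[0] % block  # crop to a whole number of tiles
    w = img.shape[1] - img.shape[1] % block
    tiles = (img[:h, :w]
             .reshape(h // block, block, w // block, block)
             .swapaxes(1, 2)
             .reshape(-1, block * block))
    return tiles.mean(axis=1), tiles.std(axis=1, ddof=1)

def noise_slope(img, block=8):
    """Fit log10(sigma) = beta * log10(mu) + c over all tiles; pure shot noise gives beta near 0.5."""
    mu, sigma = block_mean_std(img, block)
    keep = (mu > 0) & (sigma > 0)  # logs are undefined for empty or perfectly flat tiles
    beta, _ = np.polyfit(np.log10(mu[keep]), np.log10(sigma[keep]), 1)
    return beta

# Synthetic check: Poisson photon counts with a constant mean inside each 8 x 8 tile
rng = np.random.default_rng(0)
tile_means = 10 ** rng.uniform(1, 4, size=(64, 64))  # 10 to 10,000 photons per pixel
photons = rng.poisson(np.kron(tile_means, np.ones((8, 8)))).astype(float)
print(noise_slope(photons))
```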

Spectral Statistics: Color Correlation

Physical light sources often have distinct spectral power distributions, meaning the energy in the Red and Green channels should vary somewhat independently (for example, a red taillight versus a green traffic signal). However, digital cameras introduce color coupling through Color Filter Arrays (CFAs) and demosaicing algorithms, which interpolate missing color values from neighboring pixels.[12] To quantify this coupling, the Pearson correlation coefficient between the Red and Green values was computed over all pixels $i$:

$$\rho_{RG} = \frac{\sum_i (R_i - \bar{R})(G_i - \bar{G})}{\sqrt{\sum_i (R_i - \bar{R})^2}\,\sqrt{\sum_i (G_i - \bar{G})^2}}$$

The physical radiance (Set A) showed the lowest coefficient of determination ($R^2$, the square of the Pearson coefficient), reflecting the natural spectral independence of the scene lights. The camera simulation (Set B) showed a higher value due to Bayer interpolation, and the GenAI images (Set C) exhibited the highest. Although the AI over-smooths color transitions, the difference between the camera and AI values is minimal (0.004), suggesting that the model has learned the strong color coupling present in its training data.
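The correlation metric is straightforward to compute. The sketch below also shows how coupling raises it. It models only one coupling mechanism, overlapping color-filter sensitivities, through a hypothetical 3×3 crosstalk matrix. It does not model demosaicing, and the matrix values are illustrative assumptions.

```python
import numpy as np

def rg_correlation(rgb):
    """Pearson rho between the R and G values of all pixels in an (H, W, 3) image, plus R^2."""
    rgb = np.asarray(rgb, dtype=float)
    rho = np.corrcoef(rgb[..., 0].ravel(), rgb[..., 1].ravel())[0, 1]
    return rho, rho ** 2

rng = np.random.default_rng(0)
scene = rng.random((128, 128, 3))  # independent channels: no spectral coupling
# Hypothetical crosstalk: each output channel leaks 20% from a neighbouring channel,
# standing in for overlapping color-filter sensitivities (demosaicing is not modelled).
crosstalk = np.array([[0.8, 0.2, 0.0],
                      [0.2, 0.8, 0.0],
                      [0.0, 0.2, 0.8]])
coupled = scene @ crosstalk.T  # out[..., i] = sum_j crosstalk[i, j] * scene[..., j]

rho_scene, _ = rg_correlation(scene)
rho_coupled, r2_coupled = rg_correlation(coupled)
print(rho_scene, rho_coupled)
```

Even modest leakage between channels raises the R and G correlation from near zero to a substantial value. This illustrates how the camera pipeline, and a model trained on camera output, can show strong channel coupling that the radiance itself lacks.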

Optical Statistics: Point Spread Function (PSF)

The most critical test of physical realism in night scenes is the Point Spread Function (PSF). High-intensity point sources should diffract according to the wave nature of light and the shape of the aperture.[12] The simulation used a triangular aperture, which physically mandates a 6-point starburst pattern. The effective PSF was extracted by cropping a window of half-width $r$ around the maximum-intensity pixel of each image $I$:

$$(x^*, y^*) = \arg\max_{x, y} I(x, y), \qquad \mathrm{PSF}(u, v) = I(x^* + u,\; y^* + v), \quad |u|, |v| \le r$$

As visually demonstrated in Figure 11, the camera simulation (Set B) faithfully rendered the 6-point starburst, verifying the wave optics model. The GenAI images (Set C), despite being prompted with "photorealistic", rendered a generic Gaussian blob. The diffusion model appears to treat the bright light as a semantic object to be smoothed rather than as a physical wavefront to be diffracted. This failure suggests that the AI operates on statistical priors of what a light looks like rather than on the physical laws of how light behaves. Although the comparison is primarily visual in this presentation, the PSF analysis appears to be the strongest discriminator between the datasets.
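The PSF extraction step (a crop centred on the brightest pixel) can be sketched as follows. The half-width of 15 pixels is a hypothetical value, since the crop size is not stated here. Zero-padding keeps crops valid when the brightest pixel lies near the image border. Normalizing the crop to unit sum is an added choice that makes PSFs from different images comparable.

```python
import numpy as np

def extract_psf(lum, half_size=15):
    """Crop a (2*half_size+1)^2 window centred on the brightest pixel, normalized to unit sum."""
    lum = np.asarray(lum, dtype=float)
    y0, x0 = np.unravel_index(np.argmax(lum), lum.shape)
    padded = np.pad(lum, half_size, mode="constant")  # zero border so edge crops keep their size
    # (y0, x0) sits at (y0 + half_size, x0 + half_size) in the padded array
    crop = padded[y0:y0 + 2 * half_size + 1, x0:x0 + 2 * half_size + 1]
    return crop / crop.sum()

# Example: a Gaussian "light" centred at row 40, column 70 of a dark frame
y, x = np.mgrid[0:100, 0:100]
img = np.exp(-((y - 40) ** 2 + (x - 70) ** 2) / (2 * 2.0 ** 2))
psf = extract_psf(img)
```

Because the crop is centred on the peak, PSFs from Set B and Set C can be overlaid or differenced pixel by pixel.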

Conclusion

Summary of Outcomes

This project successfully established a radiometric forensic framework to distinguish between physically simulated photographs and artificially generated images. A triplet dataset including spectral radiance, simulated photography, and artificially generated imagery was created for a rigorous evaluation of generative AI's physical fidelity. The analysis revealed that while Stable Diffusion v1.5 is highly effective at mimicking the semantic appearance of a photograph, it often fails to respect the radiometric laws of physics. Specifically:

Spatial Statistics: The AI learned the global blur characteristic of a lens, matching the power law slope of the camera simulation (-2.82 vs -2.93) rather than the sharper physical scene (-2.30).

Photometric Statistics: The AI failed to replicate signal-dependent photon shot noise. While the camera simulation showed a strong intensity-to-variance relationship (slope = 1.94), the AI produced a uniform noise texture (slope = 0.80) unrelated to photon arrival statistics.

Spectral Statistics: The AI exhibited high color channel correlation, nearly identical to that of the camera simulation, suggesting it has learned the demosaicing artifacts present in its training data of internet photographs.

Optical Statistics: The most significant visual discriminator was the Point Spread Function. The AI rendered bright light sources as generic Gaussian blobs, completely missing the wave-optics diffraction patterns (starbursts) mandated by the physical aperture.

Limitations

- Model Improvements: Stable Diffusion v1.5 is from 2022. While it is a standard baseline, newer architectures may have different statistical priors and biases.

- Prompt Bias: The prompts used to guide the artificial image generation (for example, the negative prompt "blur, noise, grain, cartoon") may have encouraged a reduction in image noise, inadvertently violating photon statistics.

- Spatial Bottleneck: The reference images had to be downsampled to match the Stable Diffusion model’s native resolution, which inherently acts as a low-pass filter.

- Single-Frame Analysis: While the analysis on snapshot images is intriguing, the natural progression would be to consider full automotive videos to evaluate statistics related to temporal consistency.

Future Work

- Train on Radiometric Data: Instead of training a model on internet JPEGs, train a diffusion model directly on raw sensor data to learn physical units like photons.

- Physics-Guided Adapters: Develop low-rank adaptations (LoRAs) that can be plugged into general-purpose models to enforce physical light constraints.

- Hybrid Rendering: Use physically based ray tracing (ISET3D/PBRT) and camera simulation (ISETCam) for the geometry, optics, and motion, then finish the image with an AI model for texture synthesis.

- Downstream Perception Validation: Beyond pixel statistics, assess synthetic images with task performance, such as autonomous driving, to see if systems fail from hallucinated textures and obstacles.

References

Appendix

The dataset and code used in this project can be found at https://github.com/mikesomms/The-Statistical-Fingerprint-of-AI-Generated-Images.git.