Irtorgb

Introduction

Near-infrared (NIR) images are widely used in remote sensing and surveillance because they make it possible to segment a scene according to the material of each object. Although NIR imaging makes object detection easier, its monochrome nature conflicts with human visual perception, so the images may not be user friendly. The lack of color discrimination, or the presence of wrong colors, limits how well people understand NIR images and can even lead to wrong judgements. Colorizing grayscale NIR images is therefore desirable.

Colorizing NIR images is challenging because a single channel must be mapped into a three-dimensional color space with unknown inter-channel correlation, which greatly reduces the effectiveness of traditional color correction and color transfer methods. Moreover, since surface reflection in the NIR band is material dependent, some objects may be missing from an NIR scene because they are transparent to NIR. Therefore, unlike grayscale image colorization, which only estimates chrominance, IR colorization requires estimating both the chrominance and the luminance, which adds considerable complexity to the problem.

Traditional colorization methods extract the color distribution from an input image and fit it to the color distribution of the output image. Typical methods [1-2] segment the image into smaller regions that receive the same color, then retrieve a color palette for each region to estimate the corresponding chrominance. Recent approaches [3-5] use deep neural networks to colorize images automatically. Some [3] train a network from scratch to estimate chrominance values from monochrome images; others [4,5] start from a pre-trained model and apply transfer learning to adapt it to their own colorization task. All of this work shows that deep learning techniques provide promising solutions for automatic colorization.

In this project, we propose several machine learning solutions, namely the L3 (Local, Linear, Learned) method and a neural-network-based model, to find an appropriate mapping from NIR to an RGB visible-spectrum representation, to which human eyes are more sensitive. The results are evaluated with CIELAB ΔE and MSE.

Method

L3 Method

The L3 (Local, Linear, Learned) method [6] combines machine learning with image systems simulation to automate the design of the image processing pipeline. It comprises two main steps: rendering and learning. The rendering step adaptively selects from a stored table of affine transformations to convert the raw IR camera sensor data into RGB images, and the learning step learns and stores the transformations used in rendering.
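The learn-then-render idea can be illustrated with a minimal NumPy sketch. It is not the isetL3 implementation: the patch size, the `classify` rule (binning patches by mean intensity), and all function names are assumptions chosen only to show how a table of per-class affine transforms is learned by least squares and then looked up at render time.

```python
import numpy as np

def extract_patches(img, size=3):
    """Return every size x size patch of a 2-D image as one row per patch."""
    h, w = img.shape
    r = size // 2
    rows = [img[y - r:y + r + 1, x - r:x + r + 1].ravel()
            for y in range(r, h - r) for x in range(r, w - r)]
    return np.asarray(rows)

def classify(patches, n_levels=4):
    """Hypothetical class rule: bin each patch by its mean intensity in [0, 1]."""
    means = patches.mean(axis=1)
    return np.minimum((means * n_levels).astype(int), n_levels - 1)

def with_bias(patches):
    """Append a constant column so each transform is affine, not just linear."""
    return np.hstack([patches, np.ones((len(patches), 1))])

def train_l3(ir, rgb, size=3, n_levels=4):
    """Learning step: fit one affine transform (IR patch -> centre RGB) per class."""
    r = size // 2
    patches = extract_patches(ir, size)
    targets = rgb[r:-r, r:-r].reshape(-1, 3)
    labels = classify(patches, n_levels)
    X = with_bias(patches)
    table = {}
    for c in range(n_levels):
        mask = labels == c
        if mask.any():
            table[c] = np.linalg.lstsq(X[mask], targets[mask], rcond=None)[0]
    return table

def render_l3(ir, table, size=3, n_levels=4):
    """Rendering step: look up the stored transform for each patch and apply it."""
    r = size // 2
    patches = extract_patches(ir, size)
    labels = classify(patches, n_levels)
    X = with_bias(patches)
    out = np.zeros((len(patches), 3))
    for c, W in table.items():
        mask = labels == c
        out[mask] = X[mask] @ W
    h, w = ir.shape
    return out.reshape(h - 2 * r, w - 2 * r, 3)
```

Patches whose class was never seen during training are left at zero in this sketch; a real pipeline would need a fallback for them.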

Dataset

Our input dataset consists of the original NIR scene images, each with 6 fields (mcCOEF, basis, comment, illuminant, fov, dist). We started with 26 such images. Adapting the script in L3, we first created an RGB sensor and from it generated the corresponding IR sensor with an irPassFilter. These two sensors then read in the NIR images, along with the padded spectral radiance optical image computed from those spectral irradiance NIR images (the padding allows for light spreading from the edge of the scene), and sensorCompute(irSensor/rgbSensor, oi) outputs the sensor volts (electrons) at each pixel of the optical image. Finally, we ran ipCompute on these sensor volt images to obtain the final sensor data images after demosaicing, sensor color conversion, and illuminant correction.

Note that the RGB output images used for training have a reddish cast. We could not find a way to obtain normally colored RGB images and IR images of the same size: saving the images directly from the optical images gives the right hue at full size (606 × 481), but the corresponding IR images cannot be saved this way. For L3 training we therefore kept the original output of the image processing pipeline (ipCompute), which consists of IR and RGB images of size 198 × 242, with the RGB images showing a reddish cast.


Model and Attempted Improvements

We ran 4 experiments with our L3 model: (1) General L3 model: training on all images in the dataset except 4 test images, one from each category. (2) Single-category training: training on each of the 4 categories in the dataset (fruit, female, male, scenery) separately. (3) Single-channel training: training each of the R, G, and B channels against the IR images separately. (4) RGB-to-IR reverse training: "decolorizing" the images to get a sense of the difficulty of the mapping in each direction.

Results are demonstrated in the 'Results' section below.

CNN Method

Given the strong performance of CNN models in image processing tasks [7], we propose an integrated approach based on deep learning to perform a spectral transfer from NIR to RGB images. Inspired by Limmer et al. [5], we apply a Convolutional Neural Network (CNN) to directly estimate the RGB representation from a normalized NIR image. Then, to obtain better image quality, the raw colorized output of the CNN passes through an edge enhancement filter that transfers details from the high-resolution input IR image.

Dataset

Given the complexity of the spectral mapping, a deep CNN model with thousands or even millions of trainable variables is desirable. A large amount of data is therefore required to prevent overfitting, where the model fits the training set perfectly but loses the ability to generalize to a new IR image. For the CNN model, we use the RGB-NIR Scene Dataset [1], which consists of 477 images in 9 categories captured with both RGB and near-infrared (NIR) sensors.

Because single-category training gave comparatively good results in L3, we used only the Urban Building category, which consists of 102 high-resolution RGB/NIR images, for the CNN model. The dataset was split into 80% training data and 20% test data, and all images were then cropped into 64 × 64 patches to feed into the model.


Number of 64 × 64 patches used in the CNN model:

| Data Type / Data Use | Train | Test |
|---|---|---|
| Input IR (64 × 64) | 7572 | 1044 |
| Output RGB (64 × 64 × 3) | 7572 | 1044 |
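The split-and-crop step described above could look roughly like the following sketch. The function names, the random seed, and the non-overlapping stride are assumptions; the report does not state how patches were sampled, so the patch counts produced here need not match the table.

```python
import numpy as np

def split_indices(n_images, train_frac=0.8, seed=0):
    """Shuffle image indices and split them into train and test sets."""
    rng = np.random.default_rng(seed)
    order = rng.permutation(n_images)
    n_train = int(round(train_frac * n_images))
    return order[:n_train], order[n_train:]

def crop_patches(image, patch=64, stride=64):
    """Cut an (H, W) or (H, W, C) image into patch x patch tiles.

    Assumes the image is at least patch x patch. Pixels that do not
    fill a complete tile at the right and bottom edges are dropped.
    """
    h, w = image.shape[:2]
    tiles = [image[y:y + patch, x:x + patch]
             for y in range(0, h - patch + 1, stride)
             for x in range(0, w - patch + 1, stride)]
    return np.stack(tiles)
```

Splitting by image before cropping, as in the text, keeps patches from the same photograph out of both sets at once. The same `crop_patches` call would be applied to each IR image and to its RGB counterpart so that input and target patches stay aligned.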

CNN Architecture

An illustration of our CNN architecture is given in Figure X. We used many convolution/deconvolution layers and relatively few pooling layers to increase the total number of non-linearities in the network, which helps it learn the complex mapping from IR to RGB. The activation function of each convolution layer is the ReLU function, $f(x) = \max(0, x)$, and each activation is followed by batch normalization to further reduce overfitting.

In total, the model has 2,377,331 parameters, of which 2,376,723 are trainable. During training, we used stochastic gradient descent to minimize the mean squared error (MSE) between the pixel estimates of the normalized output RGB image (oRGB) and the normalized ground truth RGB image (GT). MSE is defined as follows:

$$\mathrm{MSE} = \frac{1}{N} \sum_{i=1}^{N} \left( \mathrm{oRGB}_i - \mathrm{GT}_i \right)^2$$

where $N$ is the number of pixel values (pixels × color channels) and $i$ indexes them.
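As a small illustration of this loss, the sketch below computes the MSE between two normalized images and its gradient with respect to the prediction, which is the quantity gradient descent propagates back through the network. The function names are hypothetical and this is not the project's training code.

```python
import numpy as np

def mse(pred, target):
    """Mean squared error over every pixel value of two same-shaped arrays."""
    pred = np.asarray(pred, dtype=np.float64)
    target = np.asarray(target, dtype=np.float64)
    if pred.shape != target.shape:
        raise ValueError("pred and target must have the same shape")
    return float(np.mean((pred - target) ** 2))

def mse_grad(pred, target):
    """Gradient of the MSE with respect to pred: 2 * (pred - target) / N."""
    pred = np.asarray(pred, dtype=np.float64)
    target = np.asarray(target, dtype=np.float64)
    return 2.0 * (pred - target) / pred.size
```

Moving the prediction a small step against `mse_grad` lowers the loss, which is the basic update that stochastic gradient descent applies to the network weights through the chain rule.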

Post Processing

The raw output of the CNN is blurry and has visible noise, which might be caused by inaccurate pixel-wise estimates. The subsampling of the pooling layers, combined with the correlation property of the convolution layers, amplifies this effect [2]. Post-processing is therefore necessary to recover the lost details.

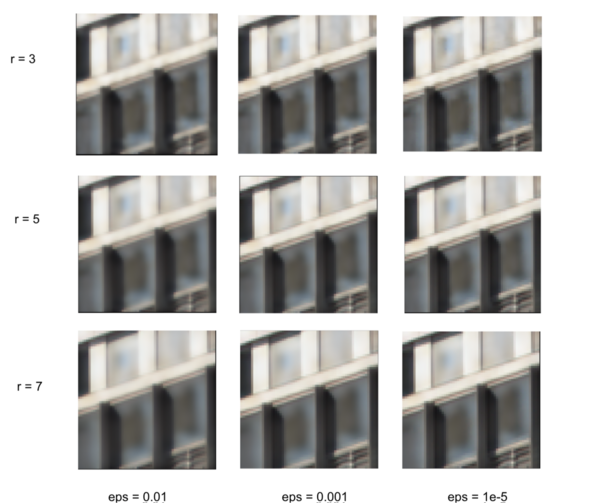

In the post-processing step, the raw colorized CNN output passes through a guided filter [8], which has an edge-preserving smoothing property, to transfer high-frequency details from the input IR image. This property is mainly controlled by two parameters: a) the window radius r and b) the noise parameter ε. In the 'Results' section, we compare different parameter sets for the guided filter. With fine-tuned parameters, object contours and edges are clearly more visible than in the raw output oRGB.
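To make the roles of r and ε concrete, here is a minimal NumPy sketch of a grayscale-guided filter in the style of He et al., with the IR image as the guide and each RGB channel filtered independently. It assumes intensities normalized to [0, 1]. The helper names and the box-filter border handling are choices made for this sketch, not details taken from the project code.

```python
import numpy as np

def box_mean(img, r):
    """Mean over a (2r+1) x (2r+1) window, shrinking the window at the borders."""
    h, w = img.shape
    integral = np.zeros((h + 1, w + 1))
    integral[1:, 1:] = img.cumsum(axis=0).cumsum(axis=1)
    y0 = np.clip(np.arange(h) - r, 0, h)
    y1 = np.clip(np.arange(h) + r + 1, 0, h)
    x0 = np.clip(np.arange(w) - r, 0, w)
    x1 = np.clip(np.arange(w) + r + 1, 0, w)
    total = (integral[y1][:, x1] - integral[y0][:, x1]
             - integral[y1][:, x0] + integral[y0][:, x0])
    area = (y1 - y0)[:, None] * (x1 - x0)[None, :]
    return total / area

def guided_filter(guide, src, r=5, eps=1e-5):
    """Filter src (H, W) or (H, W, C) using the 2-D guide image."""
    guide = np.asarray(guide, dtype=np.float64)
    src = np.asarray(src, dtype=np.float64)
    if src.ndim == 3:  # filter each colour channel with the same guide
        channels = [guided_filter(guide, src[..., c], r, eps)
                    for c in range(src.shape[2])]
        return np.stack(channels, axis=-1)
    mean_g = box_mean(guide, r)
    mean_s = box_mean(src, r)
    var_g = np.maximum(box_mean(guide * guide, r) - mean_g ** 2, 0.0)
    cov_gs = box_mean(guide * src, r) - mean_g * mean_s
    a = cov_gs / (var_g + eps)   # local linear gain; eps damps it in flat regions
    b = mean_s - a * mean_g      # local offset
    return box_mean(a, r) * guide + box_mean(b, r)
```

A larger r averages the local linear model over a wider neighbourhood, while a larger ε pushes the gain `a` toward zero so that the output approaches a plain local mean of the source.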

Results

L3

L3 model result

Main result for our L3 model (22 images for training set, 4 images for testing set):

| Category | Data Amount | ΔE |
|---|---|---|
| Training+Testing | 22+4 | 7.1602 |
| Test: Scenery | 4 | 15.7399 |
| Test: Male | 10 | 5.9912 |
| Test: Female | 4 | 5.1804 |
| Test: Fruit | 8 | 7.1626 |


Attempt 1 : Single Category Training

| Category | Data Amount | ΔE (Training) | ΔE (Testing) |
|---|---|---|---|
| Test: Scenery | 4 | 5.8738 | 5.2851 |
| Test: Male | 10 | 5.1895 | 4.8235 |
| Test: Female | 4 | 2.8871 | 8.0136 |
| Test: Fruit | 8 | 5.7514 | 3.9328 |


Attempt 2 : Single Color Channel Training

| Category | Data Amount | ΔE (Single Channel) | ΔE (Original) |
|---|---|---|---|
| Training | 22 | 7.1603 | |
| Test: Scenery | 4 | 15.7399 | 5.2851 |
| Test: Male | 10 | 5.9901 | |
| Test: Female | 4 | 5.1816 | |
| Test: Fruit | 8 | 7.1626 | |

Attempt 3 : RGB to IR Reverse Training

| Category | MSE (RGB to IR) | MSE (IR to RGB) |
|---|---|---|
| Training | 0.05556 | 0.07572 |
| Test: Scenery | 0.04430 | 0.14670 |
| Test: Male | 0.06464 | 0.04639 |
| Test: Female | 0.03845 | 0.06328 |
| Test: Fruit | 0.04301 | 0.06706 |

CNN

CNN model result

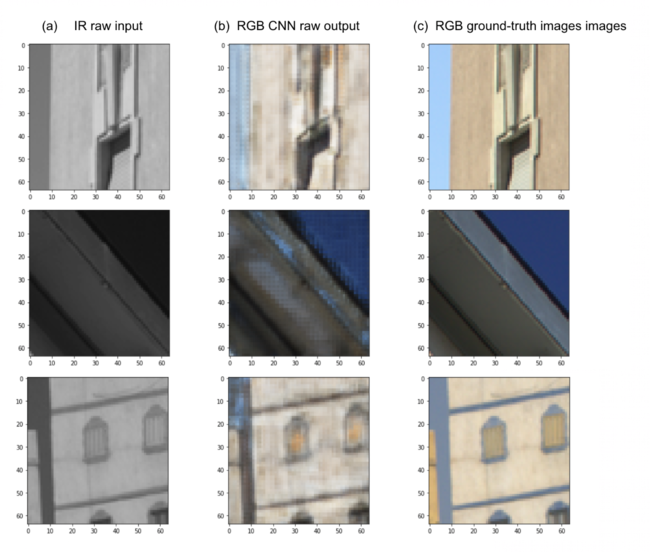

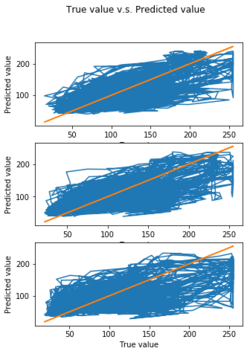

To evaluate the results against the ground truth RGB images, we used the MSE metric to quantify pixel differences across the image and the CIELAB ΔE metric to measure differences at the level of human perception. We also plot the relation between true and predicted RGB values to show how close the predictions are to the ground truth image. We trained the CNN model for 1000 epochs using CUDA Toolkit 9.0 on an Nvidia GPU [4]. After 1000 epochs the training and test errors were still decreasing, although more slowly; further training might give a better result, but we stopped because of time constraints. The following sections show our current colorization results on the training and test sets.
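For reference, a mean CIELAB ΔE between a predicted and a ground truth image can be computed along the following lines. The report does not say which ΔE formula or RGB encoding was used, so this sketch assumes sRGB values in [0, 1], a D65 white point, and the CIE76 formula (Euclidean distance in Lab). The function names are hypothetical.

```python
import numpy as np

# Linear sRGB -> CIE XYZ matrix and reference white, both for D65.
_RGB_TO_XYZ = np.array([[0.4124564, 0.3575761, 0.1804375],
                        [0.2126729, 0.7151522, 0.0721750],
                        [0.0193339, 0.1191920, 0.9503041]])
_WHITE_D65 = np.array([0.95047, 1.0, 1.08883])

def srgb_to_lab(rgb):
    """Convert sRGB values in [0, 1] with shape (..., 3) to CIELAB."""
    rgb = np.clip(np.asarray(rgb, dtype=np.float64), 0.0, 1.0)
    # Undo the sRGB transfer curve to get linear light.
    linear = np.where(rgb <= 0.04045, rgb / 12.92,
                      ((rgb + 0.055) / 1.055) ** 2.4)
    xyz = linear @ _RGB_TO_XYZ.T / _WHITE_D65
    delta = 6.0 / 29.0
    f = np.where(xyz > delta ** 3, np.cbrt(xyz),
                 xyz / (3.0 * delta ** 2) + 4.0 / 29.0)
    lightness = 116.0 * f[..., 1] - 16.0
    a = 500.0 * (f[..., 0] - f[..., 1])
    b = 200.0 * (f[..., 1] - f[..., 2])
    return np.stack([lightness, a, b], axis=-1)

def mean_delta_e(pred_rgb, true_rgb):
    """Average CIE76 colour difference over all pixels."""
    diff = srgb_to_lab(pred_rgb) - srgb_to_lab(true_rgb)
    return float(np.mean(np.sqrt(np.sum(diff ** 2, axis=-1))))
```

Other ΔE variants such as CIE94 or CIEDE2000 weight lightness, chroma, and hue differently and would give different numbers from this CIE76 sketch.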

(1) Train set results

Metric 1: MSE = 0.119

Metric 2: CIELAB ΔE = 2.3265


(2) Test set result

Metric 1: MSE = 0.0134

Metric 2: CIELAB ΔE = 4.8342


The training set, with its smaller MSE, performs better than the test set in three respects: a) the images are visually more colorful, b) the predicted RGB values are concentrated more tightly around the true line, and c) the CIELAB ΔE is smaller, which indicates a smaller visual difference.

Inference performance also varies within the test set. The first two rows of images look better than the third row. Our CNN apparently failed to learn the color details of the colorful artificial objects in the third image, possibly because the training set lacks colorful images. The Urban Building dataset is filled with modern buildings and blue sky, which have relatively neutral color distributions. So when the trained model encounters a different color distribution in the test set, it fails to generalize to the new IR image.

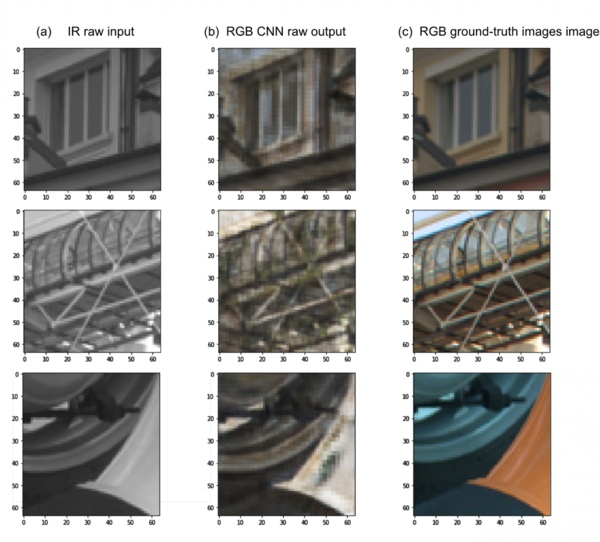

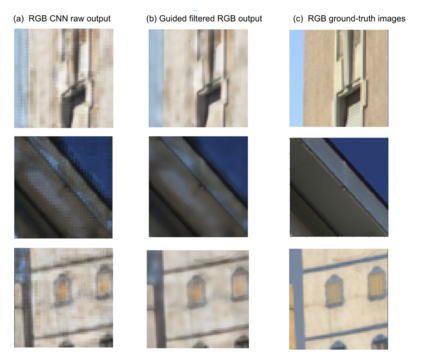

Guided Filter result

The raw output images of the CNN in the last section look blurry; it seems that we recover color at the expense of resolution. We therefore pass the results through a guided filter. The following shows the filtered results under different combinations of two parameters: a) the local window radius r and b) the noise parameter ε. The window radius determines the size of the region smoothed by the local filter, and ε controls its denoising strength. We tried 3 common window radii and 3 values of ε. The experimental results are given below. We found that a guided filter with window radius r = 5 and ε = 1e-5 gives better edge preservation and smoothing for our problem.

CNN Final result

In this section, we give the final results of the integrated CNN approach.


Conclusions

Todo: compare L3 and CNN

Future work: We plan to collect a larger and more comprehensive dataset covering a variety of color distributions to enable multi-category training. This might give the CNN model better inference ability on new IR inputs. We could also try a different CNN model. For now, we train the R, G, and B channels jointly, but we could also train them separately. The triplet DCGAN model [9] is a good reference for this. It extends the Generative Adversarial Network to image processing tasks: a generative model G captures the data distribution and generates the R, G, and B channels separately, which are then combined into a normal RGB image, while a discriminative model D estimates the probability that a sample came from the training data rather than from G. In this way, we could better preserve the information within each channel.

Reference

[1] A. Levin, D. Lischinski, and Y. Weiss, “Colorization using optimization,” ACM Transactions on Graphics, vol. 23, no. 3, pp. 689–694, 2004.

[2] L. Yatziv and G. Sapiro, “Fast image and video colorization using chrominance blending,” IEEE Transactions on Image Processing, vol. 15, no. 5, pp. 1120–1129, 2006.

[3] S. Iizuka, E. Simo-Serra, and H. Ishikawa, “Let there be color!: Joint end-to-end learning of global and local image priors for automatic image colorization with simultaneous classification,” Proceedings of ACM SIGGRAPH, vol. 35, no. 4, 2016.

[4] G. Larsson, M. Maire, and G. Shakhnarovich, “Learning representations for automatic colorization,” Tech. Rep. arXiv:1603.06668, 2016.

[5] M. Limmer and H. P. A. Lensch, “Infrared colorization using deep convolutional neural networks,” in ICMLA 2016, Anaheim, CA, USA, pp. 61–68, 2016.

[6] isetL3, GitHub source code.

[7] K. Simonyan and A. Zisserman, “Very deep convolutional networks for large-scale image recognition,” arXiv preprint arXiv:1409.1556, 2014.

[8] K. He, J. Sun, and X. Tang, “Guided image filtering,” in European Conference on Computer Vision, Springer, Berlin, Heidelberg, pp. 1–14, 2010.

[9] P. L. Suárez, A. D. Sappa, and B. X. Vintimilla, “Infrared image colorization based on a triplet DCGAN architecture,” in IEEE Conference on Computer Vision and Pattern Recognition Workshops (CVPRW), pp. 212–217, 2017.

[10] H. Jiang, Q. Tian, J. Farrell, et al., “Learning the image processing pipeline,” IEEE Transactions on Image Processing, vol. 26, no. 10, pp. 5032–5042, 2017.

Appendix I

Github Page for this project: [5]

Appendix II

Todo: conclusion + future work + contribution

Todo: polish words

Todo: image label size arrangement

Todo: code organization

Todo: reorganize references

Todo: email professor