Creating an Automated Image Processing Pipeline using a Convolutional Neural Network

Abstract

The Local, Linear, Learned (L3) method developed by Qiyuan Tian et al. automates the image processing pipeline of a camera by applying learned linear transforms to the sensor data in order to reproduce the original image incident on the outer lens. In this project, the team aimed to create a similarly automated image pipeline for a camera with an RGBW sensor pattern, using a convolutional neural network (CNN). Based on results obtained for two datasets, one with sensor data taken directly from a camera and the other with sensor data simulated in software, the L3 method appeared to perform better when the dataset is smaller and relatively homogeneous, while the CNN appeared to perform better when the dataset is larger and relatively heterogeneous.

Introduction

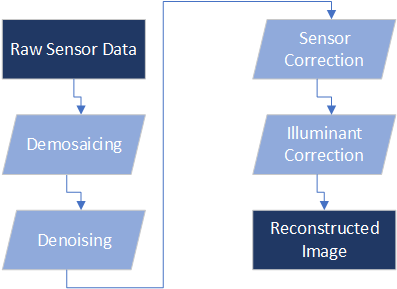

The traditional image processing pipeline within a camera executes a series of steps to reconstruct the original scene from the voltage readings of its sensor; some of the key steps in this process are shown in Figure 1.

Each step in this pipeline runs algorithms whose parameters have been "tuned" by engineers or specialists, which makes the pipeline both computationally expensive and labor intensive to design.

One attempt to automate the image processing pipeline comes from Qiyuan Tian et al. at Stanford University in the form of the Local, Linear, Learned (L3) method. L3 classifies patches of the sensor data and applies to each patch a linear transformation whose parameters are learned for its class, in order to reconstruct the original image. This method has demonstrated viable performance on various datasets and different sensor color filter array (CFA) patterns.

Machine learning is an area of artificial intelligence that has gained increased attention over the past few years. Within this field, convolutional neural networks have come to prominence, especially in applications such as image classification. This raises the question: would it be possible to use convolutional neural networks to automate the image processing pipeline of a camera?

Background

What is the Data?

In order to compare the differences in performance between the L3 algorithm and a convolutional neural network, two datasets were selected; these are described below.

Dataset 1: Sensor Data from Camera

Repository: Stanford SCIEN Image Repository

Image Description: Garden Scenery

Camera: Nikon D200

Number of Images: 39

Target Image Format: JPEG

Sensor Data Format: PGM

Sensor CFA Type: RGBW

Dataset 2: Simulated Sensor Data

Repository: Caltech Computational Vision Image Repository

Image Description: Pasadena Houses and Entrances

Camera: Unknown

Number of Images: 363

Target Image Format: JPEG

Sensor Data Format: PGM - Generated using ISETCam

Sensor CFA Type: RGBW

The key differences between the two datasets are listed below:

- Dataset 1 contains sensor data taken directly from a Nikon D200 camera together with the corresponding reconstructed images, whereas the sensor data in Dataset 2 were simulated using the ISETCam software package

- Dataset 1 is relatively small with 39 images, whereas Dataset 2 is relatively large with 363 images

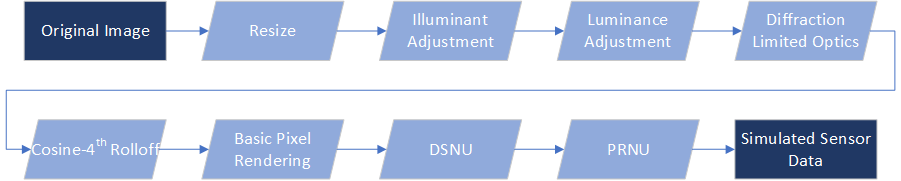

The steps executed in ISETCam to create the simulated sensor data for Dataset 2 are shown in Figure 2.

Why Machine Learning?

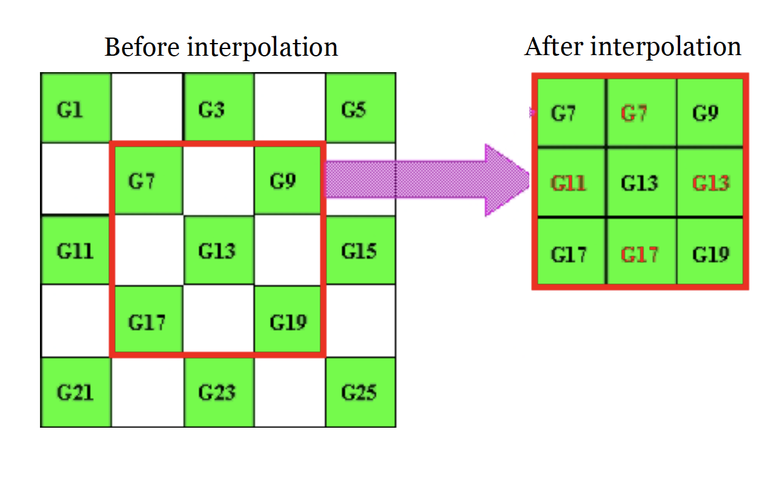

As Figure 3 shows, the task reduces to an interpolation problem: each sensor site records only one channel, and the missing values at each pixel must be predicted from the surrounding pixels. Machine learning methods work well in practice for interpolation problems of this kind.
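To make the interpolation framing concrete, the sketch below collapses a full RGB image into a single-channel mosaic, so that every pixel keeps only one measurement. The 2x2 tile layout and the white channel modeled as the plain average of R, G, and B are illustrative assumptions; they are not the CFA geometry or spectral response of either dataset.

```python
import numpy as np

# Hypothetical 2x2 RGBW tile: the channel recorded at each sensor site.
# The real CFA layout used in the datasets is not specified here.
TILE = [["R", "G"],
        ["W", "B"]]

def mosaic_rgbw(rgb):
    """Collapse an (H, W, 3) RGB image into a single-channel (H, W) mosaic."""
    height, width, _ = rgb.shape
    # Assumed white response: plain average of the three color channels.
    planes = {"R": rgb[..., 0], "G": rgb[..., 1],
              "B": rgb[..., 2], "W": rgb.mean(axis=2)}
    raw = np.zeros((height, width), dtype=float)
    for dy in range(2):
        for dx in range(2):
            # Every second pixel, starting at (dy, dx), records this channel.
            raw[dy::2, dx::2] = planes[TILE[dy][dx]][dy::2, dx::2]
    return raw

rgb = np.random.default_rng(0).random((4, 4, 3))  # synthetic image
raw = mosaic_rgbw(rgb)                            # one value per pixel
```

Reconstructing `rgb` from `raw` means estimating, at every pixel, the two or three channel values that the mosaic discarded.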

Why Convolutional Neural Networks (CNN)?

Input images are very high dimensional. Parametric models with varying levels of non-linearity, such as fully connected artificial neural networks, require a large number of parameters to deal with such data. CNNs instead share parameters across local neighborhoods, which makes them translation equivariant (often loosely called translation invariant), a property that is very relevant for interpolation. Deep CNNs also capture both local and global information about the image.

Methods

CNN

Deep convolutional neural networks are known to work well in practice. This is primarily because, as the depth of the CNN increases, the receptive field of every neuron in the final layer increases, which means that the CNN gets to look at a larger portion of the image. However, this increase in receptive field comes at the cost of downsampling the image to spatially very small feature activations.
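The growth of the receptive field with depth can be computed with the standard recurrence for stacked convolution and pooling layers. The sketch below is a small calculator; the layer stack (a 3x3 convolution followed by 2x2 stride-2 pooling) is a hypothetical example, not the configuration used in this project.

```python
def receptive_field(layers):
    """Return the receptive field (in input pixels) after a stack of layers.

    `layers` is a list of (kernel_size, stride) pairs, applied in order.
    """
    rf = 1    # a single input pixel sees only itself
    jump = 1  # distance in input pixels between adjacent outputs
    for kernel, stride in layers:
        rf += (kernel - 1) * jump
        jump *= stride
    return rf

# Hypothetical block: 3x3 convolution, then 2x2 pooling with stride 2.
block = [(3, 1), (2, 2)]
for depth in (1, 2, 3, 4):
    print(depth, receptive_field(block * depth))
```

Each pooling layer doubles `jump`, so later convolutions widen the receptive field faster, while the feature map shrinks by the same factor.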

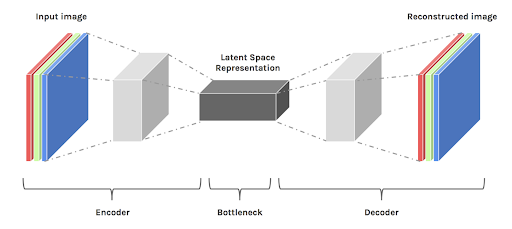

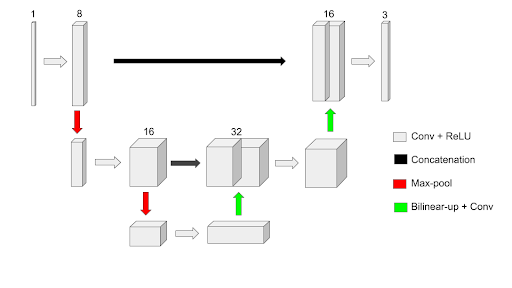

For the problem at hand, the input is a 3D matrix (with 1 channel) of raw sensor data, and the output is a 3D matrix (with 3 channels for the RGB values) with the same spatial dimensions as the input. Because a deep CNN downsamples its features, producing a full-resolution output requires significant upsampling, and hence an autoencoder architecture is naturally applicable to this problem.

One issue with such an autoencoder is that it tries to reconstruct the full spatial dimensions from a very small feature representation, which consists of high-level semantic information about the input. This means that the decoder has no access to low-level image details, and hence the output it produces is blurry.

A U-Net architecture (so called because of its shape) rectifies this problem by combining the low-level image details from the encoder with the features at every upsampling layer. The final architecture of our model is shown in Figure 6.

As can be seen, a 1 channel input (sensor data) is transformed to a 3 channel RGB image of the same spatial dimensions.
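The role of the skip connection can be illustrated with a toy forward pass. The sketch below is not the project's network: it uses one downsampling level, random 1x1 channel-mixing weights in place of trained 3x3 convolutions, and hypothetical channel counts. It only shows how coarse features are upsampled, concatenated with full-resolution features, and mapped to three output channels.

```python
import numpy as np

def downsample(x):
    """2x2 average pooling on a (C, H, W) array (H and W must be even)."""
    c, h, w = x.shape
    return x.reshape(c, h // 2, 2, w // 2, 2).mean(axis=(2, 4))

def upsample(x):
    """Nearest-neighbor 2x upsampling on a (C, H, W) array."""
    return x.repeat(2, axis=1).repeat(2, axis=2)

def conv1x1(x, weight):
    """Mix channels with a (C_out, C_in) matrix, followed by ReLU."""
    return np.maximum(np.einsum("oc,chw->ohw", weight, x), 0.0)

rng = np.random.default_rng(0)
raw = rng.random((1, 8, 8))                                    # 1-channel input

# Encoder: full-resolution features, then coarser features.
enc1 = conv1x1(raw, rng.standard_normal((4, 1)))               # (4, 8, 8)
enc2 = conv1x1(downsample(enc1), rng.standard_normal((8, 4)))  # (8, 4, 4)

# Decoder: upsample, then concatenate the encoder features (skip connection).
up = upsample(enc2)                                            # (8, 8, 8)
merged = np.concatenate([up, enc1], axis=0)                    # (12, 8, 8)
rgb = conv1x1(merged, rng.standard_normal((3, 12)))            # (3, 8, 8)
```

Without the concatenation, the last layer would see only `up`, whose fine spatial detail was averaged away by the pooling step.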

This CNN architecture is trained to minimize the objective $\lVert G - I \rVert_2$, where $I$ is the ground-truth RGB image and $G$ is the generated RGB image.
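As a minimal sketch, the objective can be evaluated on two arrays as follows. Whether the norm in the objective is squared is not stated; this example uses the unsquared Euclidean norm, which has the same minimizer as its square. The function name is hypothetical.

```python
import numpy as np

def l2_loss(generated, target):
    """Euclidean norm of the pixel-wise difference between two images."""
    diff = np.asarray(generated, dtype=float) - np.asarray(target, dtype=float)
    return float(np.sqrt(np.sum(diff ** 2)))

# Synthetic example: a 2x2 RGB image that is off by 1 at all 12 values.
loss = l2_loss(np.zeros((2, 2, 3)), np.ones((2, 2, 3)))  # sqrt(12)
```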

Because the network is fully-convolutional (consists of no fully connected layers), it can take inputs of any size and output the same size. This enables us to train with random crops of images and sensor data to augment and increase the dataset size.
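Random cropping has to cut the same window from the sensor data and from the target image so that the pair stays aligned. The sketch below shows one way to do this; the function name is hypothetical, and snapping the offsets to even values assumes a 2x2-periodic mosaic so that every crop starts on the same CFA phase.

```python
import numpy as np

def random_paired_crop(raw, rgb, size, rng):
    """Cut the same size x size window from the sensor data and its target."""
    h, w = raw.shape
    top = int(rng.integers(0, h - size + 1))
    left = int(rng.integers(0, w - size + 1))
    # Keep the offsets even so the crop starts on the same CFA phase
    # (assumes a 2x2-periodic mosaic).
    top -= top % 2
    left -= left % 2
    return (raw[top:top + size, left:left + size],
            rgb[top:top + size, left:left + size, :])

rng = np.random.default_rng(0)
raw = np.arange(64.0).reshape(8, 8)        # synthetic sensor data
rgb = np.stack([raw, raw, raw], axis=-1)   # synthetic target, aligned with raw
raw_crop, rgb_crop = random_paired_crop(raw, rgb, size=4, rng=rng)
```

Drawing a fresh crop at every training step yields many distinct examples per image, although crops from the same image remain strongly correlated.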

We train the network in multiple phases. Phase 1 involves training with low-resolution images, which enables faster training and convergence. Phases 2 and 3 involve increasing levels of resolution so that the network learns to capture finer details.

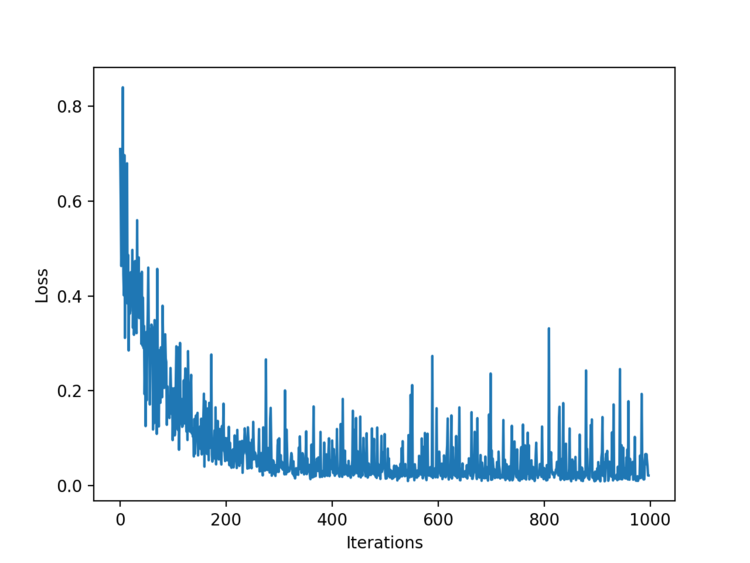

The loss curve for this network is shown in Figure 7.

L3

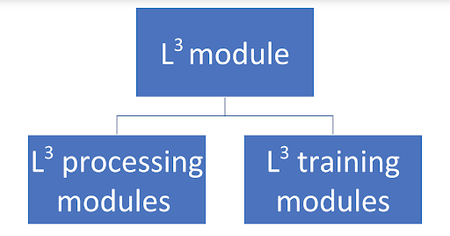

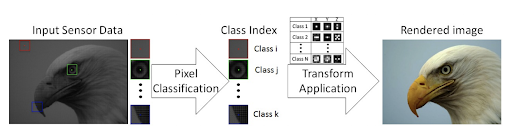

The Local, Linear, Learned (L3) method is a state-of-the-art approach to replacing the whole image processing pipeline. It works by applying local linear transformations to chunks (patches) of the sensor image. Each image is split into several patches and each patch is assigned to a class; the method then maps the sensor data at a pixel to its target output using the transform for that class. As shown in Figure 8, it has two modules: the training module and the processing module. The training module learns the linear transforms, one per class, and stores them in a look-up table (LUT); these transforms adapt to the specific training scenes, camera design, and desired outputs. The processing module classifies each patch of new sensor data, retrieves the matching transform from the LUT created in the previous step, and applies it to produce the output. Advantages of this method include its suitability for parallel computation and its good performance on small datasets. The complete L3 workflow is depicted in Figure 9.
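The two modules can be sketched in a few lines of NumPy. This is a simplified illustration under stated assumptions, not the published L3 implementation: the classification rule (CFA phase on an assumed 2x2 tile plus a coarse mean-response level), the patch size, and all function names are hypothetical, and sensor values are assumed to lie in [0, 1].

```python
import numpy as np

def patches_and_classes(raw, patch=5, levels=3):
    """Return flattened patches, a class id per patch, and center coordinates."""
    r = patch // 2
    h, w = raw.shape
    rows, cols = np.mgrid[r:h - r, r:w - r]
    rows, cols = rows.ravel(), cols.ravel()
    feats = np.stack([raw[y - r:y + r + 1, x - r:x + r + 1].ravel()
                      for y, x in zip(rows, cols)])
    # Assumed classification: CFA phase (2x2 tile) and mean response level.
    phase = (rows % 2) * 2 + (cols % 2)
    level = np.minimum((feats.mean(axis=1) * levels).astype(int), levels - 1)
    return feats, phase * levels + level, rows, cols

def train_l3(raw, target, **kw):
    """Training module: fit one linear transform per class (least squares)."""
    feats, classes, rows, cols = patches_and_classes(raw, **kw)
    rgb = target[rows, cols]                  # (N, 3) center-pixel targets
    table = {}                                # the look-up table (LUT)
    for c in np.unique(classes):
        m = classes == c
        table[int(c)] = np.linalg.lstsq(feats[m], rgb[m], rcond=None)[0]
    return table

def render_l3(raw, table, **kw):
    """Processing module: look up and apply the transform of each patch."""
    feats, classes, rows, cols = patches_and_classes(raw, **kw)
    out = np.zeros(raw.shape + (3,))          # border pixels are left at zero
    for c, kernel in table.items():
        m = classes == c
        out[rows[m], cols[m]] = feats[m] @ kernel
    return out

# Synthetic check: the target is an exact linear function of the sensor data.
rng = np.random.default_rng(0)
raw = rng.random((12, 12))
target = np.stack([raw, 2 * raw, 3 * raw], axis=-1)
table = train_l3(raw, target, patch=3)
out = render_l3(raw, table, patch=3)
```

Because every class is processed independently and rendering is a table lookup followed by a matrix product, the processing step parallelizes naturally across pixels.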

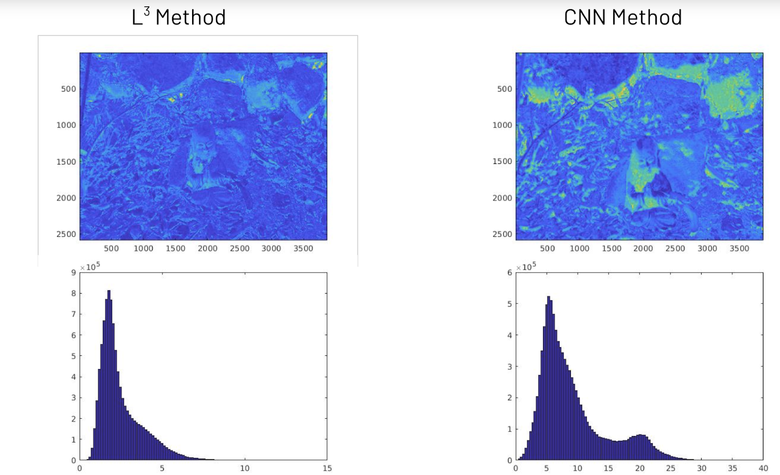

Results

We started with the Garden dataset, training both L3 and the CNN on its roughly 40 images. Figure 10 shows the results. The CNN output has a strong greenish hue, while the L3 output closely matches the actual image. The difference images and the corresponding histograms of S-CIELAB ΔE values show the same trend. We attribute the poor performance of the CNN to overfitting, since the model is trained on only about 40 images. Although random cropping raises the effective number of training examples well beyond 40, the CNN still learns predominantly from the green patches that dominate the dataset. Regularization is unlikely to help, because the model has seen few examples of other colors. This also explains the high mean S-CIELAB ΔE value, and it motivated experimenting with a larger and more varied dataset that is not dominated by a single color.

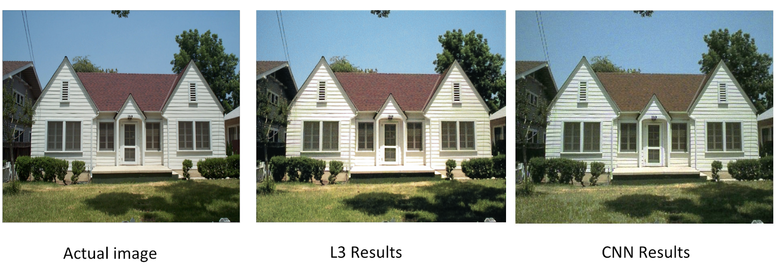

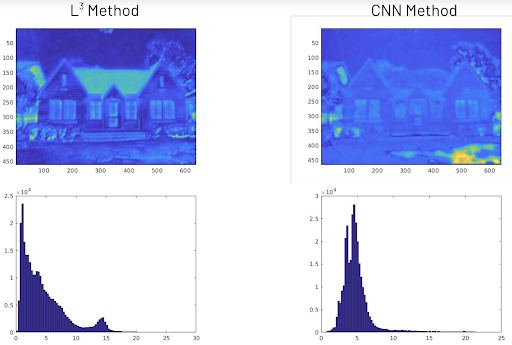

The results for the simulated sensor dataset, shown in Figure 12 and Figure 13, are more convincing: the CNN performs better on this dataset. The dataset has 363 images in total and, as mentioned previously, random cropping makes the number of training examples large. The data are split into 70% for training and 30% for testing, and no single color dominates the dataset. The histogram of S-CIELAB ΔE values for the CNN is concentrated around a mean of about 5. This supports the conclusion that the CNN with the proposed architecture outperforms the L3 method on this dataset. A further advantage of the CNN is speed: it takes only 30 ms to render a test image. Although the two methods cannot be compared directly on speed because they run on different platforms (Python for the CNN and MATLAB for L3), this is a short time for computing an image.
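A 70/30 split of this kind can be sketched as follows. The file names, the seed, and the rounding rule are hypothetical; the sketch splits at the image level before any cropping, which is one way to keep crops of a test image out of the training set.

```python
import random

def split_dataset(items, train_fraction=0.7, seed=0):
    """Shuffle and split a list of items into train and test subsets."""
    shuffled = list(items)
    random.Random(seed).shuffle(shuffled)
    cut = int(round(train_fraction * len(shuffled)))
    return shuffled[:cut], shuffled[cut:]

# Hypothetical file names standing in for the 363 image pairs.
names = [f"img_{i:03d}" for i in range(363)]
train, test = split_dataset(names)
print(len(train), len(test))  # 254 109
```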

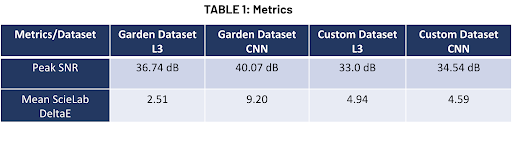

Finally, Table 1 compares the two methods using quantitative metrics: the mean S-CIELAB ΔE and the peak signal-to-noise ratio (PSNR) for both datasets. As expected, the mean S-CIELAB ΔE is higher for the CNN than for L3 on the Garden dataset, and the ordering is reversed on the simulated dataset. Interestingly, the CNN achieves a higher PSNR on both datasets. This suggests that the CNN, despite its poor color accuracy on the Garden dataset, rendered images with less noise than the L3 method; in other words, the CNN outputs are smoother. This is one of the main advantages of the proposed method.
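For reference, PSNR follows directly from the mean squared error between the reference and the rendered image. The sketch below assumes images scaled to the range [0, `peak`]; the function name and the default peak value are assumptions, not the project's evaluation code.

```python
import numpy as np

def psnr(reference, rendered, peak=1.0):
    """Peak signal-to-noise ratio in dB for images scaled to [0, peak]."""
    ref = np.asarray(reference, dtype=float)
    out = np.asarray(rendered, dtype=float)
    mse = np.mean((ref - out) ** 2)
    if mse == 0:
        return float("inf")  # identical images
    return float(10.0 * np.log10(peak ** 2 / mse))

# Synthetic example: a uniform error of 0.1 on a [0, 1] image gives 20 dB.
value = psnr(np.zeros((4, 4, 3)), np.full((4, 4, 3), 0.1))
```

PSNR weighs all per-pixel errors equally and does not model color perception, so it can rank two methods differently from a perceptual metric such as S-CIELAB ΔE.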

Conclusions

We started this project to compare the L3 method and CNNs as replacements for the image processing pipeline. We trained both methods on two datasets: Garden and Simulated Sensor Data. The CNN tends to overfit on a small dataset, where L3 is the better choice, while the CNN performs better when the dataset is large. In other words, when there is a good amount of variance in the dataset, the CNN captures the underlying structure and performs better; when the data are relatively homogeneous, L3 is the best choice. This result is supported by the mean S-CIELAB ΔE values, the PSNR values, visual inspection of the rendered images, and the difference images along with the histograms of S-CIELAB ΔE values.

Future Work

The current output images can be slightly noisy or blurry at high resolution. We attribute this to our simple optimization objective, whose optimum tends toward the average of the plausible outputs. The optimization objective can be improved by including additional terms such as:

- Perceptual loss - Minimize Euclidean distance between generated image and ground-truth in feature space using pre-trained feature extractors such as VGGNet.

- Gradient loss - Minimize Euclidean distance between local gradients in the generated image and ground-truth image to generate sharp predictions.

- Adversarial loss (GAN) - Generate realistic looking sharp images using adversarial training.

- ΔE approximation - As suggested during the presentation, we could use a linearized version of ΔE (obtained through a Taylor expansion) as our optimization objective to optimize directly for color. We expect this to outperform L3 on small datasets such as Garden as well.
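Of the terms listed above, the gradient loss is the simplest to sketch. The version below compares forward finite differences of the two images and averages the squared discrepancy; the choice of finite differences and of a mean squared form are assumptions, and the function name is hypothetical.

```python
import numpy as np

def gradient_loss(generated, target):
    """Mean squared difference between finite-difference image gradients."""
    gen = np.asarray(generated, dtype=float)
    tgt = np.asarray(target, dtype=float)
    dy = np.diff(gen, axis=0) - np.diff(tgt, axis=0)  # vertical gradients
    dx = np.diff(gen, axis=1) - np.diff(tgt, axis=1)  # horizontal gradients
    return float(np.mean(dy ** 2) + np.mean(dx ** 2))

# Synthetic example: a horizontal ramp versus a flat image.
ramp = np.tile(np.arange(4.0), (4, 1))   # each row is 0, 1, 2, 3
flat = np.zeros((4, 4))
edge_penalty = gradient_loss(flat, ramp)
```

A constant brightness offset leaves this term at zero, so it would complement a pixel-wise loss rather than replace it.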